The communications boom is unstoppable. One of the sectors pushing it hardest is video game streaming, and once you add the other platforms, the current figures are dizzying. For this reason NVIDIA, driven by its new technologies and architectures, set out to help everyone who lacks the equipment or money for a professional recording setup. That is how NVIDIA Broadcast was born, but what exactly is it?

According to NVIDIA, the platform with the most streaming today is Twitch, where the number of broadcasts rose a spectacular 89% over the last year, while the audience grew by 56%.

As we well know, the main streaming software, such as OBS, needs a powerful CPU, taking performance away from games and from the PC in general, because encoding at good quality demands a lot of resources. This forces an ordinary user to choose between stream quality and game performance. With the first RTX cards this was largely solved by offloading the work to the GPU's dedicated hardware encoder (NVENC), and now NVIDIA is taking the next step with Broadcast.

NVIDIA Broadcast: feel like a professional streamer

With the audio and video performance and quality problem solved, Huang's team wanted to go a step further and help any user who owns one of their RTX cards. Our webcam and the room we are in create a unique atmosphere, but it can look unprofessional once we have a large audience.

Spending large amounts of money on a chroma key, lights and spotlights, professional microphones and so on is not something everyone can afford, much less minors. This is precisely where NVIDIA Broadcast comes in: a powerful piece of software that helps us improve any stream from the same place we have always streamed from.

To do this, it uses the power of AI to transform our room virtually, but what is it based on?

Three functions that rely on artificial intelligence

NVIDIA holds the data center and artificial intelligence market in high regard; not for nothing, that business recently brought in more revenue than its gaming division. For this reason, and after acquiring Mellanox and announcing its purchase of ARM, it has become one of the largest companies in the world in AI.

NVIDIA Broadcast is therefore built on three clearly defined features powered by this AI:

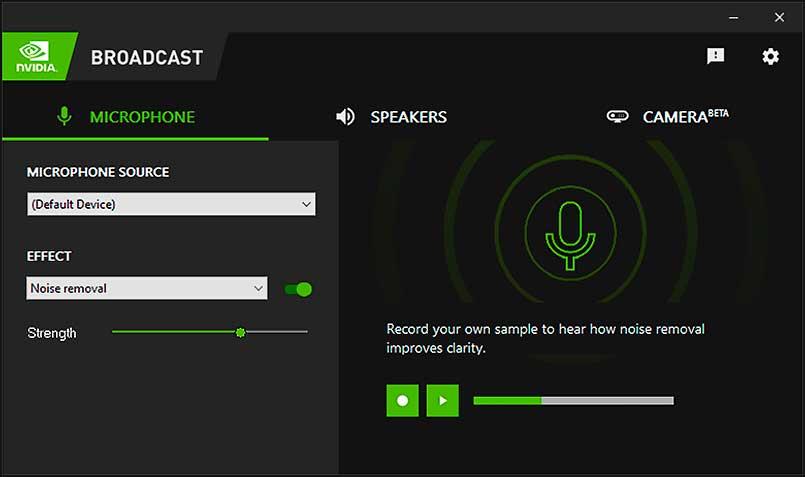

- Noise removal.

- Auto Frame.

- Virtual background.

You don't have to be a genius to understand what each of them does, but we will explain them briefly anyway. Noise removal is a feature we saw last year that surprised everyone with how well it works. It can filter out background noise and keep our voice clear even if a plane were taking off next to us, which is simply amazing.

Auto Frame is possibly the most innovative part of NVIDIA Broadcast. It uses AI to track our head and keep it centered on the screen, following us as we move, even if we shift around in the chair. It basically does the job of a camera operator, without having to pay for one, all thanks to AI.
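To make the "virtual camera operator" idea more concrete, here is a minimal sketch of the framing step alone, assuming that an AI face detector (not shown, and not NVIDIA's actual code) already gives us the head position in each frame. The names `auto_frame`, `SmoothFramer` and the `alpha` smoothing parameter are hypothetical: the sketch centers a smaller crop window on the head, keeps it inside the full webcam image, and eases it toward the head so the view pans smoothly instead of jumping.

```python
from dataclasses import dataclass


@dataclass
class Crop:
    x: int  # left edge of the crop window, in pixels
    y: int  # top edge of the crop window, in pixels
    w: int  # crop width, in pixels
    h: int  # crop height, in pixels


def clamp(value: float, low: float, high: float) -> float:
    """Limit value to the range [low, high]."""
    return max(low, min(value, high))


def auto_frame(head_x, head_y, frame_w, frame_h, crop_w, crop_h) -> Crop:
    """Center a crop_w x crop_h window on the head, kept fully inside the frame."""
    left = clamp(head_x - crop_w / 2, 0, frame_w - crop_w)
    top = clamp(head_y - crop_h / 2, 0, frame_h - crop_h)
    return Crop(round(left), round(top), crop_w, crop_h)


class SmoothFramer:
    """Hypothetical helper: eases the crop toward the head on every frame."""

    def __init__(self, frame_w, frame_h, crop_w, crop_h, alpha=0.2):
        self.frame_w, self.frame_h = frame_w, frame_h
        self.crop_w, self.crop_h = crop_w, crop_h
        self.alpha = alpha  # 0 < alpha <= 1; smaller means slower, smoother pans
        self.cx = None      # smoothed head x position
        self.cy = None      # smoothed head y position

    def update(self, head_x, head_y) -> Crop:
        if self.cx is None:
            # First frame: jump straight to the detected head position.
            self.cx, self.cy = head_x, head_y
        else:
            # Exponential moving average: move a fraction of the way each frame.
            self.cx += self.alpha * (head_x - self.cx)
            self.cy += self.alpha * (head_y - self.cy)
        return auto_frame(self.cx, self.cy, self.frame_w, self.frame_h,
                          self.crop_w, self.crop_h)


if __name__ == "__main__":
    framer = SmoothFramer(1920, 1080, 1280, 720, alpha=0.5)
    print(framer.update(960, 540))   # head centered in a 1080p frame
    print(framer.update(1560, 540))  # head moved right; crop follows halfway
```

The smoothing step is a design choice for the sketch: without it, every small jitter in the detector's output would shake the picture, which is exactly what a human camera operator avoids.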

Finally, the virtual background. We have all recorded with our office, study or bedroom behind us; it is very common, especially when we are starting out. A chroma key and a complete setup for it cost good money, but now NVIDIA uses AI to remove the background from our camera image and replace it with any picture, even the game we are playing, or simply to blur it so that attention stays on us.
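Conceptually, once an AI segmentation model has estimated, for every pixel, how likely it is to belong to the person (a "mask" between 0 and 1), replacing or blurring the background is a per-pixel blend: `out = mask * camera + (1 - mask) * background`. The minimal sketch below assumes such a mask already exists (the model itself is not shown) and uses tiny grayscale images as plain Python lists; the function names are hypothetical, and the simple box blur is a stand-in for whatever blur NVIDIA actually uses.

```python
Image = list[list[float]]  # grayscale image: rows of pixel values in [0, 255]
Mask = list[list[float]]   # per-pixel "this is the person" weight in [0, 1]


def composite(frame: Image, mask: Mask, background: Image) -> Image:
    """Blend pixel by pixel: keep the person where mask is 1, background where 0."""
    return [
        [m * f + (1.0 - m) * b for f, m, b in zip(frow, mrow, brow)]
        for frow, mrow, brow in zip(frame, mask, background)
    ]


def box_blur(img: Image, radius: int = 1) -> Image:
    """Average each pixel with its neighbours inside a (2r+1) x (2r+1) window."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            total, count = 0.0, 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:  # skip pixels off the edge
                        total += img[yy][xx]
                        count += 1
            row.append(total / count)
        out.append(row)
    return out


def blur_background(frame: Image, mask: Mask, radius: int = 1) -> Image:
    """Background blur: the 'new' background is just a blurred copy of the frame."""
    return composite(frame, mask, box_blur(frame, radius))


if __name__ == "__main__":
    camera = [[10, 20], [30, 40]]
    person = [[1.0, 0.0], [0.5, 1.0]]  # 0.5 = soft edge between person and room
    game = [[100, 100], [100, 100]]    # e.g. a frame of the game being played
    print(composite(camera, person, game))
```

A soft mask value such as 0.5 mixes both images, which is what hides hard, jagged edges around hair and shoulders when the background is swapped.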

What does NVIDIA Broadcast need to work?

It's a bit of a mystery for now, but NVIDIA appears to be working with major software companies such as Twitch, Zoom and Discord, as well as others not yet disclosed. Although it has not been released, the company is expected to launch the driver that supports it, together with GeForce Experience, when the RTX 3000 series reaches stores, making its driver one more weapon in its fight against AMD.

The requirements are really simple but, at the same time, very exclusive, since the company asks for a GeForce RTX, TITAN RTX or Quadro RTX card. This makes sense: to run AI workloads without losing graphics performance we need Tensor Cores, which are present in both first- and second-generation RTX GPUs.

Some of these features can already be tried in beta form, such as RTX Voice, Virtual Background or Auto Frame, which will help NVIDIA polish them before the arrival of the aforementioned official driver.