Moore’s Law is familiar to anyone who follows processors and their transistor counts, but Koomey’s Law is at least as important, even though it is far less famous and many people have never heard of it. This article explains what Koomey’s Law is and why it matters.

Jonathan Koomey is a professor at Stanford University, known for identifying a long-term trend in the energy efficiency of computers that has been called Koomey’s Law ever since.

What is Koomey’s Law?

Moore’s Law states that the number of transistors on a chip, and with it roughly the processing power, doubles about every two years (a figure of 18 months is also often quoted). The law is backed by abundant data: it is an observation almost as dependable as the sky being blue.

However, there is a newer law in the computing world that could be even more relevant than the one Moore formulated in 1965: Koomey’s Law, sometimes described as the 21st-century equivalent of Moore’s Law.

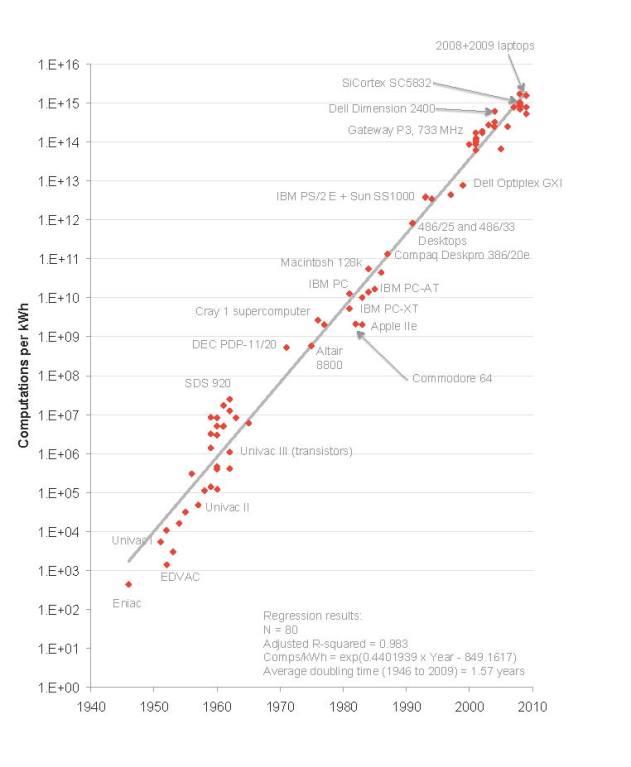

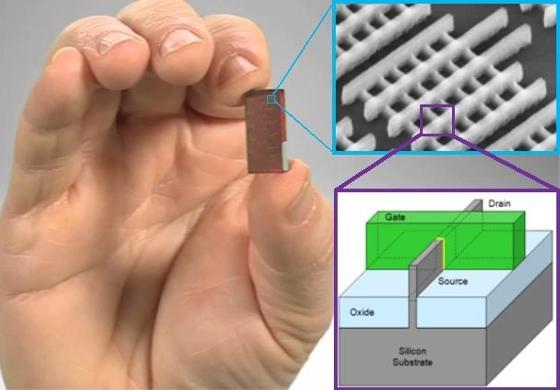

Koomey’s Law describes a trend in the number of computations per joule of dissipated energy: that number doubles roughly every 1.57 years. In other words, where Moore’s Law deals with transistor counts and computing power, Koomey’s Law says that the energy efficiency of processors and computing devices doubles approximately every 1.57 years.

This trend has been remarkably stable since the 1950s (with a deviation of less than 2%) and, in some periods, has even outpaced Moore’s Law.
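To make the doubling rule concrete, here is a minimal Python sketch. It assumes a perfectly smooth exponential trend with the 1.57-year doubling period quoted above; the function name `efficiency_gain` is purely illustrative.

```python
# Doubling period in years, as quoted in this article.
DOUBLING_PERIOD_YEARS = 1.57


def efficiency_gain(years: float, doubling_period: float = DOUBLING_PERIOD_YEARS) -> float:
    """Factor by which computations per joule grow after `years`.

    Assumes an idealized, perfectly steady exponential trend.
    """
    return 2.0 ** (years / doubling_period)


if __name__ == "__main__":
    for years in (1.57, 5, 10):
        print(f"after {years} years: x{efficiency_gain(years):.1f}")
```

Under these assumptions, a decade of steady progress works out to roughly an 80-fold improvement in computations per joule.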

Why is it so important in these times?

There is no doubt that electronic devices are becoming smaller and more efficient, drawing less and less from the battery and therefore running longer on a charge. Manufacturers have lately been designing their products around energy efficiency rather than raw performance, and the proof is that each new generation of processors consumes less while delivering the same or more performance.

After all, that is exactly the goal: higher efficiency means lower consumption for the same work, which lets you deliver more performance within the same power budget. Take a practical example: imagine a processor with a performance of 10 that consumes 10. Now suppose you build a processor with the same performance that consumes only 5. You can then install two of them, for a total performance of 20, while together they still consume 10. That is the whole idea.
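The arithmetic in that example can be sketched in a few lines of Python. The `Processor` class and `scale_to_budget` helper are hypothetical names invented for this illustration, and the units are as arbitrary as in the example itself.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Processor:
    performance: float  # arbitrary units of work per second
    power: float        # arbitrary units of energy per second

    @property
    def efficiency(self) -> float:
        """Work delivered per unit of energy consumed."""
        return self.performance / self.power


def scale_to_budget(cpu: Processor, power_budget: float) -> Tuple[int, float]:
    """Return how many copies of `cpu` fit in the budget and their combined performance."""
    count = int(power_budget // cpu.power)
    return count, count * cpu.performance


old = Processor(performance=10, power=10)
new = Processor(performance=10, power=5)

if __name__ == "__main__":
    print(scale_to_budget(old, power_budget=10))
    print(scale_to_budget(new, power_budget=10))
```

With the same power budget of 10, the more efficient chip fits twice, which is where the doubled total performance comes from. The sketch ignores real-world overheads such as interconnect and cooling.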

The end of Koomey’s Law

According to the second law of thermodynamics (“the amount of entropy in the universe tends to increase over time”) and Landauer’s principle (“in any logically irreversible operation that manipulates information, such as erasing a bit of memory, entropy increases and an associated amount of energy is dissipated as heat”), computing cannot keep becoming more energy efficient forever.

In 2011, computers already had a computational efficiency of approximately 0.00001%, and assuming efficiency keeps doubling every 1.57 years as Koomey’s Law describes, the Landauer limit will be reached around 2048, at which point the law is expected to stop holding.
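The 2048 estimate can be checked with a short calculation. This sketch assumes the 0.00001% figure means a fraction of the Landauer limit (that is, 1e-7 of it) and that the doubling period never changes; `year_limit_reached` is an illustrative name, not an established formula.

```python
import math


def year_limit_reached(start_year: float,
                       start_efficiency: float,
                       doubling_period: float = 1.57) -> float:
    """Year in which efficiency reaches 100% of the limit.

    `start_efficiency` is a fraction of the limit (assumed interpretation),
    and the trend is assumed to continue unchanged.
    """
    doublings_needed = math.log2(1.0 / start_efficiency)
    return start_year + doublings_needed * doubling_period


# 0.00001% expressed as a fraction is 1e-7.
estimate = year_limit_reached(2011, 1e-7)

if __name__ == "__main__":
    print(f"Landauer limit reached around {estimate:.1f}")
```

Going from 1e-7 to 1 takes a little over 23 doublings, or about 36.5 years, which lands between 2047 and 2048 and matches the figure quoted above.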

Another line of reasoning holds that Landauer’s principle does not apply to reversible computing, but even then computational efficiency is still bounded by the Margolus-Levitin theorem (“the processing rate cannot be greater than 6 × 10^33 operations per second per joule of energy”), which would limit the validity of this law to approximately the year 2130.

In any case, that is still a long way off, and by then new advances will probably have appeared (remember that every manufacturer is actively working to improve efficiency) that keep this law alive, or even improve on it.