The use of light instead of electricity to transmit data is not new: it has been around for years, although only in very limited markets. In this article we explain what implementing photonics in hardware involves, which markets use it and when we will see it in mass-market devices such as the PC.

Nothing in the universe travels faster than light, which makes it an attractive medium for transmitting data. So why don't we build processors out of photons? Because photonic logic is more expensive to manufacture and does not scale down in size the way silicon does. However, silicon photonics integrates optics into silicon and brings the two worlds together. What is it used for?

What is photonics in hardware?

Photonics in hardware is simply the use of photons, the particles that make up light, to transmit information. Within photonics there is silicon photonics, which uses silicon as the medium for carrying optical signals, so that optical components can be built into integrated circuits.

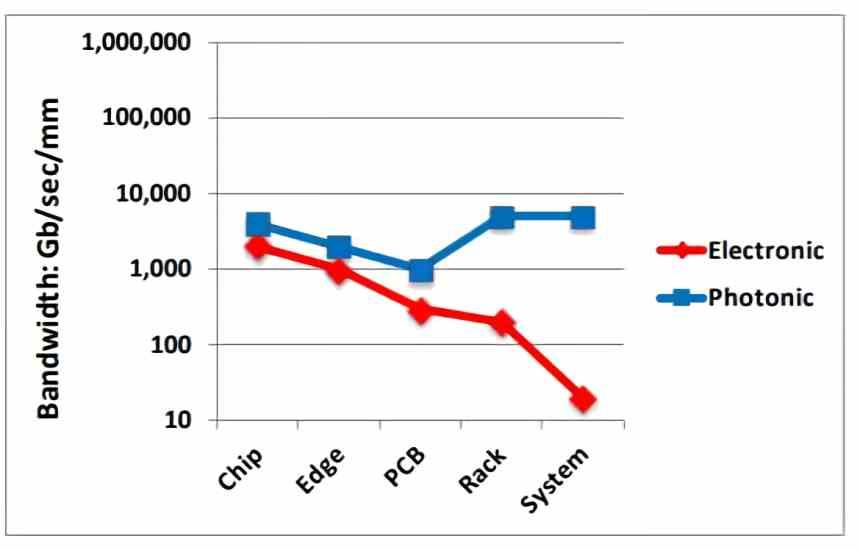

Its purpose is not to build more powerful processors but to handle communication between chips, that is, the external interfaces that connect processors, memory and peripherals. The goal is to narrow the bandwidth gap (bandwidth being the rate at which data is transferred) between the processor and memory.

Keep in mind that the dominant cost of transmitting data is the energy it consumes. The idea behind silicon photonics is precisely to provide an interface that also moves data at a lower energy cost.

Light-based memory interfaces

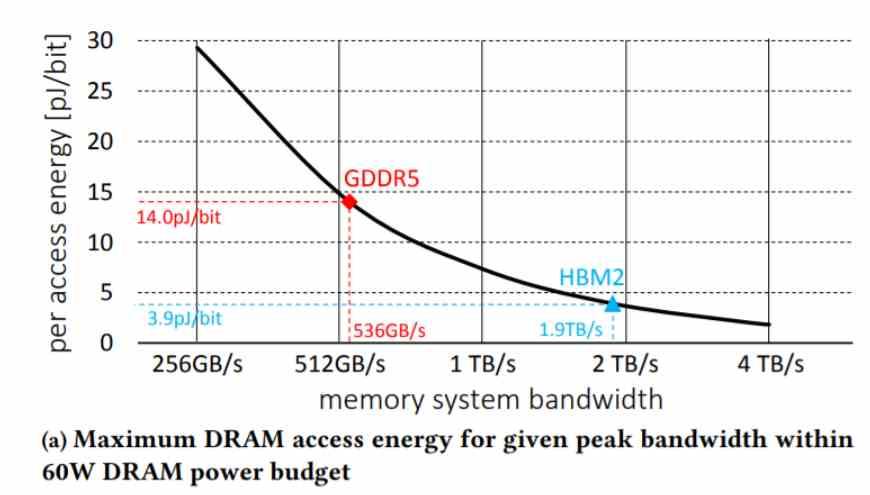

Over time, new types of memory are designed to transmit and receive data at a lower energy cost. Looking at the data, the most efficient types of memory have required new packaging techniques, as is the case with HBM.

Bandwidth needs keep growing, especially in the era of Big Data, when the volumes of information being moved are enormous. We therefore need interfaces that deliver bandwidth more energy-efficiently. In post-PC devices, for example, we will soon see HBM-style interfaces, while at the other extreme, in supercomputers, silicon photonics is already considered a technology of the present rather than of the future.

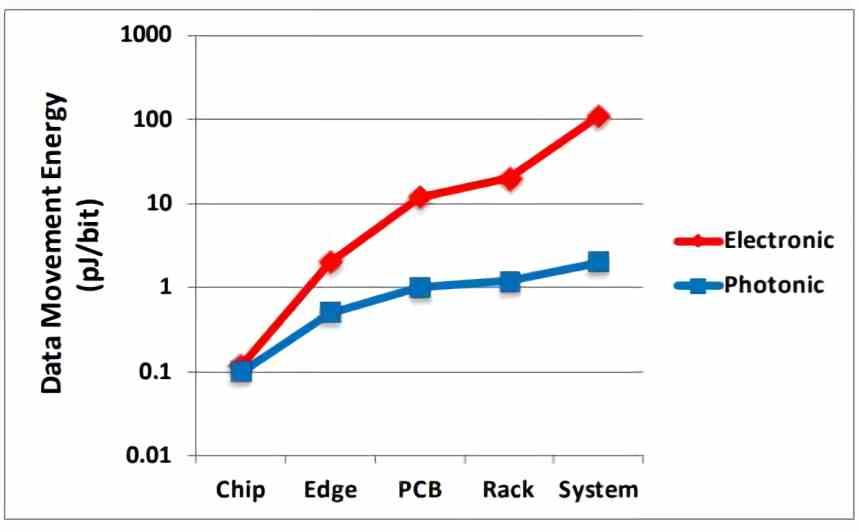

For communication inside a chip, photonics offers no advantage in the energy spent on transmitting data. It is when the two ends of a link are moved farther apart that photonic interfaces start to pay off, because they spend less energy per bit moved, allowing transfers at less than 1 picojoule (pJ) per transmitted bit.
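Energy per bit translates directly into interface power: power equals energy per bit times bits per second. The sketch below takes the article's figure of under 1 pJ per bit as an upper bound for the optical link and compares it with an assumed 10 pJ/bit for a long electrical link; that 10 pJ/bit value is an illustrative assumption, not a figure from this article. The 1 TB/s bandwidth is also a hypothetical choice.

```python
def link_power_watts(energy_per_bit_pj: float, bandwidth_gb_per_s: float) -> float:
    """Power drawn by an interface: energy per bit multiplied by bits per second."""
    bits_per_second = bandwidth_gb_per_s * 1e9 * 8  # decimal GB/s -> bit/s
    joules_per_bit = energy_per_bit_pj * 1e-12      # pJ -> J
    return joules_per_bit * bits_per_second


# Hypothetical comparison at 1 TB/s (1000 GB/s).
# Assumption: 1 pJ/bit for the optical link (upper bound), 10 pJ/bit for the electrical one.
for label, pj in [("optical, 1 pJ/bit", 1.0), ("electrical, assumed 10 pJ/bit", 10.0)]:
    print(f"{label}: {link_power_watts(pj, 1000):.1f} W")
```

At terabyte-per-second rates, every picojoule per bit costs about 8 W, which is why energy per bit is the figure that matters for off-chip interfaces.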

In addition, the bandwidth of a conventional electrical interface degrades as the distance from the processor grows. This means that the levels of the memory hierarchy beyond RAM would also benefit from this type of interface. Imagine, for example, an SSD with the read speed of DDR4 RAM.
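To put "DDR4 speed" in numbers, the sketch below compares theoretical peaks: one DDR4-3200 channel (3200 million transfers per second over a 64-bit bus) against a PCIe 4.0 x4 link, the kind typically used by NVMe SSDs (16 GT/s per lane with 128b/130b encoding). The 100 GB dataset is a hypothetical example. Real systems usually have several memory channels, and real SSDs stay below their link's peak, so treat this as an order-of-magnitude comparison only.

```python
def ddr_channel_gb_per_s(mega_transfers_per_s: float, bus_width_bits: int = 64) -> float:
    """Theoretical peak of one DDR channel: transfers per second times bytes per transfer."""
    return mega_transfers_per_s * 1e6 * (bus_width_bits / 8) / 1e9


def pcie_link_gb_per_s(gt_per_s_per_lane: float, lanes: int) -> float:
    """Theoretical peak of a PCIe 3.0+ link, which uses 128b/130b line encoding."""
    return gt_per_s_per_lane * 1e9 * lanes * (128 / 130) / 8 / 1e9


dataset_gb = 100                      # hypothetical dataset size
ddr4 = ddr_channel_gb_per_s(3200)     # DDR4-3200, single 64-bit channel
nvme = pcie_link_gb_per_s(16, 4)      # PCIe 4.0 x4 link of a typical NVMe SSD
print(f"DDR4-3200 channel: {ddr4:.1f} GB/s -> {dataset_gb / ddr4:.1f} s")
print(f"PCIe 4.0 x4 SSD:   {nvme:.2f} GB/s -> {dataset_gb / nvme:.1f} s")
```

Even a single DDR4 channel has roughly three times the peak bandwidth of a fast SSD link, which is the gap the article imagines closing.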

There is no Moore’s law for I/O pins

We are constantly told that Moore’s Law allows smaller chips to be made. That is true, except that the external communication pins do not shrink. In other words, external interfaces always take up the same area, so reaching a given bandwidth either dictates the size of the chip or forces the use of more complex packaging that allows a greater number of pins.
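The pin problem comes down to one relation: bandwidth equals the number of data pins times the data rate per pin. The sketch below checks it against a real data point, HBM2, whose 1024-bit interface at 2 Gbps per pin gives 256 GB/s per stack, and then asks how many data pins a hypothetical 1 TB/s target would need at a few illustrative per-pin rates. It counts data pins only; real interfaces also need clock, command, power and ground pins.

```python
import math


def bandwidth_gb_per_s(data_pins: int, gbps_per_pin: float) -> float:
    """Aggregate bandwidth in GB/s: pins times gigabits per second per pin, divided by 8."""
    return data_pins * gbps_per_pin / 8


def data_pins_needed(target_gb_per_s: float, gbps_per_pin: float) -> int:
    """Data pins required to reach a target bandwidth at a given per-pin rate."""
    return math.ceil(target_gb_per_s * 8 / gbps_per_pin)


print(bandwidth_gb_per_s(1024, 2.0))   # HBM2-style stack: 1024-bit bus at 2 Gbps/pin
for rate in (2.0, 8.0, 16.0):          # per-pin rates chosen for illustration
    print(f"{rate} Gbps/pin -> {data_pins_needed(1000, rate)} data pins for 1 TB/s")
```

Since pins do not shrink, the only levers are more pins (wider, more complex packages) or faster pins, and the next paragraph explains why faster pins are costly.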

The concept is easy to understand: a higher data rate per pin means a higher clock speed, a higher clock speed requires a higher voltage, and since power grows with the square of the voltage as well as with frequency, consumption rises much faster than the clock itself. The alternative is to increase the number of pins, but that requires constructions that are complex to mass-produce, such as 2.5D and 3D ICs.
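The textbook CMOS switching-power model, P = alpha · C · V² · f, captures this as a first approximation, since driving a signal line also means charging and discharging its capacitance. The sketch below assumes, as a simplification, that voltage must rise in proportion to frequency; under that assumption power grows with the cube of the clock. The baseline values (alpha = 0.5, 1 pF, 1.0 V, 1 GHz) are placeholders, and only the ratios matter.

```python
def dynamic_power(activity: float, capacitance_f: float, voltage_v: float, freq_hz: float) -> float:
    """Textbook CMOS switching power: P = alpha * C * V^2 * f."""
    return activity * capacitance_f * voltage_v ** 2 * freq_hz


def relative_power(clock_scale: float) -> float:
    """Power relative to a baseline when clock AND voltage are scaled by clock_scale.

    Placeholder baseline: alpha=0.5, C=1 pF, 1.0 V, 1 GHz.
    Assumption: voltage rises in proportion to frequency (a simplification).
    """
    alpha, cap, volt, freq = 0.5, 1e-12, 1.0, 1e9
    base = dynamic_power(alpha, cap, volt, freq)
    scaled = dynamic_power(alpha, cap, volt * clock_scale, freq * clock_scale)
    return scaled / base


for scale in (1.0, 1.5, 2.0):
    print(f"{scale:.1f}x clock -> {relative_power(scale):.2f}x power")
```

Under this model, doubling the clock multiplies power by eight, whereas doubling the pin count at the same clock and voltage roughly doubles it, which is why widening the interface is the usual alternative despite its packaging cost.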

This is where photonics comes in: as a way to scale memory interfaces to higher bandwidths without increasing the average energy spent on each data transfer.

Where is photonics used today?

Today, silicon photonics is used in data centers to connect systems that are widely separated.

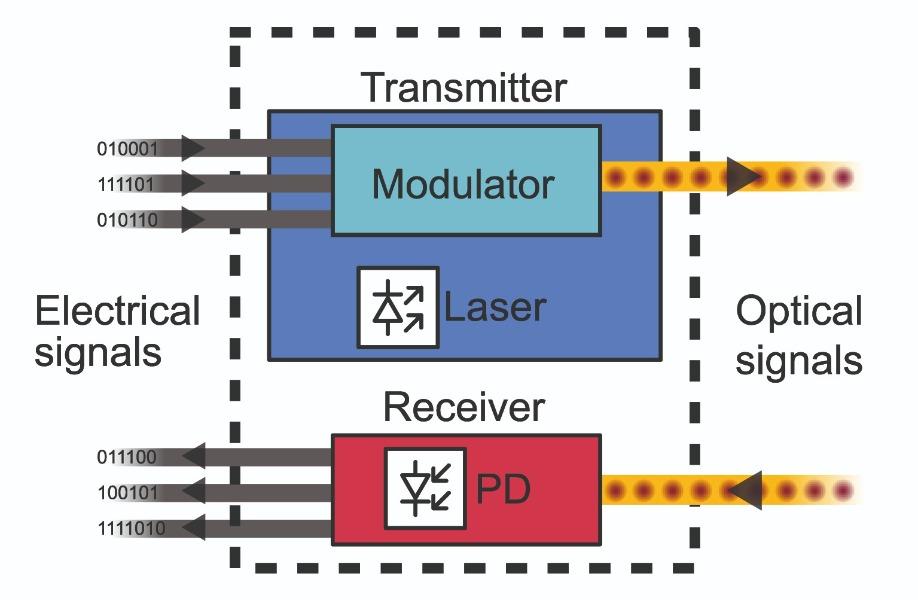

This is done through optical transceivers in each system, which both transmit and receive signals. Their function is simple: they convert electrical signals into optical signals that travel through the fiber optic cables linking the cabinets that make up the data center. When a transceiver receives data, it converts it back into an electrical signal that conventional processors and memories can process and store, respectively.
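The electrical-to-optical-to-electrical round trip can be sketched with NRZ on-off keying, one real modulation format used in optical links (many current transceivers also use PAM4). In this toy model the transmitter serializes bytes into bits and drives a laser high or low, the fiber attenuates the light and adds noise, and the receiver compares the photodiode power against a threshold. The power levels, 3 dB loss and noise level are made-up assumptions, and the `fiber` function is a hypothetical channel, not a physical model.

```python
import random


def to_bits(data: bytes) -> list[int]:
    """Serialize bytes MSB-first, as the electrical side would before modulation."""
    return [(byte >> shift) & 1 for byte in data for shift in range(7, -1, -1)]


def from_bits(bits: list[int]) -> bytes:
    """Reassemble received bits (MSB-first) into bytes."""
    out = bytearray()
    for i in range(0, len(bits), 8):
        byte = 0
        for bit in bits[i:i + 8]:
            byte = (byte << 1) | bit
        out.append(byte)
    return bytes(out)


def modulate(bits: list[int], p_on_mw: float = 1.0, p_off_mw: float = 0.1) -> list[float]:
    """Electrical -> optical: high laser power for a 1, low power for a 0 (on-off keying)."""
    return [p_on_mw if b else p_off_mw for b in bits]


def fiber(powers: list[float], loss_db: float = 3.0, noise_mw: float = 0.02, seed: int = 0) -> list[float]:
    """Hypothetical channel: fixed attenuation plus Gaussian noise at the photodiode."""
    rng = random.Random(seed)
    gain = 10 ** (-loss_db / 10)  # dB loss -> linear power ratio
    return [max(0.0, p * gain + rng.gauss(0.0, noise_mw)) for p in powers]


def demodulate(received: list[float], p_on_mw: float = 1.0, p_off_mw: float = 0.1,
               loss_db: float = 3.0) -> list[int]:
    """Optical -> electrical: compare received power against a mid-level threshold."""
    gain = 10 ** (-loss_db / 10)
    threshold = (p_on_mw + p_off_mw) / 2 * gain
    return [1 if p > threshold else 0 for p in received]


message = b"photonics"
received_bits = demodulate(fiber(modulate(to_bits(message))))
print(from_bits(received_bits))
```

With these assumed values the gap between the "on" and "off" levels is many times the noise, so the message arrives intact; raising `noise_mw` or `loss_db` is a quick way to watch bit errors appear.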

These optical transceivers can move very large volumes of data. Their main drawback? They are expensive to manufacture, which makes them hard to justify at mass-market scale. That is why today we find them in supercomputers and not in the PCs in our homes.

Another market for silicon photonics is medical imaging for diagnostics. Light is already widely used in medical diagnosis, especially in microscopes and spectroscopes: with it, cells can be counted and visualized and DNA sequences determined. Silicon photonics therefore makes it possible to build integrated circuits designed for medical diagnosis that can also process this data at high speed.

With silicon photonics, an ordinary doctor will be able to study a tissue or blood sample without turning to laboratories with expensive equipment, since the technology should enable smart microscopes in the coming years: devices with an integrated processor that extracts information from the images, processes it and, if necessary, sends it to a PC over USB.

Are we going to see it on the PC?

Replacing the memory interface with an integrated optical transceiver brings advantages in power consumption and bandwidth. The drawback? The cost of implementing it in a processor.

Where we are more likely to see it is in hubs that receive and distribute several high-bandwidth signals at the same time. These hubs sit at the center of chiplet-based systems, which is where the distances between chips are greatest. Optical interfaces can solve both the power cost of those interfaces and the loss of bandwidth with distance.

This is especially important in systems where many GPUs must communicate at large scale. For now, though, we will first see a move to 2.5D and 3D IC packaging before photonics arrives at large scale.