The NVMe 1.2 specification introduced a feature called Host Memory Buffer, or HMB (not to be confused with HBM, the high-bandwidth memory used on graphics cards), which promises to significantly improve the performance of PCIe NVMe SSDs. In this article we explain what it is, how it works, and how it improves the performance of SSDs that support it.

Most modern SSDs include a built-in DRAM chip, typically with 1 GB of DRAM for every 1 TB of storage. This DRAM is mainly used to keep track of where each logical block of data is physically located in the NAND flash. Because that location changes with every write, the mapping is updated constantly, and it is consulted on every read.
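Where the 1 GB per 1 TB rule of thumb comes from can be shown with a little arithmetic. The sketch below assumes a flat mapping table with one 4-byte entry per 4 KiB logical page; that is a common design, but it is an assumption here rather than something this article states, and the function name `l2p_table_bytes` is hypothetical.

```python
# Sketch: estimate the size of a flat logical-to-physical (L2P) mapping table.
# Assumptions (not from the article): 4 KiB mapping granularity and 4-byte
# entries per logical page.

def l2p_table_bytes(capacity_bytes: int, page_size: int = 4096, entry_size: int = 4) -> int:
    """Return the bytes needed to hold one mapping entry per logical page."""
    pages = capacity_bytes // page_size  # one entry per logical page
    return pages * entry_size


TB = 10**12
table = l2p_table_bytes(1 * TB)
print(f"{table / 10**9:.2f} GB of mapping table per 1 TB")
```

Under these assumptions the table needs roughly 0.98 GB per terabyte, which lines up with the roughly 1:1000 DRAM-to-NAND ratio.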

This standard DRAM-to-NAND ratio gives the SSD controller enough memory to keep the mapping in a simple, fast lookup table instead of relying on more complex data structures that would be significantly slower. This greatly reduces the work the controller has to do for each input/output operation and is the key to consistent performance.
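To illustrate the kind of lookup table meant here, below is a minimal Python sketch of a flat logical-to-physical table: a plain array indexed by logical block address (LBA). The class `FlatL2P` and its naive append-only allocator are hypothetical; real firmware also handles garbage collection, wear leveling, and persistence, all omitted here.

```python
# Minimal sketch of a flat L2P table: a plain array indexed by LBA.
# Hypothetical names; real FTLs are far more involved.

UNMAPPED = -1


class FlatL2P:
    def __init__(self, num_lbas: int):
        # One entry per logical block; -1 means "never written".
        self.table = [UNMAPPED] * num_lbas
        self.next_free = 0  # naive append-only allocator for physical pages

    def write(self, lba: int) -> int:
        # NAND cannot be overwritten in place, so each write lands on a fresh
        # physical page and the mapping entry is updated to point at it.
        ppa = self.next_free
        self.next_free += 1
        self.table[lba] = ppa
        return ppa

    def lookup(self, lba: int) -> int:
        # O(1): a single indexed read, which is what onboard DRAM makes cheap.
        return self.table[lba]


ftl = FlatL2P(num_lbas=8)
ftl.write(3)
ftl.write(3)          # rewriting LBA 3 moves it to a new physical page
print(ftl.lookup(3))  # 1
```

Each read costs one array access no matter how many times a block has been rewritten, which is why holding the whole table in fast memory keeps latency predictable.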

DRAM-less SSDs can be considerably cheaper and even physically smaller, but because they can only keep their mapping tables in the flash memory itself, their performance suffers noticeably. In the worst case, read latency can double, since each read requires one flash access to find where the data is physically stored and another to read the data itself.
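The worst case can be written as a simple sum, assuming (as a simplification) that fetching a mapping entry and fetching the data each cost about one flash page read, $t_{\text{flash}}$, and ignoring controller and transfer overheads:

$$
t_{\text{read}} = t_{\text{lookup}} + t_{\text{data}} \approx t_{\text{flash}} + t_{\text{flash}} = 2\,t_{\text{flash}}
$$

With the mapping held in DRAM instead, $t_{\text{lookup}}$ becomes negligible next to $t_{\text{flash}}$, so $t_{\text{read}} \approx t_{\text{flash}}$.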

What is the Host Memory Buffer?

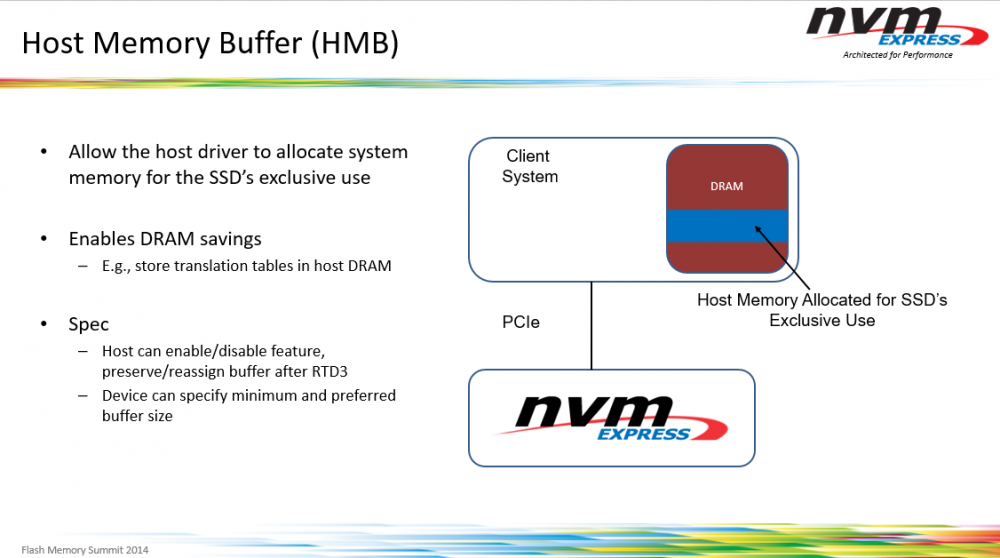

As mentioned at the beginning, HMB was introduced in the NVMe 1.2 specification. It uses the DMA capabilities of the PCI Express interface to let the SSD use a portion of the host system's DRAM (the main memory attached to the CPU) instead of requiring the SSD to carry its own DRAM.

In other words, the SSD borrows a small portion of the system’s RAM for these lookups. Because HMB is not designed to fully “replace” an SSD’s internal DRAM but to make up for part of it, it takes very little memory from the system: typically a few tens of megabytes (less than 100 MB), which is more than enough for the task.
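For a rough sense of scale, the following sketch estimates how much user data a given amount of HMB can map. It assumes one 4-byte entry per 4 KiB page; actual controllers may use different granularities or compressed entries, and `mapped_data_bytes` is a hypothetical helper.

```python
# Rough estimate of how much user data an HMB of a given size can map.
# Assumption: 4-byte mapping entries, each covering one 4 KiB logical page.

def mapped_data_bytes(hmb_bytes: int, page_size: int = 4096, entry_size: int = 4) -> int:
    entries = hmb_bytes // entry_size  # how many mapping entries fit in the HMB
    return entries * page_size         # each entry covers one logical page


MiB, GiB = 2**20, 2**30
for hmb_mib in (32, 64):
    covered = mapped_data_bytes(hmb_mib * MiB)
    print(f"{hmb_mib} MiB of HMB maps about {covered / GiB:.0f} GiB of data")
```

Under that assumption, each megabyte of HMB maps roughly a gigabyte of data, so a few tens of megabytes can cover tens of gigabytes of actively used data.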

Accessing host DRAM over PCIe is admittedly much slower than accessing a DRAM chip on the SSD itself, but it is still far faster than reading the mapping information from the SSD's flash memory, so performance improves significantly.

How does HMB affect performance?

As explained above, the best option for performance is an SSD with its own DRAM, since access is much faster. The second-best option is the Host Memory Buffer, which reaches system RAM over PCIe. The worst option is to have neither and rely on the SSD's own flash memory to hold the mapping tables.
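The ranking above can be made concrete with a back-of-the-envelope latency model. Every latency value and the 90% HMB hit rate below are illustrative placeholders chosen for this sketch, not measurements of any drive; the model only captures the idea that a mapping miss costs an extra flash read.

```python
# Back-of-the-envelope model of average random-read latency for the three
# options. All latency figures are illustrative placeholders, not measurements.

T_FLASH_US = 60.0  # hypothetical NAND page read
T_DRAM_US = 0.1    # hypothetical lookup in onboard DRAM
T_HMB_US = 1.0     # hypothetical lookup in host RAM over PCIe


def avg_read_latency_us(lookup_us: float, hit_rate: float) -> float:
    """The mapping lookup hits the fast tier with probability hit_rate;
    a miss costs an extra flash read to fetch the mapping entry."""
    lookup = hit_rate * lookup_us + (1 - hit_rate) * T_FLASH_US
    return lookup + T_FLASH_US  # the data read itself always goes to NAND


configs = {
    "onboard DRAM": avg_read_latency_us(T_DRAM_US, hit_rate=1.0),
    "HMB": avg_read_latency_us(T_HMB_US, hit_rate=0.9),
    "DRAM-less, no HMB": avg_read_latency_us(0.0, hit_rate=0.0),
}
for name, us in configs.items():
    print(f"{name}: {us:.1f} us")
```

With these placeholder numbers the flash-only configuration comes out at twice the flash read time, matching the worst case described earlier, while HMB lands much closer to onboard DRAM.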

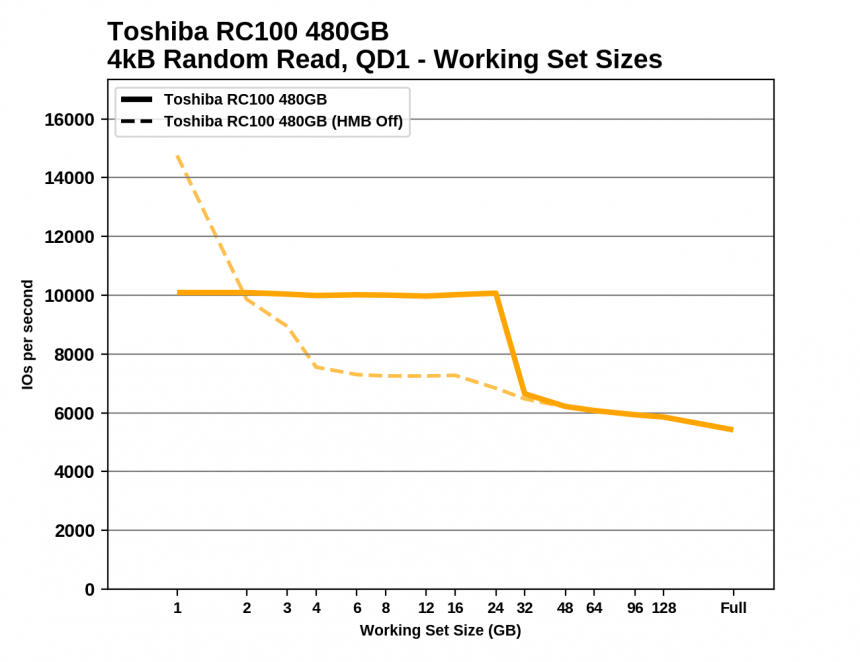

The effect of the HMB cache is easy to see when measuring an SSD's random read performance while increasing the workload (the amount of data being actively accessed at the same time).

The chart shows that with HMB enabled, performance remains very stable until the workload reaches 24 GB and only starts to drop beyond that point. With HMB disabled, by contrast, performance declines steadily as the workload grows.
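The shape of that curve can be reproduced with a toy model: an LRU cache of mapping entries standing in for the HMB, hit by uniformly random reads. The LRU policy, the cache size, and the working-set sizes are all assumptions for illustration; the article does not describe how any particular controller manages its HMB, and `hit_rate` is a hypothetical helper.

```python
# Toy simulation: an LRU cache of mapping entries (standing in for the HMB)
# under uniform random reads. Sizes are hypothetical and counted in mapping
# entries rather than bytes.
import random
from collections import OrderedDict


def hit_rate(cache_entries: int, working_set: int, reads: int = 50_000, seed: int = 0) -> float:
    rng = random.Random(seed)
    cache = OrderedDict()
    hits = 0
    for _ in range(reads):
        lba = rng.randrange(working_set)
        if lba in cache:
            hits += 1
            cache.move_to_end(lba)         # mark as most recently used
        else:
            cache[lba] = None              # simulated fetch from flash, then cache it
            if len(cache) > cache_entries:
                cache.popitem(last=False)  # evict the least recently used entry
    return hits / reads


for ws in (500, 1_000, 2_000, 4_000):
    print(ws, round(hit_rate(cache_entries=1_000, working_set=ws), 2))
```

The hit rate stays near 1 while the working set fits in the cache and then falls roughly in proportion to cache size divided by working set, which is consistent with performance holding steady up to a threshold and then dropping.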