One of the most important considerations when designing a new processor, whatever its function, is its power consumption, and therefore how it is organized to make the most of the energy supplied to it. This is where the Power Delivery Network, or PDN, comes in. What is it, and why does it matter in the design of a new processor?

There is a widespread myth that a processor's power consumption is something manufacturers only discover when the chip moves from the design phase to pre-production. The reality is quite different. After all, a processor is nothing more than an electrical circuit on a very, very small scale, so the way electrons move through that circuit is crucial and is part of the processor's design right from the start.

How is the power consumption of any processor measured?

The exact power consumption of a processor cannot be known in advance, because a number of physical phenomena affect the result and only become apparent once the design has been manufactured and moves from the conceptual to the real. Engineers therefore work with an approximation that gives them a rough idea of what the power consumption will be.

The general formula is as follows:

Power (watts) = number of logic gates * capacitance per gate * clock frequency * voltage squared.

But this is a very generic estimate. Within the same processor, designers can use different kinds of logic gates, and even different implementations of the same gate, each with its own level of consumption. Above all, the result depends on how the logic gates that make up the different parts of the processor are connected to one another. This is where the Power Delivery Network, or PDN, comes in: it is part of every processor's design and refers to how power is distributed among the different logic gates.
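To make the formula above concrete, here is a minimal Python sketch of it. The function name `estimate_dynamic_power` and every input value are assumptions chosen only to illustrate the arithmetic; they do not describe any real processor, and the capacitance is taken to be a per-gate figure.

```python
def estimate_dynamic_power(num_gates, capacitance_per_gate_f, clock_hz, voltage_v):
    """Rough power estimate in watts: gates * capacitance * frequency * voltage^2."""
    return num_gates * capacitance_per_gate_f * clock_hz * voltage_v ** 2


# Hypothetical values, invented purely for illustration.
power_w = estimate_dynamic_power(
    num_gates=100_000_000,         # 100 million logic gates (assumed)
    capacitance_per_gate_f=1e-15,  # 1 femtofarad per gate (assumed)
    clock_hz=2e9,                  # 2 GHz clock (assumed)
    voltage_v=1.0,                 # 1 V supply (assumed)
)
print(f"Estimated power: {power_w:.1f} W")
```

Because the voltage is squared, it is the input that moves the estimate the most: halving it in this sketch cuts the result to a quarter, while halving any other input only cuts it in half.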

What is the Power Delivery Network?

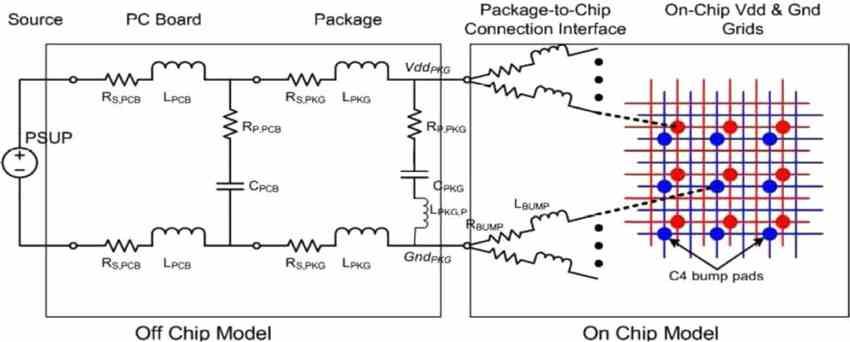

During the design of a processor, a point is reached where the different blocks that make it up have to be laid out and interconnected so that they can communicate. But each element also needs a flow of electrical current to function. Just as the electrical wiring has to be planned when a building is designed, the same is true when a processor is designed.

In a CPU, the interconnections are what consume the most power. Today, internal and external interconnections together account for about three quarters of the chip's consumption, which makes them one of the biggest challenges for engineers. The challenge grows with new processors that have more and more cores, where not only the communication interfaces have to be designed, but also the power supply to each of the processor's blocks.

It does not matter whether we are looking at the 1 W processor in a smartphone, the 45 W processor in a high-end gaming laptop or the 200 W processor in a server: all of them have been designed with a specific Power Delivery Network. This means that each and every one of their hundreds of millions, if not billions, of transistors has to operate at the proper voltage. If the voltage were too low, for example, signal values could be misread, so the processor would not only work with incorrect data but could also become unstable.

What are the current challenges when designing a PDN?

Over time, the voltage at which both processors and memory operate has been dropping. Originally, complete computers were built from several interconnected chips using TTL (transistor-transistor logic) at 5 V. Today, with FinFET transistors at 7 nm, voltages sit between roughly 0.5 V and 1 V, which poses a challenge for system designers.

In a digital processor the signal is binary, so the voltage switches between two levels, one representing the active state and the other the inactive state. As long as those two levels are far enough apart, fluctuations in the voltage do not cause the signal to be misread. Ever lower voltages bring a problem, however: to supply the most powerful processors with enough power, the current, measured in amps, has to increase. And because the consumption of any electronic circuit is proportional to the square of its voltage, most designers have focused on keeping the voltage as low as the specification allows.

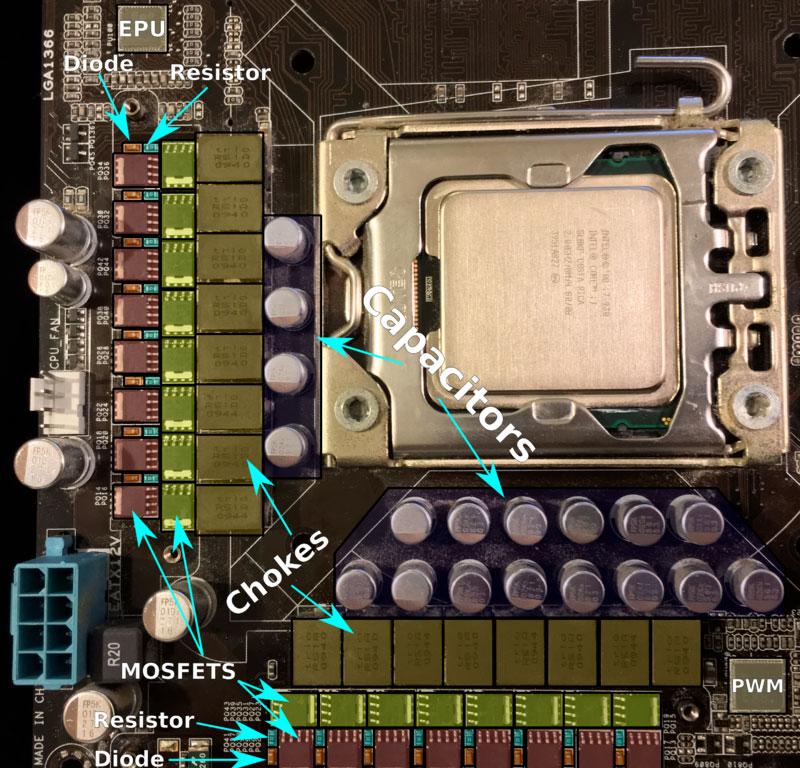

The low-voltage, high-amperage paradigm is challenging because more conductors are needed to carry the larger current. This makes the Power Delivery Network more complicated, not only inside the processor itself but also outside it, where the arrangement of the VRMs (voltage regulator modules) on the motherboard or expansion card is an important part of this complex electrical system.
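The link between low voltage and high current follows from the basic relation that current equals power divided by voltage. The sketch below applies it to the 200 W server figure mentioned earlier, at the 5 V, 1 V and 0.5 V levels quoted in the text. The function name `required_current_a` is an assumption, and the calculation ignores conversion losses and the fact that a real chip is fed through several supply rails.

```python
def required_current_a(power_w, voltage_v):
    """Current in amperes needed to deliver power_w at voltage_v (I = P / V)."""
    return power_w / voltage_v


# 200 W is the server example from the text; the voltages are the ones it quotes.
for voltage_v in (5.0, 1.0, 0.5):
    current_a = required_current_a(power_w=200.0, voltage_v=voltage_v)
    print(f"{voltage_v:.1f} V -> {current_a:.0f} A")
```

Under these simplified assumptions, the same 200 W needs 40 A at 5 V but 400 A at 0.5 V, which is why the number of conductors feeding the chip has to grow as the voltage falls.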

Power Delivery Networks today and in the future

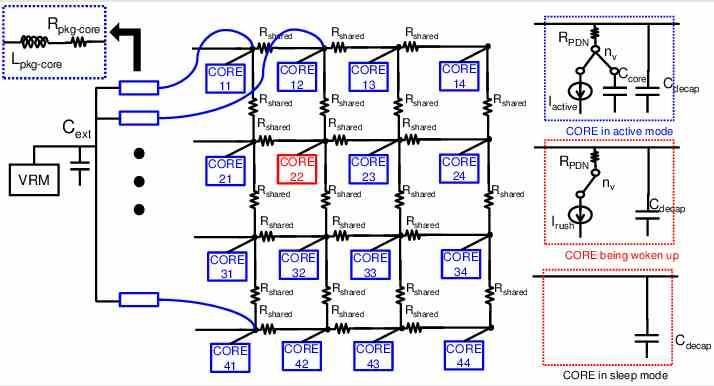

In recent years, measures have been introduced to save energy and increase efficiency in processors. These include Power Delivery Networks built in a modular way, designed so that parts of the processor can be switched off completely when they are not in use and so draw less power. Nor can we forget the mechanisms that vary a processor's voltage dynamically, together with its clock speed, to adjust power consumption.

A processor running at 1 GHz at 1.2 V will perform the same as one running at 0.6 V at the same clock speed, but it will consume four times as much power for the same work. That is why many modern CPUs and GPUs have Power Delivery Networks designed to lower the voltage to the minimum necessary when the clock speed is low. This adds complexity, since the processor has to be designed to work correctly at several different voltages.
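The four-times figure can be checked with the same proportionality used in the general formula (power scales with clock frequency times voltage squared). The sketch below also shows, in very simplified form, the idea of picking the lowest voltage that supports a given clock. The `OPERATING_POINTS` table and both function names are hypothetical; real processors define their own voltage and frequency tables.

```python
# Hypothetical operating points: (maximum clock in GHz, voltage in volts).
# These values are invented for illustration only.
OPERATING_POINTS = [(1.0, 0.6), (2.0, 0.8), (3.0, 1.0), (4.0, 1.2)]


def relative_power(clock_ghz, voltage_v):
    """Power in arbitrary units, proportional to frequency * voltage^2."""
    return clock_ghz * voltage_v ** 2


def lowest_voltage_for(clock_ghz):
    """Return the smallest tabulated voltage that supports the requested clock."""
    for max_clock_ghz, voltage_v in OPERATING_POINTS:
        if clock_ghz <= max_clock_ghz:
            return voltage_v
    raise ValueError(f"No operating point supports {clock_ghz} GHz")


# The comparison from the text: the same 1 GHz clock at 1.2 V versus 0.6 V.
ratio = relative_power(1.0, 1.2) / relative_power(1.0, 0.6)
print(f"1.2 V uses {ratio:.1f}x the power of 0.6 V at 1 GHz")
print(f"Voltage chosen for 1 GHz: {lowest_voltage_for(1.0)} V")
```

The table lookup is the part that adds design complexity: every entry is a voltage at which the whole chip must still work correctly.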

Processors today are made up of billions of transistors that form hundreds of millions of logic gates and, with them, tens of millions of combinational and sequential circuits. PDN design has therefore become far more complex over time and, combined with the techniques just described, it is now one of the most important parts of designing any processor.

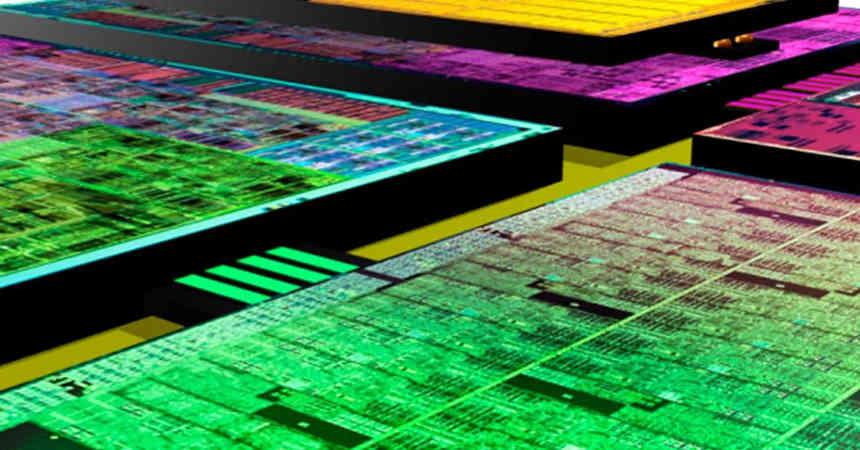

Things get complicated with chiplets

The adoption of chiplets means that the Power Delivery Network is built not only into each chiplet, but also into the interposer that connects them to one another. Given that interconnect is already the biggest consumer of power in a monolithic processor, and that the wiring between chiplets is longer, the biggest challenge in these configurations is power distribution.

The solution? It has come through vertical interconnections, which are much shorter and far more numerous. Having more of them allows each one to run at a lower clock speed and therefore at a lower voltage, which is crucial for moving the enormous amounts of data required by applications such as artificial intelligence or graphics rendering. At the same time, this creates a series of problems in interposer design that marketing departments do not usually talk about in public, but that are a huge headache for engineers.
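The trade-off between many slow connections and a few fast ones can be sketched with the same proportionality as the general power formula, applied here to wires instead of logic gates. Everything in this example is an assumption made for illustration: the wire counts, clocks and voltages are invented, each wire is taken to carry one bit per clock cycle, and the capacitance per wire is treated as equal for both links (shorter vertical connections would, if anything, have less).

```python
def bandwidth_gbit_s(num_wires, clock_ghz):
    """Raw bandwidth, assuming each wire moves one bit per clock cycle."""
    return num_wires * clock_ghz


def relative_link_power(num_wires, clock_ghz, voltage_v):
    """Power in arbitrary units: wires * frequency * voltage^2,
    with equal capacitance per wire assumed for every link."""
    return num_wires * clock_ghz * voltage_v ** 2


# Hypothetical links: narrow and fast versus wide and slow.
narrow = {"num_wires": 64, "clock_ghz": 4.0, "voltage_v": 1.0}
wide = {"num_wires": 1024, "clock_ghz": 0.25, "voltage_v": 0.6}

same_bandwidth = (
    bandwidth_gbit_s(narrow["num_wires"], narrow["clock_ghz"])
    == bandwidth_gbit_s(wide["num_wires"], wide["clock_ghz"])
)
power_ratio = relative_link_power(**wide) / relative_link_power(**narrow)
print(f"Same bandwidth: {same_bandwidth}")
print(f"Wide link power relative to narrow link: {power_ratio:.2f}")
```

In this model both links move the same amount of data, and the entire saving comes from the lower voltage that the slower clock makes possible.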

In any case, even though a chiplet design physically consists of separate chips, its PDN is designed as if it were a single processor.