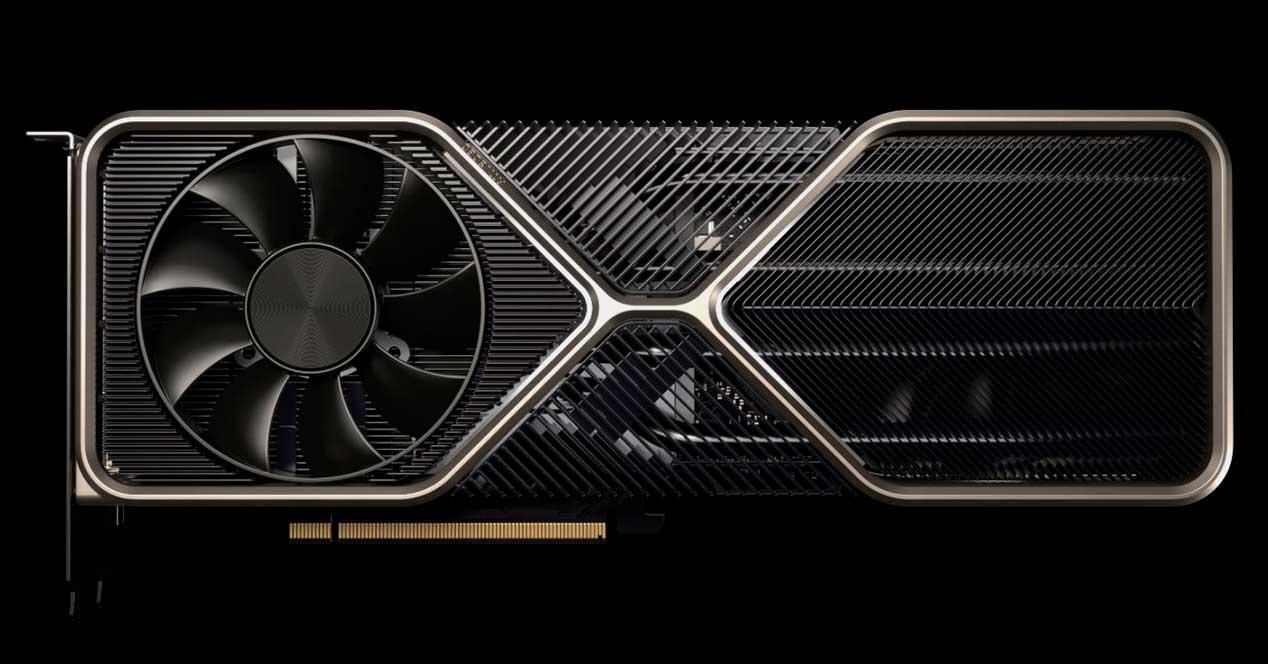

It is the great battle everyone wants to see: these are two of the most sought-after high-end GPUs for their price-to-performance ratio. But performance aside, in this clash of titans between the RTX 3080 and the RX 6800, who has done the better job? Which of the two has the better architecture? Let’s get right to the heart of both graphics cards to work out, data in hand, which has more potential.

Actual performance is well worth knowing, because together with price it is the truly differentiating factor that determines which GPU sells. But under the hood there is interesting work from both brands that deserves analysis, since raw muscle does not always match performance, or price. Who has designed their GPU best?

NVIDIA RTX 3080 vs AMD RX 6800: architecture duel

As could hardly be otherwise, we are going to compare two graphics chips with different architectures, different features and different lithographic processes.

As many of you may know, the AMD RX 6800 is based on a Navi 21 XL chip, which is built around AMD’s new RDNA 2 architecture. NVIDIA’s card is instead based on the Ampere architecture, with a GA102-200-KD-A1 chip in the Founders Edition. In this case the manufacturer is Samsung, using an 8 nm lithographic process, which yields a chip with an area of 628 mm² and 28.3 billion transistors.

The AMD GPU, on the other hand, is manufactured by TSMC on a 7 nm lithographic process, so its transistor density is higher than that of the Samsung chip: it fits 26.8 billion transistors into just 520 mm² of die area.
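The density claim can be checked with a quick calculation. This is a minimal sketch that uses only the transistor counts and die areas quoted above; the helper name `density_mtr_per_mm2` is illustrative.

```python
# Figures quoted in the article: (transistor count, die area in mm^2).
CHIPS = {
    "GA102 (RTX 3080, Samsung 8 nm)": (28.3e9, 628.0),
    "Navi 21 XL (RX 6800, TSMC 7 nm)": (26.8e9, 520.0),
}


def density_mtr_per_mm2(transistors: float, area_mm2: float) -> float:
    """Return transistor density in millions of transistors per mm^2."""
    return transistors / area_mm2 / 1e6


for name, (transistors, area) in CHIPS.items():
    print(f"{name}: {density_mtr_per_mm2(transistors, area):.1f} MTr/mm^2")
```

With these inputs the TSMC chip comes out at roughly 51.5 million transistors per mm², against about 45.1 for the Samsung chip.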

What about shaders and specialized units? Here comes the first piece of data that may give us pause. Starting with the RX 6800, the GPU has 3840 Shaders, 240 TMUs, 96 ROPs and 60 RT Cores. As for its caches, it has 32 KB for each WGP (60 CUs), 128 KB for each CU, 4 MB of shared L2 and 128 MB of L3.

NVIDIA inflates its figures by counting FP32 units, thus “doubling” the number of Shaders when there are actually half as many. It claims 8,704 units when there are really 4,352 complete Shaders, alongside 272 TMUs, 96 ROPs and 68 RT Cores, not forgetting the units for AI, the Tensor Cores (272).

All of this sits in 68 SMs, with 128 KB of L1 per SM and 5 MB of L2. Comparing the data, we see that AMD has fewer Shaders, fewer TMUs, the same ROPs and fewer RT Cores, but in exchange it has a much more complete and advanced cache hierarchy and, above all, a larger total cache size.

Frequencies and theoretical performance are also in question

The RTX 3080 lists only two frequencies, base and boost, at 1440 MHz and 1710 MHz respectively, while the RX 6800 lists three: base (1700 MHz), game (1815 MHz) and boost (2105 MHz).

This matters for understanding how the theoretical performance figure is derived, since it is the product of the frequency and the number of shaders, counting two operations per shader per clock. That gives an FP32 result of 16.16 TFLOPS for the AMD GPU and 29.77 TFLOPS for NVIDIA’s but, as we have seen, the latter figure has to be halved, so we are really talking about 14.885 TFLOPS. Both figures are theoretical.

As we always say, this is not a representative measure of performance, but even so AMD comes out ahead here thanks to its higher Boost clock, although in practice it cannot hold that clock steadily. NVIDIA’s declared Boost frequency, meanwhile, understates what the card reaches: the boost algorithm adds more than 100 MHz on average, depending on the quality of the ASIC, and the specifications do not reflect this.
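The arithmetic behind these figures can be sketched as follows. The function name `fp32_tflops` is illustrative, and the factor of two operations per clock assumes the usual convention of counting a fused multiply-add as two floating-point operations.

```python
def fp32_tflops(shaders: int, boost_mhz: float, ops_per_clock: int = 2) -> float:
    """Theoretical FP32 throughput in TFLOPS.

    ops_per_clock=2 is an assumption: one fused multiply-add per shader
    per clock, counted as two floating-point operations.
    """
    return shaders * ops_per_clock * boost_mhz * 1e6 / 1e12


# Shader counts and boost clocks as quoted in the article.
rx_6800 = fp32_tflops(3840, 2105)
rtx_3080_claimed = fp32_tflops(8704, 1710)
rtx_3080_halved = fp32_tflops(4352, 1710)  # the article's halved shader count

print(f"RX 6800:            {rx_6800:.3f} TFLOPS")
print(f"RTX 3080 (claimed): {rtx_3080_claimed:.3f} TFLOPS")
print(f"RTX 3080 (halved):  {rtx_3080_halved:.3f} TFLOPS")
```

The results land on the article’s figures to within rounding in the last digit.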

VRAM performance is also defining

Now let’s talk about the VRAM of both cards. The RTX 3080 has been in the eye of the storm for including “only” 10 GB, although it uses the new GDDR6X memory with PAM4 signaling, which reaches a real clock of 1188 MHz (2376 MHz, or 19 Gbps effective).

All of this runs on a 320-bit bus, which results in a bandwidth of 760 GB/s. What can the AMD RX 6800 do here? First of all, it has 16 GB of VRAM, but of the GDDR6 type, on a 256-bit bus, which together with its speed of 2000 MHz (16 Gbps) gives a bandwidth of 512 GB/s.

NVIDIA therefore has a considerable advantage here, with roughly 48.4% more bandwidth, although this is partly offset by the 128 MB of L3 on the AMD GPU, which helps a lot in specific tasks such as tessellation.
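The bandwidth figures follow from bus width and per-pin data rate. A minimal sketch, using only the numbers quoted above (the function name `bandwidth_gb_s` is illustrative):

```python
def bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width times per-pin rate, bits to bytes."""
    return bus_width_bits * data_rate_gbps / 8


rtx_3080_bw = bandwidth_gb_s(320, 19.0)  # GDDR6X, 320-bit bus
rx_6800_bw = bandwidth_gb_s(256, 16.0)   # GDDR6, 256-bit bus

# Relative advantage of the RTX 3080 over the RX 6800, in percent.
advantage_pct = (rtx_3080_bw / rx_6800_bw - 1) * 100

print(f"RTX 3080: {rtx_3080_bw:.0f} GB/s")
print(f"RX 6800:  {rx_6800_bw:.0f} GB/s")
print(f"NVIDIA advantage: {advantage_pct:.1f}%")
```

This covers raw VRAM bandwidth only; it does not model the effect of the 128 MB L3 cache on the AMD side.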

RTX 3080 vs RX 6800: conclusions and real performance

RTX 3080 vs RX 6800: going by the rest of the specifications, such as power consumption (250 watts for the RX 6800 and 320 watts for the RTX 3080), the reality is that both GPUs are quite close in performance and somewhat further apart in price.

AMD’s GPU has an MSRP of about $579, while NVIDIA’s is $699, a gap of roughly 20.7%, but the actual performance difference is around 17-18%.
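To put the price and performance gaps side by side, here is a small sketch. It uses the MSRPs quoted above and takes the 17% and 18% ends of the performance range as inputs; the helper `gap_pct` and the "performance per dollar" ratio are illustrative, not a measured benchmark.

```python
def gap_pct(cheaper: float, dearer: float) -> float:
    """Return how much larger `dearer` is than `cheaper`, in percent."""
    return (dearer / cheaper - 1) * 100


RX_6800_MSRP = 579.0
RTX_3080_MSRP = 699.0

price_gap = gap_pct(RX_6800_MSRP, RTX_3080_MSRP)
print(f"Price gap: {price_gap:.1f}%")

# Performance per dollar of the RTX 3080 relative to the RX 6800,
# assuming the performance gap quoted in the article (17-18%).
for perf_gap in (17.0, 18.0):
    relative_value = (1 + perf_gap / 100) / (1 + price_gap / 100)
    print(f"Performance gap {perf_gap:.0f}% -> {relative_value:.3f}x performance per dollar")
```

Under these assumptions the RTX 3080 delivers slightly less performance per dollar than the RX 6800, since the price gap is a little wider than the performance gap.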

That is without counting the difference in VRAM which, although it does not add performance today, assures us that the AMD GPU will have fewer problems in this area in the future. The catch is that, as usual, we will run out of “muscle” before we run out of VRAM. Add to this that all current engines and the vast majority of games cache textures in system RAM, plus the recent arrival of BAR support to make better use of GPU VRAM, and this factor is not so limiting.

Of course, these cases arise at resolutions such as 4K or UWHD, since at 2K or 1080p the textures weigh much less and we will have VRAM to spare on both cards in any game. So the graphics memory argument does not really hold, since practically no game manages to fill the 10 GB of the RTX 3080. Although the trend in this area is upward, unless you want to play at 4K and high FPS, the more logical and better balanced option is NVIDIA’s, even though it is somewhat more expensive.

The $120 difference in list price (Founders Edition vs. reference card) is justified by its higher performance and by another factor that is rarely mentioned: better drivers. NVIDIA continues to offer better driver support: more mature, with fewer bugs and very useful. That is a meaningful difference that always has to be paid for; it is no accident that they have an army of engineers working on it.