The elephant in the room that is rarely talked about is that no NVIDIA or AMD GPU is truly ready for 4K, because a number of physical limitations prevent building GPUs with the specifications that resolution demands. We explain what those limitations are and how they have influenced the design of the latest generation of NVIDIA GPUs.

Although many of us take 4K resolution for granted, in terms of gaming performance not even the most powerful graphics cards on the market seem able to handle that resolution with ease, that is, to hold a stable 60 frames per second.

No NVIDIA or AMD GPU is really 4K ready

4K resolution, also called Ultra HD or UHD, uses an image buffer of 3840 x 2160 pixels. That is four times as many pixels as 1080p or Full HD resolution, which uses a 1920 x 1080 pixel image buffer.

This means that, for the same per-pixel workload, 4K requires not only four times as many operations but also four times the bandwidth of 1080p, since there is four times as much data to move.
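As a quick check of the arithmetic above, the following Python sketch counts the pixels in each buffer and how many must be produced per second at 60 frames per second. The function name `pixel_count` and the constant `TARGET_FPS` are illustrative choices, not taken from any library.

```python
# Pixel counts for the two resolutions discussed above.
TARGET_FPS = 60  # the frame rate the article treats as the target


def pixel_count(width: int, height: int) -> int:
    """Return the number of pixels in a width x height image buffer."""
    return width * height


full_hd = pixel_count(1920, 1080)  # 2,073,600 pixels
uhd_4k = pixel_count(3840, 2160)   # 8,294,400 pixels

ratio = uhd_4k / full_hd                    # 4.0
pixels_per_second_4k = uhd_4k * TARGET_FPS  # 497,664,000

print(f"1080p: {full_hd:,} pixels")
print(f"4K:    {uhd_4k:,} pixels ({ratio:.0f}x)")
print(f"4K at {TARGET_FPS} fps: {pixels_per_second_4k:,} pixels per second")
```

This only counts output pixels. The real cost per pixel depends on the game, which is why the factor of four is a baseline and not a precise prediction.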

A quick look at the different graphics card ranges from both AMD and NVIDIA shows that the models aimed at 4K do not meet those requirements.

Comparing NVIDIA’s RTX 3000 range as an example

NVIDIA touts the newly released RTX 3060 Ti as an ideal card for running next-gen games at 1080p, so we are going to compare it spec for spec with the RTX 3090.

| | NVIDIA RTX 3060 Ti | NVIDIA RTX 3090 FE | Differential |
|---|---|---|---|
| Boost clock (MHz) | 1665 | 1695 | |
| SMs | 38 | 82 | |
| CUDA cores (FP32) | 4864 | 10496 | 2.2X |
| TFLOPS (FP32) | 16.2 | 35.58 | |
| Texture units | 152 | 328 | |
| Gigatexels/s | 253.08 | 555.96 | 2.2X |
| ROPs | 80 | 112 | |
| Gigapixels/s | 133.2 | 189.84 | 1.43X |
| VRAM bus width (bits) | 256 | 384 | |
| VRAM speed (Gbps per pin) | 14 | 19.5 | |
| VRAM bandwidth (GB/s) | 448 | 936 | 2.09X |

As you can see, the RTX 3090, despite being sold as a 4K-ready card, does not quadruple the throughput of the RTX 3060 Ti. It roughly doubles it, and in pixel fill rate the gain is only 1.43X.
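The derived rows of the comparison can be recomputed from the raw specs. The sketch below uses the usual theoretical-peak formulas and assumes two FP32 operations (one fused multiply-add) per CUDA core per clock. The dictionary keys and the `peak_rates` helper are names made up for this illustration.

```python
# Derive theoretical peak rates from the raw specs in the comparison table.
SPECS = {
    "RTX 3060 Ti": {"boost_mhz": 1665, "cuda_cores": 4864, "tmus": 152,
                    "rops": 80, "bus_bits": 256, "gbps_per_pin": 14.0},
    "RTX 3090 FE": {"boost_mhz": 1695, "cuda_cores": 10496, "tmus": 328,
                    "rops": 112, "bus_bits": 384, "gbps_per_pin": 19.5},
}


def peak_rates(spec: dict) -> dict:
    """Return theoretical peak throughput figures for one card."""
    ghz = spec["boost_mhz"] / 1000
    return {
        # Assumption: 2 FP32 operations (one FMA) per core per clock.
        "tflops": spec["cuda_cores"] * 2 * ghz / 1000,
        # One texel per texture unit per clock.
        "gigatexels": spec["tmus"] * ghz,
        # One pixel per ROP per clock.
        "gigapixels": spec["rops"] * ghz,
        # Bus width in bits times per-pin data rate, divided by 8 bits per byte.
        "bandwidth_gbs": spec["bus_bits"] * spec["gbps_per_pin"] / 8,
    }


small = peak_rates(SPECS["RTX 3060 Ti"])
big = peak_rates(SPECS["RTX 3090 FE"])

# Print each metric for both cards, followed by the 3090 / 3060 Ti ratio.
for metric in small:
    print(f"{metric:14s} {small[metric]:8.2f} {big[metric]:8.2f} "
          f"{big[metric] / small[metric]:.2f}x")
```

These are peak figures only. Real games rarely sustain them, but the ratios between the two cards are what matter for the argument here.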

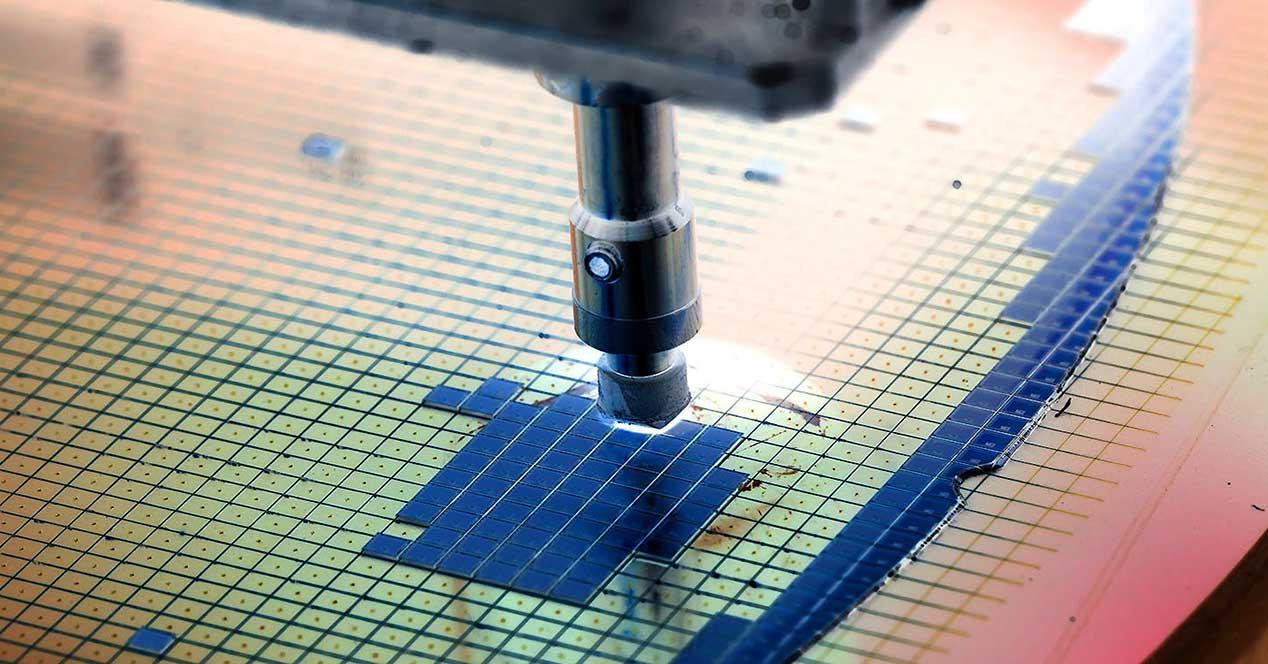

The reason is simple: a GPU with four times the units of the RTX 3060 Ti would be so large that it would be almost impossible to manufacture, since its die would exceed the reticle limit, the largest area that lithography equipment can expose in a single pass.

The other issue is bandwidth. Although GDDR6X is very fast, quadrupling bandwidth would require an extremely wide memory bus, which would increase not only the price of the graphics card but also its power consumption.

DLSS is NVIDIA’s solution

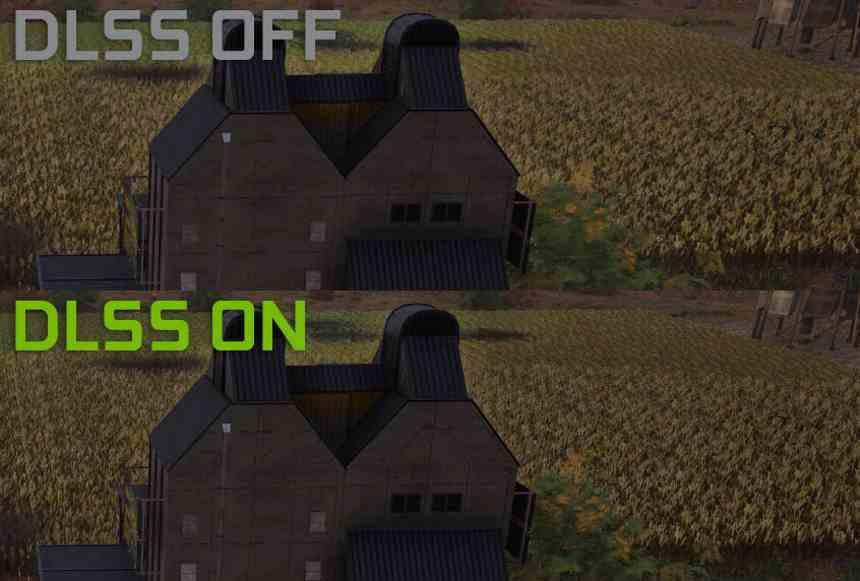

NVIDIA’s answer is DLSS, which renders the scene at a lower resolution and then, within at most 2.5 milliseconds, scales the image to a higher resolution using an artificial intelligence algorithm.
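To put that 2.5 millisecond window in context, the sketch below works out how much of a 60 frames per second frame it takes and how many pixels the GPU avoids shading. The internal resolution of 2560 x 1440 is an assumption chosen for illustration; the text does not say which internal resolution is used.

```python
# Frame-time budget and pixel savings when upscaling to 4K.
TARGET_FPS = 60
UPSCALE_BUDGET_MS = 2.5      # upper bound quoted in the text
OUTPUT_RES = (3840, 2160)    # 4K output
INTERNAL_RES = (2560, 1440)  # ASSUMED internal resolution (hypothetical)

frame_time_ms = 1000 / TARGET_FPS                      # about 16.67 ms
render_budget_ms = frame_time_ms - UPSCALE_BUDGET_MS   # time left to render

output_pixels = OUTPUT_RES[0] * OUTPUT_RES[1]
internal_pixels = INTERNAL_RES[0] * INTERNAL_RES[1]
shaded_fraction = internal_pixels / output_pixels      # share of pixels shaded

print(f"Frame time at {TARGET_FPS} fps: {frame_time_ms:.2f} ms")
print(f"Left for rendering after upscaling: {render_budget_ms:.2f} ms")
print(f"Pixels shaded vs native 4K: {shaded_fraction:.0%}")
```

Under this assumption the GPU shades fewer than half the pixels of a native 4K frame and spends a fixed slice of each frame on upscaling.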

Because the time window for this operation is so short, very high computing power is required. Fortunately, the image buffer holds very low-precision data, since each pixel is stored as RGB components.

Tensor Cores, with their large number of ALUs operating at 16-bit precision and below, are ideal for running these scaling algorithms.

The handicap of this type of solution is that it often fails to produce a correct, ideal image. That is why games that use AI algorithms to increase resolution can be counted on the fingers of one hand: the AI does not always generate the correct pixels, and getting there requires continuous training over a long time.

Resolution vs image quality

Resolution is nothing more than the number of pixels on the screen. Image quality is the complexity of the image: the more complex it is, the more operations per pixel it needs, and therefore the more power.

In recent years many factors have been pushing up the performance demanded of graphics cards. Resolution is one of them, but we cannot forget Ray Tracing, which improves the image quality of games.

Today, not only do contemporary GPUs lack the power for 4K, but with Ray Tracing enabled they are far from delivering respectable performance.

Will 5nm be the key to 4K on AMD and NVIDIA GPUs?

The latest rumors, not confirmed by NVIDIA, point to a 5 nm version of the current GeForce Ampere code-named “Lovelace”, which would give us a jump like the one from the 900 series to the 1000 series. On the other hand, RDNA 3 will very possibly be built on TSMC’s 5 nm node.

Will we have to wait for those cards to get good 4K performance? The main handicap is still memory. GPUs are designed around a bytes-per-FLOP ratio, that is, the amount of memory bandwidth available per unit of computing power, so their compute can only scale as far as the VRAM allows.
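The bytes-per-FLOP ratio can be estimated from the figures in the comparison table. The sketch below again assumes two FP32 operations per CUDA core per clock, and the "4x" card at the end is purely hypothetical: it shows the bandwidth a GPU with four times the compute of the RTX 3060 Ti would need to keep the same ratio.

```python
# Estimate bytes of memory bandwidth available per FP32 operation.
def bytes_per_flop(bandwidth_gbs: float, cuda_cores: int, boost_mhz: int) -> float:
    """Return peak memory bytes per peak FP32 operation."""
    # Assumption: 2 FP32 operations (one FMA) per core per clock.
    flops = cuda_cores * 2 * boost_mhz * 1e6
    return bandwidth_gbs * 1e9 / flops


ratio_3060ti = bytes_per_flop(448, 4864, 1665)
ratio_3090 = bytes_per_flop(936, 10496, 1695)

# Hypothetical GPU with 4x the compute of the RTX 3060 Ti at the same ratio.
flops_4x = 4 * (4864 * 2 * 1665 * 1e6)
required_bandwidth_gbs = ratio_3060ti * flops_4x / 1e9

print(f"RTX 3060 Ti: {ratio_3060ti:.4f} bytes per FLOP")
print(f"RTX 3090:    {ratio_3090:.4f} bytes per FLOP")
print(f"Bandwidth for a 4x card: {required_bandwidth_gbs:.0f} GB/s")
```

Both cards land at a very similar ratio, which fits the point that compute is scaled in step with what the memory can feed.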

Without adequate memory that delivers sufficiently high bandwidth at acceptable power consumption, GPUs will continue to be limited.