Semiconductor manufacturing processes are still labeled in nanometers (nm), but that label has become little more than a crude marketing device that no longer describes the process actually used. For this reason, a team of researchers proposes changing how process nodes are measured, and here we explain how it would work.

Not so long ago, manufacturing processes could be compared simply by their node name (e.g. 300 nm, 32 nm, 14 nm), but more recent advances in manufacturing technology have stripped that number of all meaning when it comes to describing the actual density of a process.

Why change the way of measuring nm?

The figure manufacturers quoted used to refer directly to the minimum gate length of the transistors in the process: at 300 nm, for example, the minimum gate length was precisely 300 nanometers. That has not been the case for some time. Intel offers a practical example: it claims that its 10 nm lithographic process is comparable to TSMC’s 7 nm.

This has made it difficult for stakeholders to obtain meaningful information from that particular semiconductor metric.

How nanometers will be measured in the future

Now, a team of researchers has addressed this problem by suggesting a different way of expressing semiconductor manufacturing capability. Their intention is to “measure progress in future generations of semiconductor technologies comprehensively, taking into account progress in logic, memory, and packaging/integration technologies simultaneously.”

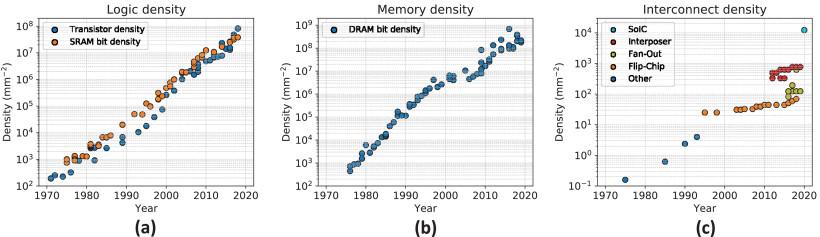

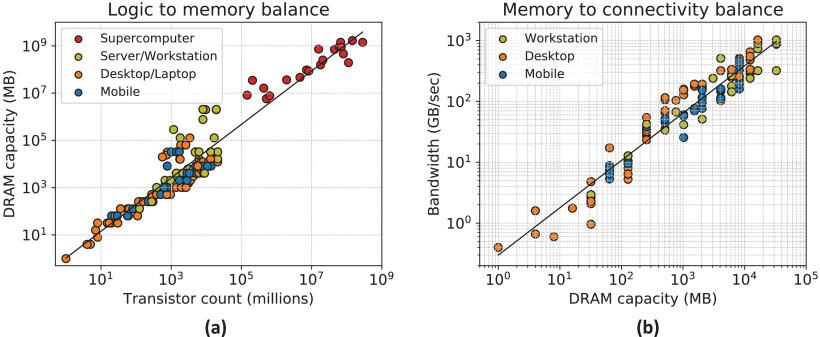

The proposed density metric takes the form [DL, DM, DC], where DL is the density of logic transistors (count per mm²), DM is the bit density of main memory (off-chip DRAM, in bits per mm²), and DC is the density of the connections between main memory and logic (also in count per mm²).

According to the researchers, the current state of semiconductor technology would be described under this system as something like [38M, 383M, 12K].
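As a rough illustration of the notation, the sketch below turns three raw per-mm² values into the abbreviated triple. The function names `format_count` and `density_label` and the K/M/G rounding rule are assumptions made for this example; the article does not say how the researchers round or abbreviate their figures.

```python
def format_count(value: float) -> str:
    """Abbreviate a per-mm² count with a K, M, or G suffix (assumed convention)."""
    for threshold, suffix in ((1e9, "G"), (1e6, "M"), (1e3, "K")):
        if value >= threshold:
            return f"{value / threshold:.0f}{suffix}"
    return f"{value:.0f}"


def density_label(dl: float, dm: float, dc: float) -> str:
    """Build the [DL, DM, DC] string from three densities given per mm²."""
    return "[" + ", ".join(format_count(v) for v in (dl, dm, dc)) + "]"


# Values taken from the example triple quoted in the article.
print(density_label(38e6, 383e6, 12e3))  # [38M, 383M, 12K]
```

Read this way, the example triple means roughly 38 million logic transistors, 383 million DRAM bits, and 12 thousand logic-to-memory connections per mm².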

The researchers include DM and DC because today’s computer systems rely heavily on off-chip memory density, and logic-to-memory performance and I/O are becoming increasingly important in all kinds of scenarios (especially as compute performance increases).

Additionally, the researchers have observed comparable growth in logic transistor density and DRAM bit density, which supports combining both in the new metric. Recall that these values are meant to represent the maximum effective capability of a given manufacturing process. A next-generation Intel process, for example, would report its maximum transistor density, obtained from an equation as simple as the total number of transistors divided by the die area, along with the maximum DRAM bit density that can be paired with a chip built on that process.
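The density calculation described above is a plain division of a count by an area. The following is a minimal sketch of that arithmetic; the helper name `density_per_mm2` and the input numbers are hypothetical and chosen only to keep the math easy to follow, not taken from any real chip.

```python
def density_per_mm2(count: float, area_mm2: float) -> float:
    """Return how many items fit in one square millimetre."""
    if area_mm2 <= 0:
        raise ValueError("area_mm2 must be positive")
    return count / area_mm2


# Hypothetical inputs for illustration only; not figures from any real chip.
transistors = 10e9      # total logic transistors on the die
die_area_mm2 = 100.0    # die area in mm²

dl = density_per_mm2(transistors, die_area_mm2)
print(f"DL = {dl:,.0f} transistors per mm²")
```

The same helper would apply to DM (DRAM bits over memory die area) and DC (connections over the relevant area), assuming those are also reported as simple counts per mm².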

Considering that the current naming conventions for manufacturing process density have too many “gaps”, this way of measuring really seems quite sensible, as it would hold manufacturers to a “standard” that represents reality rather than a marketing formula designed to sell more.

Manufacturers would still have some leeway for their marketing departments to name processes however they want, but they would also have to provide exact density metrics for those processes. And since the metric refers to the maximum densities the process can support (for logic transistors, memory bits, and logic-to-memory connections), it would give us consumers a clearer and, above all, more realistic picture of the semiconductor landscape.