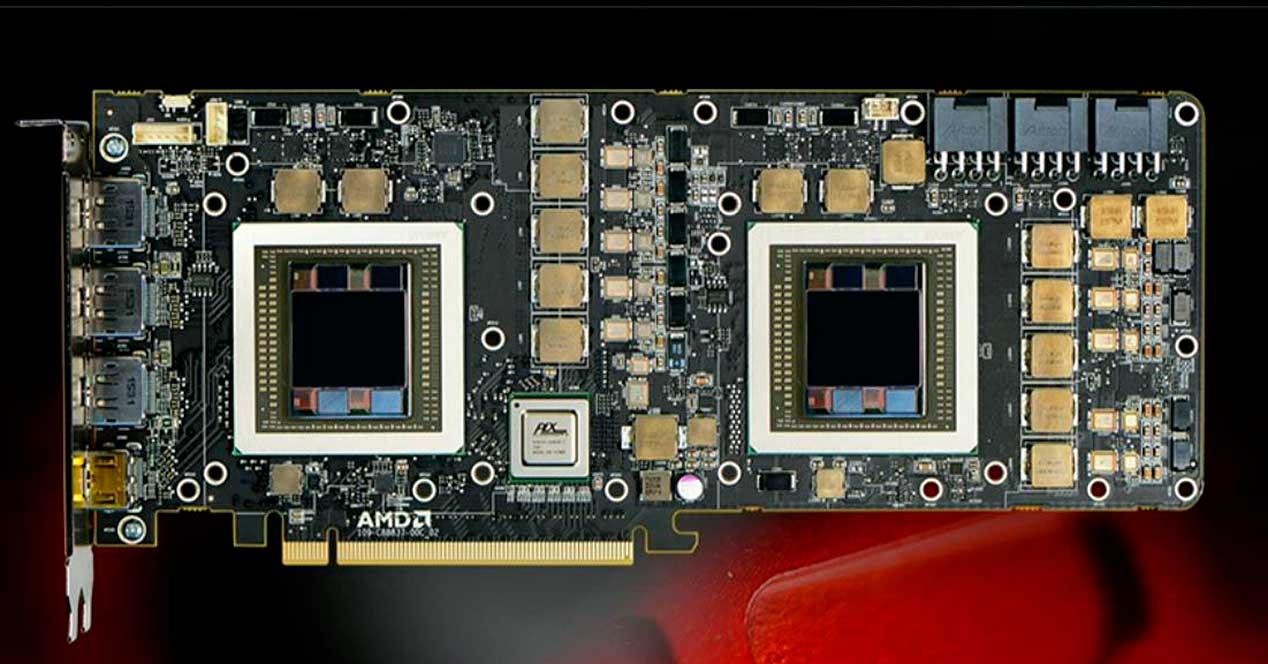

If you have followed the market in recent years, you may have noticed that large manufacturers such as AMD and NVIDIA no longer offer graphics cards with two GPUs on their new architectures. What has led both companies to stop betting on dual-GPU configurations?

For some time now, dual graphics cards, which used to sit at the very top of the consumer range, have been absent from stores.

A dual graphics card works the same as two separate graphics cards, except that both GPUs share a single PCI Express connection, which limits both the power available to the two GPUs and their communication with the CPU. The most logical explanation for its disappearance is that manufacturers make less money selling one dual card than two single cards. In addition, because the market for dual cards is so small, unsold units end up being a stock problem.

By contrast, selling single-GPU cards that can be linked through technologies such as SLI or CrossFire is more profitable and makes stock easier to manage. But the technical motivations are somewhat more complex and have to do with how consumer software, and especially video games, use GPUs.

CPU-GPU communication and how it affects dual GPUs

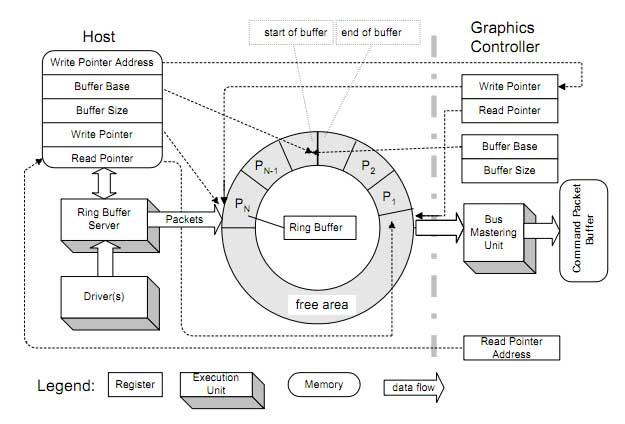

At the beginning of each frame of a video game, the CPU calculates the position of every object in the scene, along with interactions and collision detection. From this it builds an ordered list of things to do, called a “command list”, which it writes to a region of main RAM. The GPU then reads that list through a DMA unit, which gives it access to the system's main RAM (not to be confused with the graphics card's own memory), and it treats the list as a ring. In other words, the GPU reads the same range of memory addresses that holds the list or lists, in a loop.
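The ring described above can be sketched in a few lines of Python. This is only an illustration of the idea, not how any real driver lays out memory: the class name `CommandRing`, its methods and the command strings are all hypothetical, and a plain Python list stands in for the region of system RAM.

```python
class CommandRing:
    """Toy ring of command slots: the CPU writes, the GPU reads in a loop.

    Hypothetical sketch: a Python list stands in for a region of system RAM.
    """

    def __init__(self, capacity):
        self.slots = [None] * capacity
        self.capacity = capacity
        self.write_idx = 0  # next slot the CPU will fill
        self.read_idx = 0   # next slot the GPU will consume
        self.count = 0      # commands written but not yet read

    def cpu_write(self, command):
        """Append a command; return False if the GPU has not freed a slot yet."""
        if self.count == self.capacity:
            return False
        self.slots[self.write_idx] = command
        self.write_idx = (self.write_idx + 1) % self.capacity  # wrap around
        self.count += 1
        return True

    def gpu_read(self):
        """Consume the oldest pending command, or return None if the ring is empty."""
        if self.count == 0:
            return None
        command = self.slots[self.read_idx]
        self.read_idx = (self.read_idx + 1) % self.capacity  # same addresses, in a loop
        self.count -= 1
        return command


ring = CommandRing(capacity=4)
for cmd in ["set_state", "bind_texture", "draw_mesh", "present"]:
    ring.cpu_write(cmd)
first = ring.gpu_read()  # "set_state": commands come out in the order written
```

Both indices wrap around with the modulo operation, so the same slots are reused frame after frame, which is the "same memory addresses in a loop" behavior the text describes.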

All GPUs have a small processor called the “command processor”, which reads the part of system memory where the CPU has previously written the list of commands that tell the GPU what it has to do to draw the current frame. This unit sits in the central part of the GPU regardless of manufacturer or architecture.

Much like the conductor of an orchestra, the command processor sits at the center of the chip because it is the piece in charge of directing the work of the GPU's different units and making sure data circulates correctly between them. A dual-GPU setup can assign each GPU to a different display, but in the consumer market the usual goal has always been to combine two GPUs to render the same scene faster, in more detail, or both.

How do Dual GPUs render? Split Frame Rendering vs Alternate Frame Rendering

The idea behind a dual GPU is simply to combine the power of both GPUs to render the same scene. For this, the two GPUs have to coordinate with each other, and there are generally two methods:

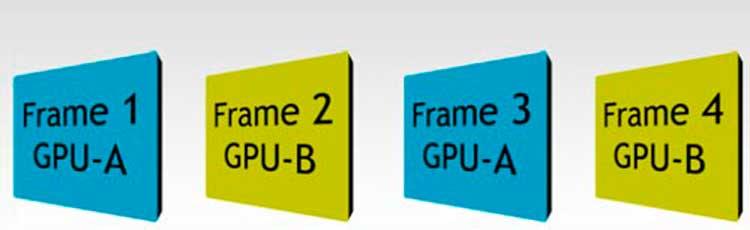

- The GPUs work in an interleaved manner: each one handles a different frame and therefore a different command list. This is called Alternate Frame Rendering.

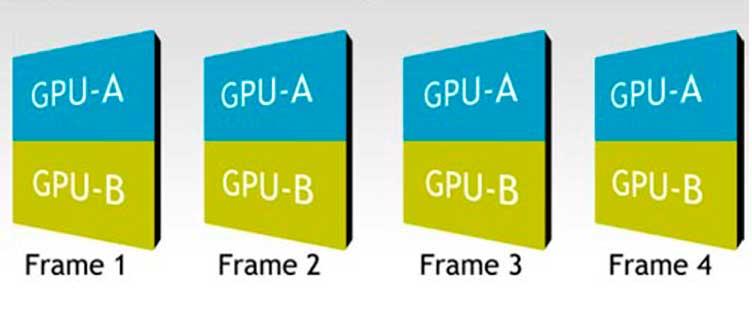

- Both GPUs collaborate on the same frame and share the screen space. This is called Split Frame Rendering.

The first case is the easiest to implement and requires no synchronization between the two GPUs within a frame, since each of them handles a different frame. The drawback is that assets such as textures and models are duplicated in the memory of each card because, although the GPUs work together, each one renders its own frames independently of the other.

AFR is the most widely used mode on PC graphics cards working in dual mode. Its main advantage is that one GPU can start its frame before its partner has finished the previous one, and the CPU can build the command list for the second GPU just before the first one finishes its work. Bear in mind that with AFR, frames do not all take the same time to render, so at certain moments one GPU will carry a greater load than the other. Since the two GPUs are not inherently synchronized, some element is needed to coordinate when each GPU begins and ends its frame, so that one does not step on the other when the image is generated.
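To make the coordination problem concrete, here is a minimal sketch of AFR scheduling. It assumes the simplest possible policy (frame `i` goes to GPU `i % num_gpus`) and made-up frame costs in milliseconds; the function names are hypothetical and no real driver is claimed to work exactly this way.

```python
def afr_schedule(frame_costs_ms, num_gpus=2):
    """Assign frame i to GPU i % num_gpus; return (gpu, start, finish) per frame."""
    gpu_free_at = [0.0] * num_gpus  # time at which each GPU becomes idle
    schedule = []
    for i, cost in enumerate(frame_costs_ms):
        gpu = i % num_gpus
        start = gpu_free_at[gpu]
        finish = start + cost
        gpu_free_at[gpu] = finish
        schedule.append((gpu, start, finish))
    return schedule


def present_times(schedule):
    """Frames must be displayed in order, so none is shown before its predecessor."""
    shown = []
    last = 0.0
    for _gpu, _start, finish in schedule:
        last = max(last, finish)  # a frame that finished early has to wait
        shown.append(last)
    return shown


# Hypothetical costs: even frames are heavy (10 ms), odd frames are light (4 ms).
schedule = afr_schedule([10, 4, 10, 4])
shown = present_times(schedule)
# schedule -> [(0, 0.0, 10.0), (1, 0.0, 4.0), (0, 10.0, 20.0), (1, 4.0, 8.0)]
# shown    -> [10.0, 10.0, 20.0, 20.0]
```

In this toy run the second GPU finishes frames 1 and 3 long before the first GPU delivers frames 0 and 2, yet they cannot be shown until their predecessors are done. That waiting is the job of the coordinating element mentioned above.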

The second case is typical of GPUs for post-PC devices such as smartphones and tablets, since their GPUs are usually made up of several symmetrical GPU cores operating in parallel and sharing the same memory access. The type of rendering traditionally used in these devices is tile-based rendering, which divides the screen into tiles. In this way of rendering, the screen is first divided by the number of available GPU cores. Each GPU core then treats its portion of the screen as if it were a whole frame.
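As a rough illustration of that first division, the sketch below splits a screen into one region per GPU core. Splitting into horizontal strips is an assumption made for simplicity (real hardware typically uses much smaller tiles), and `split_screen` is a hypothetical helper, not part of any graphics API.

```python
def split_screen(width, height, num_cores):
    """Split the screen into num_cores horizontal strips as (x, y, w, h) tuples."""
    if num_cores < 1:
        raise ValueError("num_cores must be at least 1")
    base, extra = divmod(height, num_cores)
    regions = []
    y = 0
    for core in range(num_cores):
        # Spread leftover rows over the first strips so every pixel is covered.
        h = base + (1 if core < extra else 0)
        regions.append((0, y, width, h))
        y += h
    return regions


regions = split_screen(1920, 1080, 4)
# -> [(0, 0, 1920, 270), (0, 270, 1920, 270), (0, 540, 1920, 270), (0, 810, 1920, 270)]
```

Each tuple can then be handed to one core as its own viewport, so that the core renders its strip as if it were a complete frame.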

Split Frame Rendering

This method is called Split Frame Rendering, or SFR, and it works especially well in systems where, despite there being several GPUs, all of them share the same memory pool. On PC, starting with DirectX 12, Microsoft added support for Split Frame Rendering, allowing two graphics cards to handle the same frame at the same time while sharing the same memory addressing. This looks really nice on paper, but what happens when two GPUs do not share the same physical memory pool and are rendering the same frame at the same time? Extremely high access latencies appear whenever one GPU needs data that lives in the shared address space but not in its own memory.

To work properly, SFR requires both GPUs to share the same physical memory pool, not just the same addressing. For some time now, some GPU models have shipped with command processors that support virtualization: a single GPU presents itself as two or more GPUs and divides the available hardware resources among them. This is widely used in data centers, where one GPU can serve several clients at the same time.

But in the consumer PC market, neither SFR nor AFR is used, because both require software to adapt the way it builds command lists for this type of configuration. The number of people with a dual GPU is extremely low, and it does not pay at all to develop software that takes advantage of these features. Even the virtual reality market, where having one GPU render each eye would be a plus, does not generate enough demand for graphics cards whose command processors support virtualization as standard.

Has DirectX 12 Affected Dual GPUs?

Starting with DirectX 11, GPUs gained the ability to do general-purpose computing alongside graphics rendering. This works by keeping not a single command buffer in memory but several: the main one is in charge of rendering the graphics, while the rest carry small compute tasks.

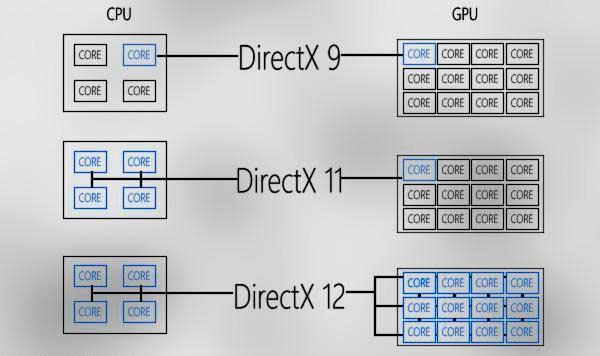

In DirectX 11, although system RAM holds several command lists besides the one for graphics, inside the GPU everything was executed as one long list in which rendering the scene took priority. DirectX 12 brought a big change: compute lists are executed by the GPU in parallel and asynchronously. This means that these command lists are processed regardless of the state of the command list for rendering graphics.
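The difference between the two execution models can be illustrated with a deliberately idealized timing model. The numbers are invented, the function names are hypothetical, and the asynchronous case assumes compute work overlaps perfectly with graphics, which real hardware only approximates because both kinds of work compete for the same resources.

```python
def serial_frame_time(graphics_ms, compute_ms):
    """One long list: every compute task adds to the time the GPU is busy."""
    return graphics_ms + sum(compute_ms)


def async_frame_time(graphics_ms, compute_ms):
    """Idealized async model: compute lists overlap fully with graphics work."""
    return max(graphics_ms, sum(compute_ms))


graphics = 12.0       # hypothetical graphics command list cost, in ms
compute = [2.0, 3.0]  # hypothetical compute command lists, in ms

serial_ms = serial_frame_time(graphics, compute)      # 17.0
overlapped_ms = async_frame_time(graphics, compute)   # 12.0
```

In the overlapped case the GPU can still be busy with compute work at moments that have nothing to do with where the graphics list is, so "the frame is done" stops being a reliable signal that the GPU is free.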

Keep in mind that both SFR and AFR depend on knowing whether GPU resources are available, that is, whether the frame has finished or not. With asynchronous compute, a programmer can squeeze a few extra milliseconds out of a GPU to perform additional tasks outside of scene composition. This makes it very difficult to coordinate two GPUs in the way AFR and SFR require, so DirectX 12 has been the final nail in the coffin of dual GPUs in the consumer market.

These changes, together with the fact that too few people own dual-GPU configurations to justify optimizing games for them, have made dual-GPU cards disappear completely from the market.