It’s no secret that AMD is going to get a lot of mileage out of its vertical cache technology in the future. We have already seen it in the EPYC Milan-X processors for servers and in the Ryzen 7 5800X3D for PCs. However, the plans could go further, and we could eventually be talking about an L4 cache and AI acceleration in future AMD Ryzen processors.

The cache memory of a processor has a clear function: to speed up the execution of instructions. To do so, however, it must never be slower than RAM at fetching data. A point is therefore reached where adding more cache levels is counterproductive and does not improve performance. The reason is that the levels are checked one after another, so every level that misses adds its own access time before the request finally reaches system memory.

However, the arrival of DDR5 memory and its higher access latency opens a window of opportunity for one more level in the hierarchy. In the case of desktop AMD Ryzen, this level would not be located in the CCD chiplets but much further away, specifically next to the integrated memory controller. That would amount to an L4 cache in AMD processors, whether EPYC, Threadripper or Ryzen.
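The trade-off between the cost of an extra lookup and the latency of system memory can be sketched with the textbook average memory access time (AMAT) model. This is only an illustration: the function name `amat`, the hit rates and every latency figure below are made-up assumptions, not measurements of any AMD or Intel product.

```python
def amat(levels, memory_latency):
    """Average memory access time for a hierarchy searched level by level.

    levels: list of (lookup_latency_ns, hit_rate) tuples, closest cache first.
    memory_latency: latency of system RAM in ns, paid when every level misses.
    """
    total = 0.0
    reach = 1.0  # fraction of accesses that get as far as the current level
    for lookup_latency, hit_rate in levels:
        total += reach * lookup_latency  # every access reaching a level pays its lookup
        reach *= 1.0 - hit_rate          # only the misses continue outward
    return total + reach * memory_latency


# Hypothetical figures chosen only to show the effect.
three_levels = [(1.0, 0.90), (3.0, 0.70), (10.0, 0.60)]
four_levels = three_levels + [(30.0, 0.40)]  # assumed L4: 30 ns lookup, 40% hit rate

for ram_latency in (60.0, 90.0):  # assumed "fast" and "slow" system memory
    without_l4 = amat(three_levels, ram_latency)
    with_l4 = amat(four_levels, ram_latency)
    verdict = "helps" if with_l4 < without_l4 else "hurts"
    print(f"RAM {ram_latency:.0f} ns: no L4 {without_l4:.3f} ns, "
          f"with L4 {with_l4:.3f} ns -> L4 {verdict}")
```

With these assumed numbers, the extra level only pays off once its lookup latency is smaller than its hit rate multiplied by the RAM latency (here, once RAM is slower than 75 ns), which is why a jump in memory latency can turn a pointless cache level into a useful one.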

How do we know that future AMD CPUs will have an L4 cache?

The answer is simple: a patent. With it, AMD intends to match a capability that Intel already offers. We are referring to the AMX (Advanced Matrix Extensions) units in the Sapphire Rapids-based Xeons, that is, a systolic-array or tensor-type unit for matrix calculations. In other words: hardware that speeds up artificial intelligence algorithms so that they run more smoothly on the processor.
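To give an idea of what a systolic-array unit does, here is a minimal software model of an output-stationary array multiplying two small matrices. It is a generic textbook sketch, not the design in AMD's patent or Intel's hardware; the function name `systolic_matmul` and the timing scheme are assumptions made for illustration.

```python
def systolic_matmul(a, b):
    """Simulate an output-stationary systolic array computing a @ b.

    Each cell (i, j) holds one accumulator. Operands are fed in skewed,
    so the pair a[i][k], b[k][j] meets in cell (i, j) at cycle i + j + k.
    Returns the result matrix and the number of cycles simulated.
    """
    rows, inner, cols = len(a), len(b), len(b[0])
    acc = [[0] * cols for _ in range(rows)]
    cycles = rows + cols + inner - 2  # last pair arrives at cycle rows+cols+inner-3
    for t in range(cycles):
        for i in range(rows):
            for j in range(cols):
                k = t - i - j  # which operand pair reaches this cell now
                if 0 <= k < inner:
                    acc[i][j] += a[i][k] * b[k][j]  # one multiply-accumulate per cell
    return acc, cycles


result, cycles = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(result, cycles)  # [[19, 22], [43, 50]] 4
```

The point of the structure is that all cells perform their multiply-accumulate in the same cycle, so the work that a scalar loop does one product at a time is spread across the whole grid.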

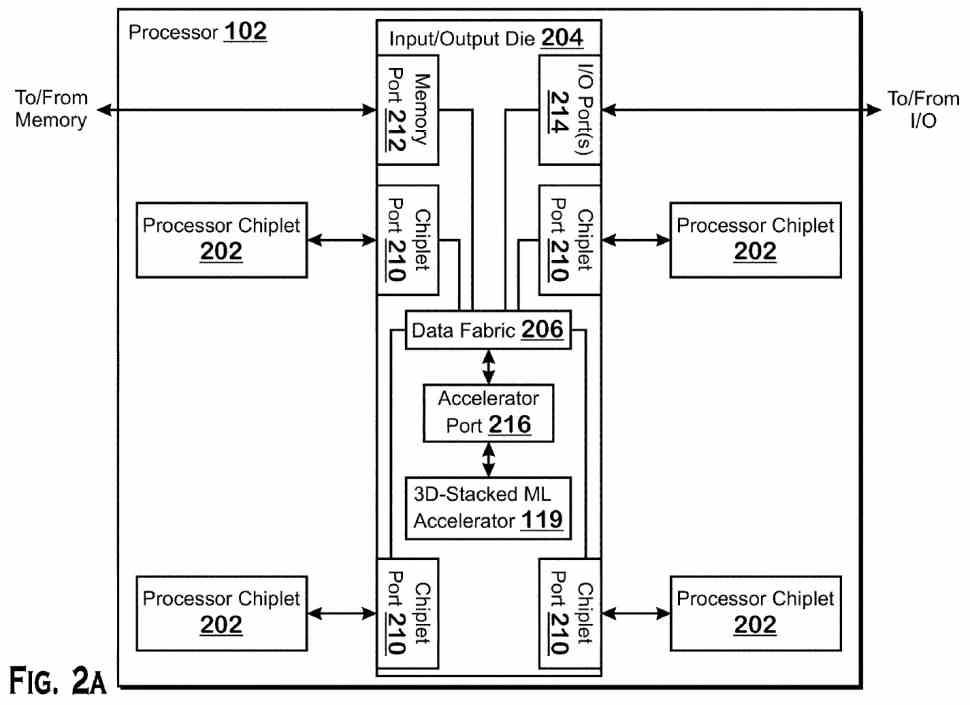

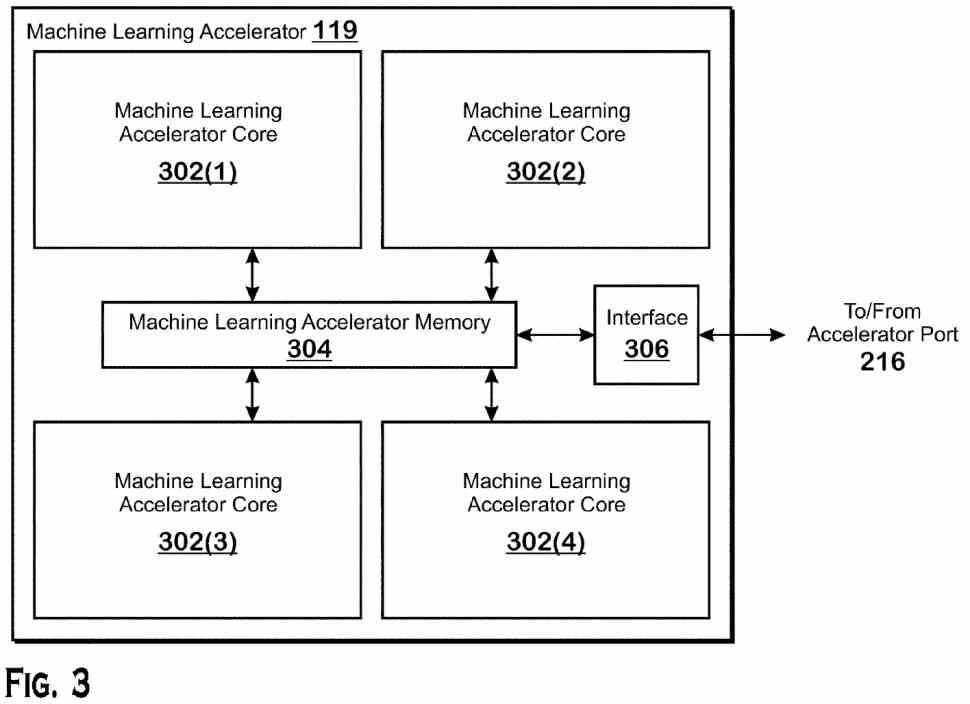

To do this, the patent describes a new IOD, which stands out for sacrificing some of the ports for the processor chiplets in exchange for integrating a unit that accelerates machine learning algorithms. So we are looking at AMD’s response to the inclusion of the AMX unit in the latest server processors from its main rival. The twist is that, while Intel has chosen to equip some of its Sapphire Rapids Xeons with HBM memory, AMD’s solution is simpler: the same V-Cache that sits on top of the processor, used here to speed up AI algorithms, although in this case in the form of an L4 cache, a level hitherto unseen in processors with the Zen architecture.

At the moment this is still only a patent, but it is likely to result in a new CPU variant. In any case, this is the market in which AMD has so far shown the least interest of all. Rumors also claim that the unit could actually be an embedded FPGA, or eFPGA, from Xilinx. We will find out when the time comes.