Thanks to the cloud, practically every well-known antivirus can protect us from more than 99.9% of the threats hidden on the Internet. Thanks to their behavioral and heuristic analysis systems, these programs can even detect and block viruses that have not yet been registered in the databases. However, not all of them do it with the same precision. And this is why “false positives” can become a problem.

Although antivirus vendors share their databases, their algorithms are private. Some have very complex algorithms capable of protecting us precisely and efficiently from unknown threats. Others opt for less refined algorithms which, in order to detect these threats, have to accept a wider margin of error, and that is what leads to false positives.
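To make this trade-off concrete, here is a minimal sketch in Python. It is not how any real antivirus engine works: the file names, the "suspicion scores" and the thresholds are all invented for illustration. It assumes a heuristic that gives each file a score between 0 and 1 and blocks anything at or above a threshold.

```python
# Hypothetical suspicion scores (0.0 = clearly harmless, 1.0 = clearly malicious).
# Every name and value here is invented for illustration only.
SAMPLES = [
    # (file name, heuristic score, is it really malicious?)
    ("known_trojan.exe", 0.95, True),
    ("new_ransomware.exe", 0.60, True),
    ("obfuscated_dropper.js", 0.45, True),
    ("game_installer.exe", 0.50, False),
    ("system_tweaker.exe", 0.40, False),
    ("text_editor.exe", 0.10, False),
]


def evaluate(threshold):
    """Return (threats_blocked, false_positives) for a given blocking threshold."""
    threats_blocked = 0
    false_positives = 0
    for _name, score, is_malicious in SAMPLES:
        if score >= threshold:  # the toy engine blocks this file
            if is_malicious:
                threats_blocked += 1
            else:
                false_positives += 1  # a safe file was blocked by mistake
    return threats_blocked, false_positives


if __name__ == "__main__":
    # Lowering the threshold catches more threats but blocks more safe files.
    for threshold in (0.7, 0.55, 0.4):
        blocked, fps = evaluate(threshold)
        print(f"threshold={threshold}: {blocked}/3 threats blocked, "
              f"{fps} false positives")
```

In this toy data set, the strictest threshold blocks no safe files but misses two of the three threats, while the loosest one catches every threat at the cost of blocking two safe programs. A more refined scoring algorithm would separate the two groups better, so it would not need to make that sacrifice.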

As its name suggests, a false positive is a reliable, safe file or website that the antivirus mistakenly detects as a threat and blocks. Although an antivirus that does this still fulfills its goal of protecting us, doing so with a very high rate of false positives can be dangerous and is therefore not recommended.

Security companies do not usually publish data on the efficiency of their engines or the error rate of their algorithms (obviously). However, thanks to platforms like AV Comparatives, we can get an idea of how well these security programs protect us. And if what we are looking for is a safe and reliable antivirus that does not give us problems with false positives, these are the ones we must avoid.

If you don’t want false positives, avoid AVG, Panda, Avast, and Malwarebytes

The AV Comparatives platform tests the main antivirus programs on the market so that users can see how they perform and choose the best security program for their PC. All of them are tested on identical computers and environments, with the same software and the same settings. This makes the data as realistic and comparable as possible.

This platform tests antivirus programs in two different environments. The first is the laboratory, where the testers use their own collection of viruses and threats and check how many of them each antivirus is able to detect. This is where most programs achieve their best detection rates and a very low number of false positives, as the algorithms are optimized for this purpose. However, in real-world tests, things change.

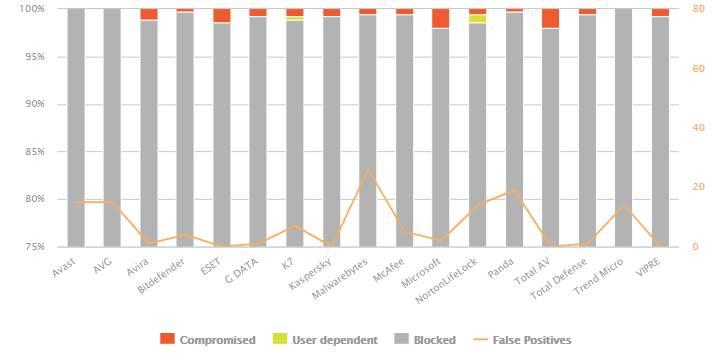

The AV Comparatives Real World Protection Test is the most complete and complex test we can find for measuring the security of an antivirus. It is carried out by visiting dangerous websites that are currently online, and by downloading files that are clearly threats (for example, the kind of attachments that arrive by email in the spam folder). The latest Real World tests, which we can consult here, were carried out throughout February and March 2021. And, as we can see, they give a much more realistic picture of the protection these antivirus programs offer.

Looking at the graph above, what stands out is the large number of false positives that some antivirus programs produce. For example, Avast and AVG are two of the programs that generated the most false positives, with 15. Only Panda, with 19, and Malwarebytes, with 26, rank above them, although we leave Malwarebytes aside because it focuses on a different type of protection.

Other antivirus programs with a significant number of false positives were K7, McAfee, Norton, and Trend Micro, all of them above 5 false detections.

Do false positives make an antivirus bad?

Not necessarily. In fact, if we look at the graph, we can see that the antivirus programs with the most false positives are generally the ones that offer the highest protection rate. This is not optimal, since distrusting everything by default is a bit like protecting ourselves from viruses by turning off and unplugging the computer. But it does help us avoid threats.

Antivirus programs with a lower rate of false positives, such as Windows Defender, Avira, Kaspersky or Eset, have let through up to 2% of all the threats analyzed. This is the price of using much less strict algorithms: by not raising a false positive, they chose to trust a file, and in doing so put users at risk.