Currently, consumer graphics cards use GDDR6 and GDDR6X memory, chosen for their balance of performance and cost. These are not the only memory options for graphics cards, however, as products like the AMD Radeon Vega series show. SK Hynix has now announced a significant advance in graphics memory with its HBM3E modules.

HBM (High Bandwidth Memory) may be unfamiliar, especially to those mainly interested in gaming graphics. HBM stands out by providing notably higher data transfer rates than the more common GDDR memory.

This extra bandwidth improves performance in specific use cases. However, HBM has several drawbacks. Foremost is cost: HBM modules are considerably more expensive, sometimes as much as 20 times the price of their GDDR counterparts. In addition, the memory stacks sit alongside the GPU in the same package, which produces an uneven surface and can complicate heat dissipation.

Introducing the Next Wave of High Bandwidth Memory

SK Hynix has announced that its HBM3E modules are ready for production, with samples already shipped to customers. The new chips reach a data transfer rate of 9 GT/s, roughly a 40% improvement over the previous HBM3 generation.
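The 40% figure can be checked with a quick calculation. The sketch below assumes a baseline of 6.4 GT/s for HBM3, which is the top data rate in the HBM3 specification; the article itself does not state the baseline, and the function name is purely illustrative.

```python
def percent_gain(new_rate: float, old_rate: float) -> float:
    """Relative improvement of new_rate over old_rate, in percent."""
    return (new_rate / old_rate - 1) * 100


HBM3_RATE_GT_S = 6.4   # assumption: HBM3 baseline, not given in the article
HBM3E_RATE_GT_S = 9.0  # SK Hynix HBM3E figure quoted in the article

print(f"HBM3E vs HBM3: {percent_gain(HBM3E_RATE_GT_S, HBM3_RATE_GT_S):.1f}% faster")
```

Under that assumption the gain comes out at a little over 40%, in line with the quoted improvement.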

Full-scale manufacturing is scheduled to begin in the first half of next year. The announcement does not state the capacities of the new memory chips, nor does it say when they will be available to assembly partners.

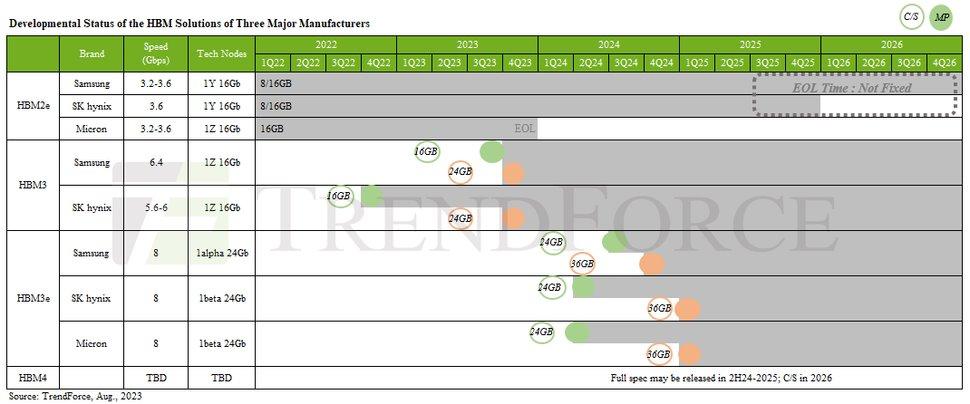

Market research firm TrendForce has offered some projections. According to its analysis, SK Hynix intends to produce 24 GB HBM3E chips in the first quarter of 2024, with 36 GB parts to follow in the first quarter of 2025.

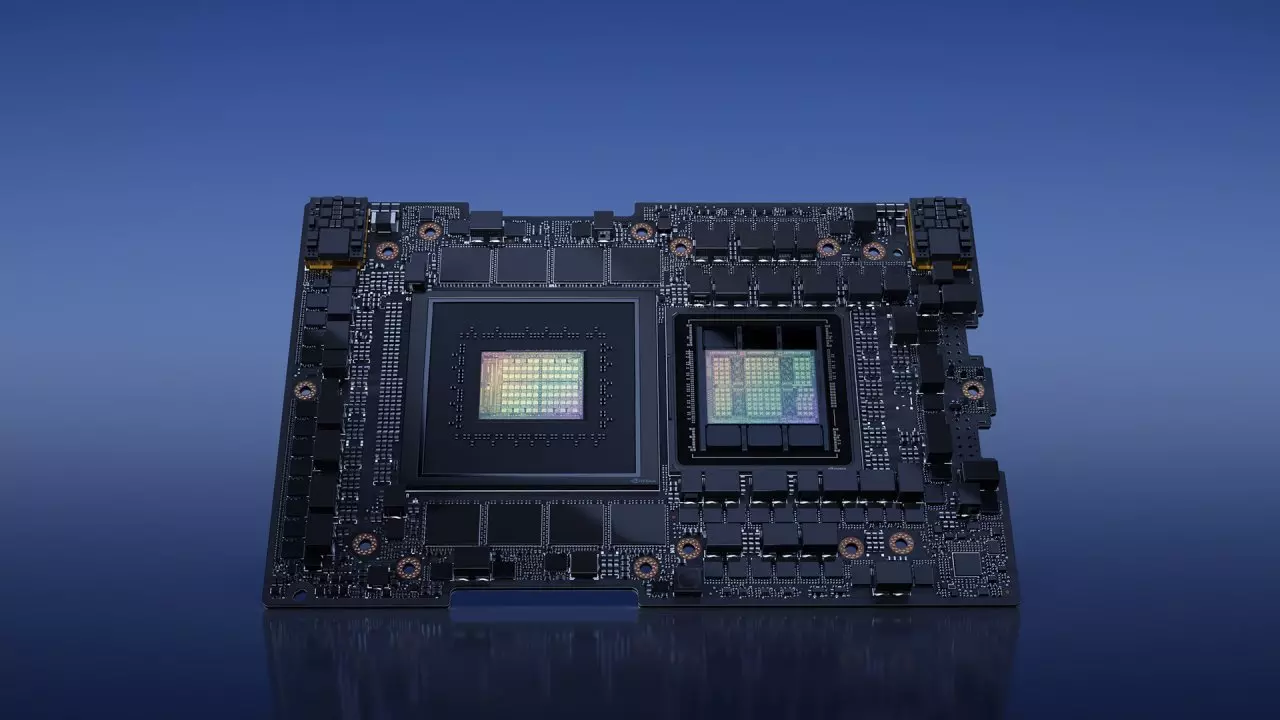

This forecast lines up with other market expectations. NVIDIA is expected to launch its Grace Hopper GH200 solutions, aimed at AI and high-performance computing, in Q2 2024. These GH200 solutions might carry 141 GB of SK Hynix HBM3E memory, though this remains speculation.

SK Hynix’s HBM3E memories, while impressive, are not currently the fastest on the market. They run at a data rate of 9 GT/s, slightly behind Micron’s memory at 9.2 GT/s. Micron’s solutions deliver a bandwidth of 1.2 TB/s per stack, whereas SK Hynix’s achieve 1.15 TB/s.
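The link between the per-pin data rate and the per-stack bandwidth quoted above is simple arithmetic. The sketch below assumes a 1024-bit data interface per stack, as used by HBM generations to date; the article does not state the interface width, and the names are illustrative only.

```python
# Assumption: each HBM stack exposes a 1024-bit data interface.
HBM_BUS_WIDTH_BITS = 1024


def stack_bandwidth_gb_s(data_rate_gt_s: float,
                         bus_width_bits: int = HBM_BUS_WIDTH_BITS) -> float:
    """Peak per-stack bandwidth in GB/s from a per-pin data rate in GT/s."""
    # transfers per second * bits per transfer / 8 bits per byte
    return data_rate_gt_s * bus_width_bits / 8


for vendor, rate in (("SK Hynix", 9.0), ("Micron", 9.2)):
    print(f"{vendor}: {stack_bandwidth_gb_s(rate) / 1000:.2f} TB/s per stack")
```

With these inputs the result is about 1.15 TB/s for SK Hynix and about 1.18 TB/s for Micron, consistent with the rounded 1.2 TB/s figure.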

Built for AI

SK Hynix has not disclosed the exact specifications of the new memory modules. It has, however, highlighted its use of Advanced Mass Reflow Molded Underfill (MR-MUF) technology. This technique narrows the gaps between the memory dies within an HBM stack and improves heat dissipation by 10%. It also allows a 12-Hi HBM configuration to keep the same Z height as an 8-Hi HBM module.

One curious aspect of HBM3E is that it has not yet been formally standardized by JEDEC, the industry body that defines memory specifications. Memory components customarily adhere to JEDEC standards.

The accelerated pace may be driven by market demand, particularly the surge in AI and high-performance computing applications. It is conceivable that mass production is starting before the standard is officially published, possibly for the first time. That would be a departure from the usual sequence, in which mass production follows standardization.