When measuring the performance of RAM and VRAM, we usually talk about two parameters: bandwidth and latency. But how are the two related, and can either of them be treated as constant?

One problem with technical specifications is that they tend to quote figures for peak performance under perfect conditions. Real memory never behaves this way: not every access sees the same latency, and the achieved bandwidth never reaches 100% of the theoretical figure.

More bandwidth doesn’t mean less latency

The latency between a processing unit and its associated memory is the time it takes to receive a requested piece of data, or to receive confirmation that a change has been made in memory. Latency, then, is a measure of time.

Bandwidth, in contrast, is the amount of data transmitted per second, so it is a rate. By simple logic we might conclude that the higher this rate, the less time the CPU, GPU or any other processing unit needs to get the data it is looking for.

In reality this is not the case. What is more, a memory with more bandwidth usually has higher latency than memories with less. There is an explanation for this, which we cover in the following sections of this article.
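To see why more bandwidth does not automatically mean faster access, here is a minimal sketch. It assumes the time to receive a block of data is a fixed latency plus the transfer time at a given bandwidth. The function name and the figures for the two memories are hypothetical and chosen only for illustration.

```python
def fetch_time_ns(size_bytes, latency_ns, bandwidth_gb_s):
    """Time to receive size_bytes: fixed latency plus transfer time."""
    # 1 GB/s is 1 byte per nanosecond, so bytes / (GB/s) gives nanoseconds.
    return latency_ns + size_bytes / bandwidth_gb_s


CACHE_LINE = 64  # bytes, a common cache-line size

# Hypothetical memories: B has twice the bandwidth of A but more latency.
mem_a = fetch_time_ns(CACHE_LINE, latency_ns=80.0, bandwidth_gb_s=50.0)
mem_b = fetch_time_ns(CACHE_LINE, latency_ns=100.0, bandwidth_gb_s=100.0)

print(f"A: {mem_a:.2f} ns, B: {mem_b:.2f} ns")
```

With these assumed numbers, the single cache line arrives sooner from memory A, because the transfer itself takes about a nanosecond and latency dominates. The extra bandwidth of B only pays off when a large amount of data is moved in one go.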

Searching for data adds latency

Almost all processing units today have a hierarchy of caches, and the processor queries each level in turn before accessing RAM. This is because the latency of going directly from the processor to RAM is large enough to cause a noticeable loss of performance compared with an ideal processor that never waits for data.

Imagine that you are looking for a specific product. First you look in the local store, then in a slightly larger store and finally in a department store. The visit to each establishment is not instantaneous, since it requires travel time. The same thing happens in the cache hierarchy: each time the data is not found at a level, we have a “cache miss” and the search moves on to the next level. We can summarize the time as follows:

Search time = search time in the first cache + cache miss period + … + search time in the last cache.

If the total cache lookup time is longer than the time it takes to go straight to main RAM, then the cache system is poorly designed, as it defeats the purpose for which the cache was created.
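The search-time idea above can be turned into an average by weighting each level with the fraction of requests that actually reach it. This is a minimal sketch: the function name, the lookup times and the hit rates are all assumptions for illustration, not measurements of any real processor.

```python
def average_access_time_ns(levels, ram_latency_ns):
    """Average time to get data through a cache hierarchy.

    levels: list of (lookup_ns, hit_rate) pairs, first cache to last cache.
    """
    total = 0.0
    reach_prob = 1.0  # fraction of requests that get this far down
    for lookup_ns, hit_rate in levels:
        # Every request that reaches this level pays its lookup time.
        total += reach_prob * lookup_ns
        # Only the misses continue to the next level.
        reach_prob *= 1.0 - hit_rate
    # Requests that missed in every cache also pay the RAM latency.
    return total + reach_prob * ram_latency_ns


# Hypothetical three-level hierarchy: (lookup time in ns, hit rate).
caches = [(1.0, 0.90), (4.0, 0.80), (15.0, 0.50)]
print(average_access_time_ns(caches, ram_latency_ns=80.0))
```

Under these assumed figures the average stays far below the RAM latency, which is the whole point of the hierarchy. If the hit rates were close to zero, the result would exceed the RAM latency and the caches would only add delay.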

The latency problem is more complex still, because to the access time added by the cache search we have to add the latency of fetching the data from RAM when it is not found in any cache. What problems can we run into? For example, all memory channels may be busy, which creates contention: the new request has to wait because the RAM channels are already occupied receiving or delivering other data.

How does latency affect bandwidth?

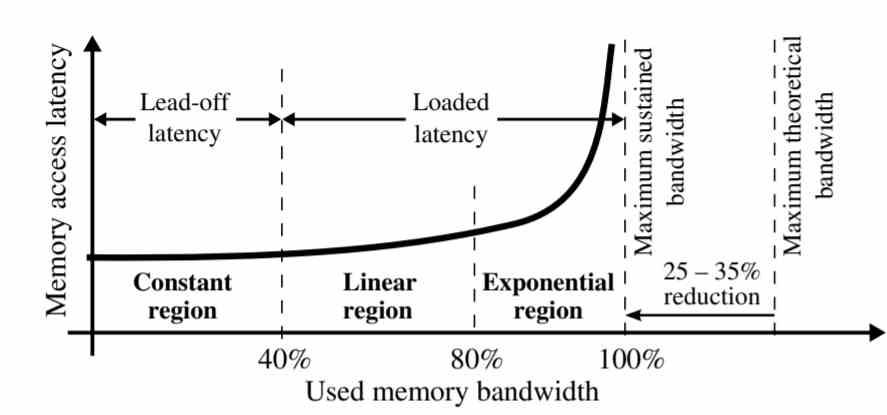

As the graph shows, latency is not the same at every level of bandwidth usage.

- Constant region: Latency remains constant up to 40% of the sustained bandwidth.

- Linear region: Between 40 and 80% of the sustained bandwidth, latency increases linearly. This happens because memory requests start to pile up behind one another due to contention.

- Exponential region: In the last 20% of the bandwidth range, latency grows exponentially. All the memory requests that could not be resolved earlier accumulate here and contend with one another.
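The three regions can be sketched as a piecewise function of bandwidth utilization. Only the 40% and 80% boundaries come from the description above. The idle latency, the slope and the growth factor are hypothetical parameters picked to make the curve continuous, not values read from the graph.

```python
import math


def loaded_latency_ns(utilization, idle_ns=80.0, slope_ns=100.0, growth=15.0):
    """Illustrative latency as a function of bandwidth utilization (0.0 to 1.0)."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0.0 and 1.0")
    if utilization <= 0.4:
        # Constant region: requests rarely wait for one another.
        return idle_ns
    if utilization <= 0.8:
        # Linear region: queuing delay grows in proportion to the load.
        return idle_ns + slope_ns * (utilization - 0.4)
    # Exponential region: start from the latency reached at 80% and blow up.
    latency_at_80 = idle_ns + slope_ns * 0.4
    return latency_at_80 * math.exp(growth * (utilization - 0.8))


for u in (0.2, 0.4, 0.6, 0.8, 0.9, 1.0):
    print(f"{u:.0%}: {loaded_latency_ns(u):.0f} ns")
```

The exact shape on real hardware depends on the memory controller and the access pattern, so this should be read as a picture of the trend and not as a predictive model.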

This phenomenon has a simple explanation. The memory requests answered first are those whose data is found first: most are served from the cache when it holds a copy, while those that miss in the cache accumulate waiting for RAM. One of the differences between caches and RAM is that caches can serve several accesses simultaneously, whereas a request that has to search for its data in RAM sees a much higher latency.

We tend to imagine RAM as a torrent of water in which data never stops flowing at the specified speed, when in fact RAM does not move any data unless it receives a request. In other words, latency affects throughput, and therefore the bandwidth that is actually achieved.
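One way to put numbers on this is Little's law: the data in flight equals throughput multiplied by latency, so with a fixed number of outstanding requests the achievable bandwidth falls as latency rises. The sketch below assumes a processor that keeps a fixed number of 64-byte requests in flight. The function name and all figures are illustrative.

```python
def sustained_bandwidth_gb_s(outstanding_requests, request_bytes, latency_ns):
    """Bandwidth reachable with a fixed number of requests in flight."""
    # Bytes in flight divided by the time each request takes.
    # Bytes per nanosecond is the same unit as GB/s.
    return outstanding_requests * request_bytes / latency_ns


# Hypothetical core with 10 outstanding 64-byte requests.
print(sustained_bandwidth_gb_s(10, 64, latency_ns=80.0))   # unloaded latency
print(sustained_bandwidth_gb_s(10, 64, latency_ns=160.0))  # latency doubled by contention
```

Doubling the latency halves the bandwidth this requester can draw, no matter how fast the memory is on paper.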

Ways to reduce latency

Now that we know that contention for access to data creates latency, and that this affects bandwidth, we have to think about solutions. The most obvious one is to increase the number of memory channels to RAM. This is one of the reasons HBM memory has lower access latency than GDDR6: the 8 memory channels of an HBM stack cause less contention than the 2 channels of a GDDR6 chip.
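The effect of adding channels can be sketched with a basic queueing model. It assumes requests are spread evenly over the channels and that each channel behaves like an independent M/M/1 queue, which real memory controllers do not strictly follow. The request rate and service time are hypothetical.

```python
import math


def queue_wait_ns(requests_per_ns, service_ns, channels):
    """Mean time a request waits in line before its channel serves it."""
    # Fraction of the time each channel is busy under an even split.
    utilization = requests_per_ns * service_ns / channels
    if utilization >= 1.0:
        return math.inf  # the channels cannot keep up and the queue grows forever
    # M/M/1 mean waiting time in queue: service * rho / (1 - rho).
    return service_ns * utilization / (1.0 - utilization)


# Same hypothetical load on 2 channels and on 8 channels.
for channels in (2, 8):
    wait = queue_wait_ns(requests_per_ns=0.03, service_ns=50.0, channels=channels)
    print(f"{channels} channels: {wait:.1f} ns waiting")
```

In this simplified model the same load that keeps 2 channels busy 75% of the time leaves 8 channels mostly idle, so the waiting time caused by contention drops sharply.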

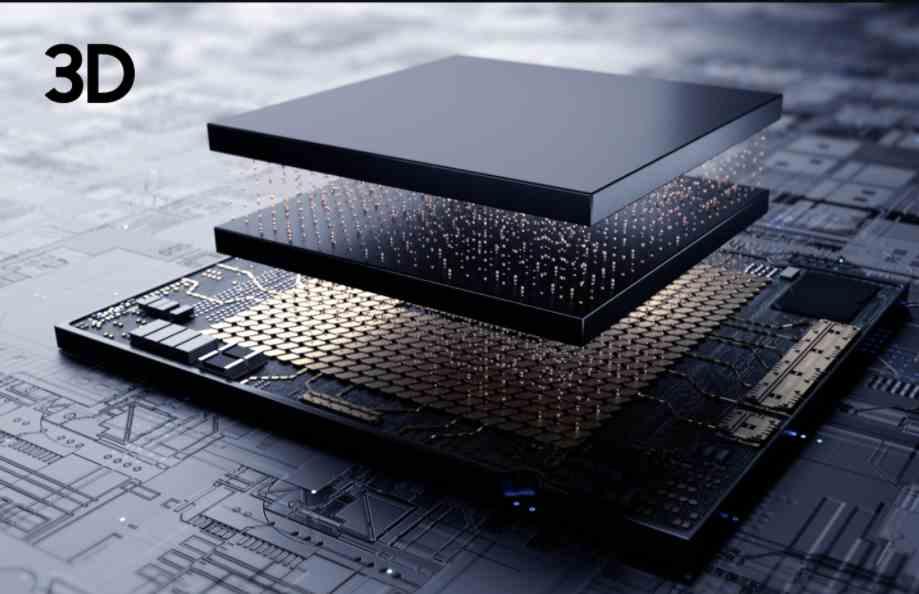

The best way to reduce latency would be to place memory as close to the processor as a cache, but it is impossible to build RAM that close with enough storage capacity to be fully functional. We can stack a memory chip on the processor and connect it via TSV, but with the memory that close its clock speed would have to be lowered to avoid thermal throttling, and the effective bandwidth would drop with it.

Even so, because of the proximity between memory and processor, the effect of latency on the achieved bandwidth would be much lower in this case. The trade-off of implementing a CPU or GPU with 3DIC? It would double the cost of the PC, and the more complex manufacturing process would mean fewer units reaching the market, ergo more scarcity and even higher prices.