Graphics chips, known today as GPUs, have had an interesting evolution, from their beginnings in the first PCs to the ultra-complex behemoths we have today. In this article we review that evolution over time.

Graphics cards, or GPUs as they are known today, are the most widely used PC component that is not part of the von Neumann design, which has driven their spectacular evolution to this day.

What is a GPU?

A graphics card is a key component of a computer: the computer uses it to communicate with the user through a screen, on which it generates images that show the current state of the machine at all times. The main components of a graphics card are the graphics chip and the video memory, or VRAM.

This has been the case from the first graphics cards until today. Graphics chips and VRAM have become more complex and more powerful, but the basic configuration has not changed.

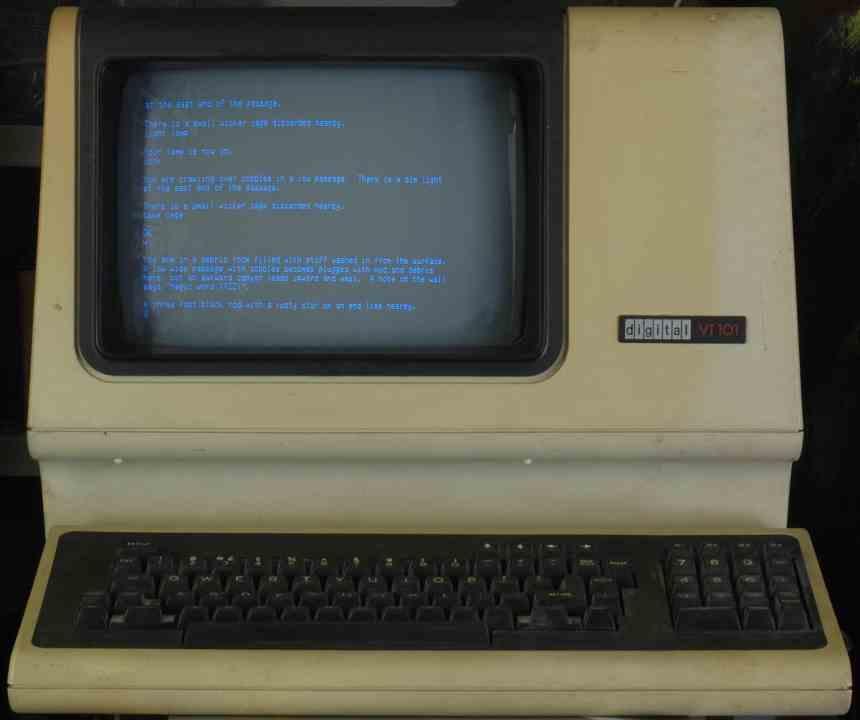

First generation of graphics cards: terminals and image buffers

The first generation grew out of text terminals, in which a television without a radio-frequency receiver was used to display the text being typed. These terminals were called TV Typewriters, since to a person of that era that is exactly what they were: a typewriter on a television.

Their operation was very simple. Part of the RAM stored the sequence of characters being typed. Each character had its glyph stored as a bitmap in a ROM, and instead of holding the bitmaps themselves, the RAM held character codes that told the ROM which glyph to send to the video output. A series of binary counters tracked the horizontal and vertical scanning position to select the right character and the right row of its glyph.
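
The character-generator scheme described above can be sketched in a few lines of Python. This is only an illustration under assumptions: the 8x8 glyph size, the contents of `GLYPH_ROM`, and the names `text_ram` and `scan_out` are hypothetical and do not describe any particular terminal.

```python
# Hypothetical glyph ROM: 8 rows per glyph, one byte per row,
# most significant bit = leftmost pixel.
GLYPH_ROM = {
    ord(" "): [0x00] * 8,
    ord("H"): [0x66, 0x66, 0x66, 0x7E, 0x66, 0x66, 0x66, 0x00],
    ord("I"): [0x3C, 0x18, 0x18, 0x18, 0x18, 0x18, 0x3C, 0x00],
}

COLS, ROWS = 4, 1          # assumed (tiny) text screen size
GLYPH_W, GLYPH_H = 8, 8    # assumed glyph size


def scan_out(text_ram):
    """Produce the picture line by line, the way the scan counters would."""
    lines = []
    for y in range(ROWS * GLYPH_H):                  # vertical counter
        char_row, glyph_row = divmod(y, GLYPH_H)
        line = []
        for x in range(COLS * GLYPH_W):              # horizontal counter
            char_col, glyph_col = divmod(x, GLYPH_W)
            code = text_ram[char_row * COLS + char_col]  # RAM: which character
            bits = GLYPH_ROM[code][glyph_row]            # ROM: one row of its glyph
            pixel = (bits >> (GLYPH_W - 1 - glyph_col)) & 1
            line.append("#" if pixel else ".")
        lines.append("".join(line))
    return lines


# The RAM only holds character codes, never the bitmaps themselves.
text_ram = [ord(c) for c in "HI  "]
frame = scan_out(text_ram)
print("\n".join(frame))
```

Note that the RAM cost is one byte per character cell, which is why this approach suited machines with very little memory.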

In the same way that the hardware could select characters, it could select colors, so some of these primitive graphics chips could draw images on the screen, limited by the available memory and the color palette. Over time they gained more colors and more pixels, but they remained very primitive devices with which little could be done.

Second evolution of GPUs: sprites

Unfortunately the second generation did not reach the PC, since in the early 1980s IBM had not designed it with video games in mind. The concept behind the second generation? Movable objects, or sprites: glyphs or bitmaps that can be placed at any position in the image.

In the early 1980s, most of the 8-bit systems people had at home used a sprite system, since most of their software was video games. Sprite systems keep an attribute table with an entry for each sprite that indicates its priority, whether it is flipped, the color palette it uses and its position.
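
A sprite attribute table and the way the hardware composes sprites over a background can be sketched as follows. This is a software illustration only; the `Sprite` fields, the `compose` function and the convention that palette index 0 is transparent are assumptions made for the example, not the layout of any specific chip.

```python
from dataclasses import dataclass


@dataclass
class Sprite:
    """One hypothetical entry of a sprite attribute table."""
    x: int
    y: int
    pattern: list      # rows of palette indices; 0 is treated as transparent
    palette: int       # which palette this sprite uses
    priority: int      # higher priority is drawn on top
    flip_h: bool = False


def compose(background, sprites, palettes):
    """Draw every sprite over a copy of the background, lowest priority first."""
    frame = [row[:] for row in background]
    for s in sorted(sprites, key=lambda s: s.priority):
        for dy, row in enumerate(s.pattern):
            if s.flip_h:
                row = row[::-1]            # horizontal flip is just a reversed row
            for dx, idx in enumerate(row):
                px, py = s.x + dx, s.y + dy
                inside = 0 <= py < len(frame) and 0 <= px < len(frame[0])
                if idx and inside:
                    frame[py][px] = palettes[s.palette][idx]
    return frame


background = [["."] * 4 for _ in range(4)]
palettes = [[None, "a", "b"], [None, "x", "y"]]
pattern = [[1, 2], [0, 1]]
sprites = [
    Sprite(x=0, y=0, pattern=pattern, palette=0, priority=0),
    Sprite(x=1, y=0, pattern=pattern, palette=1, priority=1, flip_h=True),
]
frame = compose(background, sprites, palettes)
print("\n".join("".join(row) for row in frame))
```

Moving a sprite only means changing `x` and `y` in its table entry; the bitmap itself is never copied, which is what made the technique cheap for games.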

This system was used in 8-bit and 16-bit consoles for almost 15 years before they made the jump to 32 bits. On the PC, on the other hand, it never appeared, which heavily shaped the games released for the platform for many years.

Third evolution of GPUs: display lists and the Blitter

Display lists first appeared on home hardware through the ANTIC chip of the Atari 800 computer. A display list is nothing more than a series of commands that tell the graphics chip how to draw the scene. Until then, the approach had been to copy a block of data into video memory and have the hardware interpret it.
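
To make the idea of a display list concrete, here is a toy interpreter. The opcodes `BLANK`, `LINE`, `REPEAT` and `END` are invented for this sketch and are not ANTIC's real instruction set; the point is only that the chip walks a list of commands and builds the picture from them.

```python
def run_display_list(display_list, memory, width):
    """Interpret a toy display list and return the resulting scanlines."""
    scanlines = []
    for op, *args in display_list:
        if op == "BLANK":                  # emit N empty scanlines
            (count,) = args
            scanlines.extend([0] * width for _ in range(count))
        elif op == "LINE":                 # emit one scanline read from memory
            (addr,) = args
            scanlines.append(memory[addr:addr + width])
        elif op == "REPEAT":               # emit the same memory row N times
            addr, count = args
            scanlines.extend(memory[addr:addr + width] for _ in range(count))
        elif op == "END":                  # stop processing the list
            break
        else:
            raise ValueError(f"unknown display list command: {op}")
    return scanlines


memory = list(range(16))                   # stand-in for video memory
display_list = [
    ("BLANK", 2),
    ("LINE", 0),
    ("REPEAT", 8, 2),
    ("END",),
]
frame = run_display_list(display_list, memory, width=4)
for line in frame:
    print(line)
```

Because the picture is described by commands rather than by a fixed memory layout, changing a few entries in the list is enough to rearrange the screen.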

But they reached their peak in the Commodore Amiga, whose Agnus graphics chip included an evolution of the ANTIC called the Copper and a unit called the Blitter, which completely changed how graphics were handled. The function of the Blitter? Copying data from one area of memory to another while modifying it on the fly, so that what reached the destination could differ from the source, and all without involving the CPU.
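
The "copy while modifying on the fly" behavior can be illustrated with a small software model. It is loosely inspired by the way the Amiga Blitter combines up to three sources with a programmable logic function; the function name `blit`, the 16-bit word size and the bit ordering of the `minterm` argument are conventions chosen for this sketch and should be treated as assumptions.

```python
def blit(a, b, c, minterm, word_bits=16):
    """Combine three source word lists into a destination list.

    `minterm` is an 8-bit truth table: bit number (A*4 + B*2 + C) says
    whether the output bit is 1 for that combination of input bits.
    """
    mask = (1 << word_bits) - 1
    out = []
    for wa, wb, wc in zip(a, b, c):
        d = 0
        for idx in range(8):
            if not (minterm >> idx) & 1:
                continue
            # Use each source as-is or inverted, depending on the truth-table row.
            ta = wa if idx & 4 else ~wa
            tb = wb if idx & 2 else ~wb
            tc = wc if idx & 1 else ~wc
            d |= ta & tb & tc
        out.append(d & mask)
    return out


# Plain copy: output equals A regardless of B and C.
copied = blit([0x1234], [0x0000], [0x0000], minterm=0xF0)

# Masked copy: where the mask A is 1 take B, elsewhere keep the background C.
masked = blit([0xFF00], [0xAAAA], [0x5555], minterm=0xCA)
print(hex(copied[0]), hex(masked[0]))
```

The masked copy is the kind of operation that lets a shape be drawn over a background without the CPU touching each word.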

Before the Blitter appeared, the CPU could only work on graphics data during the time in which the screen was not being drawn. With the Blitter, the CPU was relieved of that work, and the Blitter's ability to manipulate graphics data on the fly brought a leap in quality to personal computers.

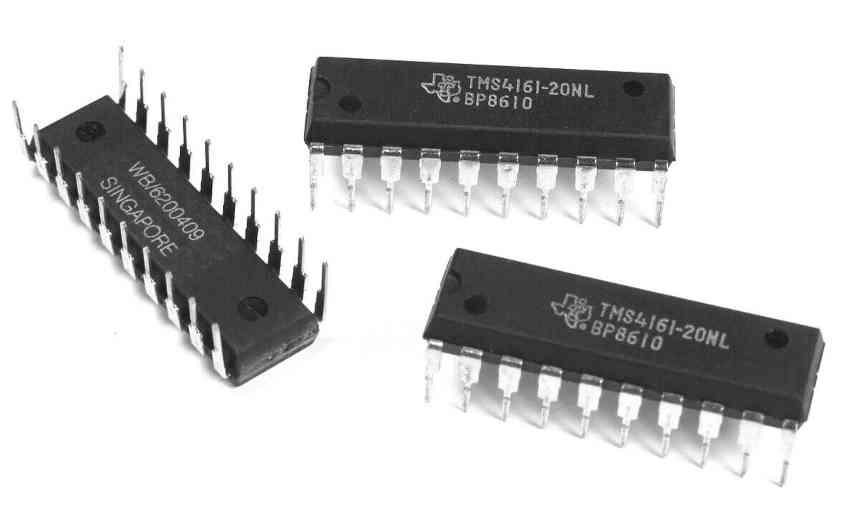

Fourth evolution of GPUs: multiport memory

Although the CPU was now free, graphics chips still had a problem: they could not access the RAM to manipulate data while the screen was being generated. The next evolution was therefore multiport memory, that is, memory with more than one access channel. With it, the display adapter and the graphics chip can access memory at the same time without one having to wait for the other.

This brings us to the end of the evolution of 2D cards. From that point on, their progress was not architectural but a matter of specifications: the ability to draw more pixels per screen and with greater color precision.

The emergence of 3D hardware on PC

The first 3D cards did not include the 2D elements. Instead they introduced two hardware elements that were completely new with respect to 2D hardware:

- Texture units: these can take a specific part of an image stored in memory and manipulate it. A texture unit is capable of taking that part of the image and rotating it, resizing it and even fitting it onto a 3D surface. Texture units were the last element to appear on 3D workstations, but on PCs they were the first piece of hardware adopted as 3D graphics took hold.

- Raster units: these are responsible for converting vector-based 3D data into 2D Cartesian data that can be displayed on the screen. This process was a heavy burden for the CPUs of the time, and moving it into hardware allowed 3D software to reach homes without the need for a workstation.
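
As an illustration of what a raster unit does, the sketch below decides which pixels a triangle covers once its vertices have already been projected to 2D screen coordinates. It uses edge functions, one common way to do this in software; the function names and the choice to sample at pixel centers are assumptions of the example, not a description of the first 3D cards.

```python
def edge(ax, ay, bx, by, px, py):
    """Signed area test: which side of the edge A->B the point P lies on."""
    return (bx - ax) * (py - ay) - (by - ay) * (px - ax)


def rasterize(tri, width, height):
    """Return the (x, y) pixels whose centers fall inside a 2D triangle."""
    (x0, y0), (x1, y1), (x2, y2) = tri
    area = edge(x0, y0, x1, y1, x2, y2)
    covered = []
    if area == 0:                      # degenerate triangle covers nothing
        return covered
    for y in range(height):
        for x in range(width):
            px, py = x + 0.5, y + 0.5  # sample at the pixel center
            w0 = edge(x1, y1, x2, y2, px, py)
            w1 = edge(x2, y2, x0, y0, px, py)
            w2 = edge(x0, y0, x1, y1, px, py)
            # Inside when all three tests agree with the triangle's winding.
            if all(w * area >= 0 for w in (w0, w1, w2)):
                covered.append((x, y))
    return covered


triangle = [(0, 0), (4, 0), (0, 4)]    # already projected to screen space
pixels = rasterize(triangle, width=4, height=4)
grid = [["#" if (x, y) in pixels else "." for x in range(4)] for y in range(4)]
print("\n".join("".join(row) for row in grid))
```

Every pixel is tested independently of the others, which is part of why this work maps so well onto dedicated parallel hardware.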

The first 3D cards were an extra cost and did not achieve the expected success despite their adoption in the PC video game market. The reason was that they required a separate 2D card, but it did not take long for the two pieces of hardware to be integrated into one.

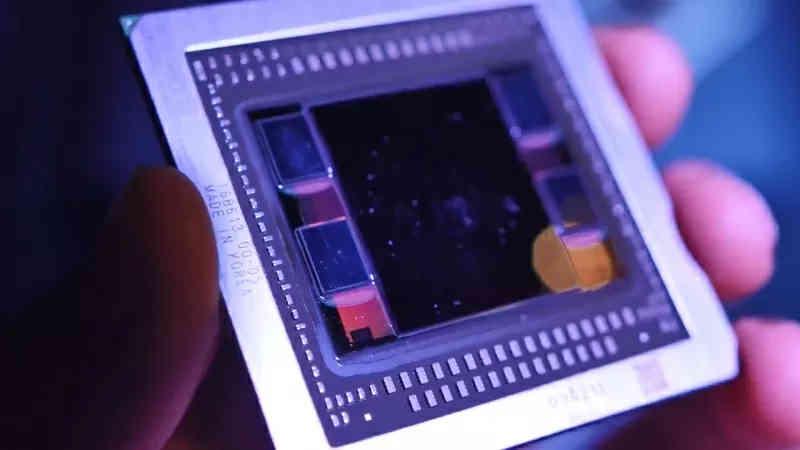

The GPU reaches its final form

The NVIDIA GeForce 256 was the first GPU to have the full 3D pipeline. This meant that the entire 3D scene was calculated by the GPU and the CPU only had to create the display list. It was an important change, and every later development has built on it. In summary, those developments have been the following:

- Shader units: these are processors based on multithreaded execution, which lets them run programs that at first modified graphics primitives and, over time, more generic data. Their evolution took GPUs into scientific computing. Initially they were separated by the type of graphics primitive they handled, but they were later unified and have remained so ever since.

- Accelerators: video codecs, display adapters and other hardware were integrated into GPUs during this period and ceased to be separate chips.

- Systolic arrays for the execution of artificial intelligence algorithms.

- Units to speed up the execution of ray tracing.

Although we have seen generation after generation of GPUs over the last twenty years, at the architectural level, if we set aside the new units for AI and ray tracing, what we have really seen is an evolution toward more and more units built on a common architecture.