Three-dimensional graphics have been with us for two decades, and so have the GPUs in charge of generating them and sending them to our monitors. Today GPUs are extremely complex chips made up of many elements, some new and others that have been there for years. The one we will cover in this article is the Geometry Engine.

As real-time graphics advance, so do GPUs. New problems appear and old ones grow larger, which means old solutions stop being efficient and new ones have to be created. The next generation of graphics will rely not only on Ray Tracing but also on a considerable increase in scene geometry. This is where the Geometry Engine comes in: an essential piece for improving GPU efficiency in this new paradigm.

A bit of history to clear up confusion

Historically, the term Geometry Engine referred to the hardware in charge of manipulating scene geometry before rasterization, that is, before the triangles that make up the three-dimensional scene are converted into pixel fragments.

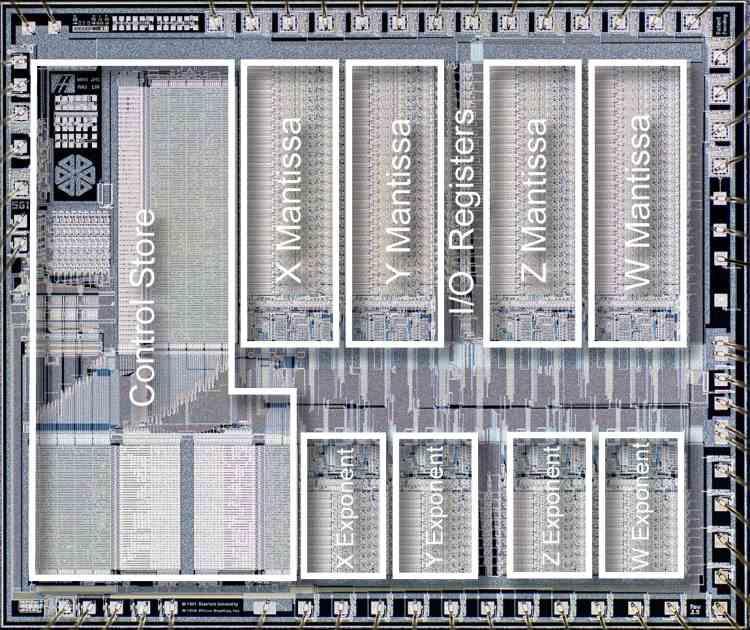

The first Geometry Engine was the brainchild of Jim Clark in the early 1980s, and it marked the beginning of the legendary Silicon Graphics. Thanks to this innovation, 3D scene manipulation could move from minicomputers to workstations that were a fraction of their size and cost.

3D games did not become popular until the late 1990s, and the first 3D cards lacked a Geometry Engine, relying on the CPU to calculate scene geometry. It was not until the PC launch of the first NVIDIA GeForce that the Geometry Engine was fully integrated into a graphics chip, under the name T&L (Transform and Lighting) coined by NVIDIA marketing.

Over the years, with the arrival of shaders, the Geometry Engine as a dedicated piece of hardware was replaced entirely, and its work is now done by the shader units. The change came with DirectX 10, when the hardware Geometry Engine disappeared from NVIDIA and AMD GPUs. Recently, however, the name has been taken up by a new type of unit included in the most advanced architectures, and that unit is what this article is about.

The Primitive Assembler

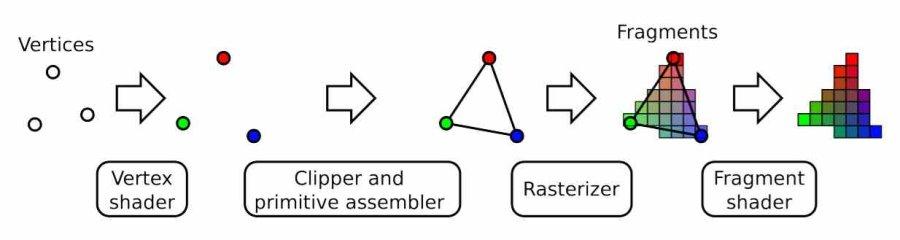

A three-dimensional game scene is rendered using triangles, which are made up of vertices, to compose its different elements. The first stage of the graphics pipeline after the command processor has read the display list is the Vertex Shader. It gets its name from the fact that it works on individual vertices, not on primitives or triangles.

A unit called the Primitive Assembler is in charge of joining these vertices, not only to form triangles but also to reconstruct the different models in the scene by connecting their vertices. The Primitive Assembler is a fixed-function unit present in all GPUs, and it does this automatically, without any code in the display list having to request it. Put simply, everything that comes out of the Vertex Shader stage of the 3D pipeline is sent to the Primitive Assembler, and from there to the next stage.
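To make the Primitive Assembler's job concrete, here is a minimal software model: it takes the vertices output by the Vertex Shader plus an index list and groups them into triangles. This is a sketch assuming the two most common topologies, triangle lists and triangle strips; the function names are hypothetical, and real GPUs do this in fixed-function logic rather than in code like this.

```python
from typing import List, Sequence, Tuple

# Hypothetical software model of primitive assembly; names are illustrative only.
Vertex = Tuple[float, float, float]
Triangle = Tuple[Vertex, Vertex, Vertex]


def assemble_triangle_list(vertices: Sequence[Vertex], indices: Sequence[int]) -> List[Triangle]:
    """Triangle-list topology: every three consecutive indices form one triangle."""
    if len(indices) % 3 != 0:
        raise ValueError("a triangle list needs a multiple of 3 indices")
    return [
        (vertices[indices[i]], vertices[indices[i + 1]], vertices[indices[i + 2]])
        for i in range(0, len(indices), 3)
    ]


def assemble_triangle_strip(vertices: Sequence[Vertex], indices: Sequence[int]) -> List[Triangle]:
    """Triangle-strip topology: each new index forms a triangle with the two before it.
    Every odd triangle swaps its first two vertices so all triangles keep the same winding."""
    triangles: List[Triangle] = []
    for i in range(len(indices) - 2):
        a, b, c = indices[i], indices[i + 1], indices[i + 2]
        if i % 2 == 1:
            a, b = b, a
        triangles.append((vertices[a], vertices[b], vertices[c]))
    return triangles
```

For a quad made of four vertices, the list `[0, 1, 2, 2, 1, 3]` and the strip `[0, 1, 2, 3]` produce the same two triangles; the strip simply reuses the shared vertices implicitly.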

If the GPU has a tessellation unit, which subdivides primitives into smaller ones by generating new vertices, the Primitive Assembler runs right after tessellation, since the new vertices have to be connected differently to form the elements of the scene.
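Why assembly has to happen after tessellation can be seen with a simple stand-in for a tessellator. Real hardware tessellators work on patches driven by tessellation factors; the sketch below assumes plain one-level midpoint subdivision instead, and the function names are hypothetical. The point it shows is that subdivision produces both new vertices and a brand-new index list, which is what the Primitive Assembler must then turn into triangles.

```python
from typing import Dict, List, Tuple

# Hypothetical stand-in for a tessellator: uniform midpoint subdivision.
Vertex = Tuple[float, float, float]


def midpoint(a: Vertex, b: Vertex) -> Vertex:
    return tuple((x + y) / 2 for x, y in zip(a, b))


def subdivide(vertices: List[Vertex], indices: List[int]) -> Tuple[List[Vertex], List[int]]:
    """Split every triangle of a triangle list into four, keeping the winding order.
    Midpoints of shared edges are cached so neighbouring triangles reuse the same vertex."""
    out_vertices = list(vertices)
    edge_cache: Dict[Tuple[int, int], int] = {}

    def edge_midpoint(i: int, j: int) -> int:
        key = (min(i, j), max(i, j))
        if key not in edge_cache:
            edge_cache[key] = len(out_vertices)
            out_vertices.append(midpoint(vertices[i], vertices[j]))
        return edge_cache[key]

    out_indices: List[int] = []
    for t in range(0, len(indices), 3):
        a, b, c = indices[t:t + 3]
        ab, bc, ca = edge_midpoint(a, b), edge_midpoint(b, c), edge_midpoint(c, a)
        # Three corner triangles plus the central one.
        out_indices += [a, ab, ca, ab, b, bc, ca, bc, c, ab, bc, ca]
    return out_vertices, out_indices
```

A single triangle becomes six vertices and twelve indices; the returned index list is a new triangle list that primitive assembly consumes from scratch.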

The Clipper and the removal of superfluous geometry

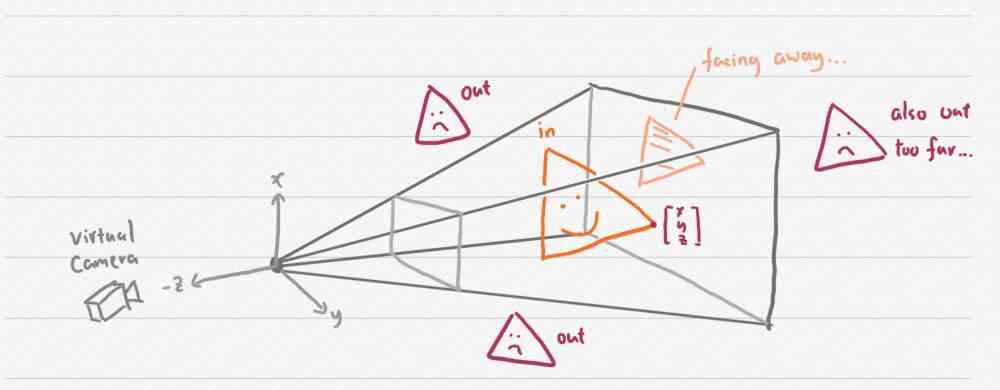

A three-dimensional scene contains a large number of graphics primitives, or triangles. However, not all of them are visible to the player in every frame, so all non-visible geometry has to be discarded before the rasterization stage. Any non-visible triangle that is not discarded will still be rasterized and textured, even if its pixels are thrown away later because another pixel with higher drawing priority covers them.

The reason? In the traditional 3D pipeline, a Vertex Shader runs for each vertex in the scene, but a Pixel Shader runs for each pixel, and a triangle usually covers an order of magnitude more pixels than it has vertices. That is why it is important to discard geometry that is not visible. Two algorithms are used for this:

- The first detects geometry outside the player’s field of view and is called View Frustum Culling.

- The second eliminates triangles that are inside the field of view but face away from the camera, and is called Back Face Culling. Geometry hidden behind other, closer elements is removed by a separate technique, occlusion culling.
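Both culling tests can be sketched per triangle. This is a minimal sketch under stated assumptions: the back-face test works on screen-space positions and assumes counter-clockwise triangles face the camera with the y axis pointing up; the frustum test works on clip-space positions and assumes the Direct3D convention (-w ≤ x, y ≤ w and 0 ≤ z ≤ w). Function names are hypothetical.

```python
from typing import Sequence, Tuple

# Hypothetical per-triangle culling tests; conventions are assumptions, see comments.
Vec2 = Tuple[float, float]
Vec4 = Tuple[float, float, float, float]  # clip-space position (x, y, z, w)


def is_back_facing(p0: Vec2, p1: Vec2, p2: Vec2) -> bool:
    """Back Face Culling: twice the signed area of the screen-space triangle.
    Assumes counter-clockwise (positive area) means front-facing."""
    area2 = (p1[0] - p0[0]) * (p2[1] - p0[1]) - (p2[0] - p0[0]) * (p1[1] - p0[1])
    return area2 <= 0.0  # clockwise or degenerate triangles are culled


# One "outside" test per frustum plane (Direct3D-style clip space assumed).
FRUSTUM_TESTS = (
    lambda x, y, z, w: x < -w,   # left
    lambda x, y, z, w: x > w,    # right
    lambda x, y, z, w: y < -w,   # bottom
    lambda x, y, z, w: y > w,    # top
    lambda x, y, z, w: z < 0.0,  # near
    lambda x, y, z, w: z > w,    # far
)


def outside_frustum(tri: Sequence[Vec4]) -> bool:
    """View Frustum Culling: reject the triangle only if all three vertices are
    outside the SAME plane. A triangle straddling the frustum is kept."""
    return any(all(outside(*v) for v in tri) for outside in FRUSTUM_TESTS)
```

Note that a triangle crossing the edge of the screen survives this test; deciding what to do with it is exactly the partial-visibility problem discussed next.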

Culling can also be performed by fixed-function hardware, which completely eliminates non-visible triangles and even entire non-visible objects. But what happens when an object is only partially visible? Depending on how the hardware is implemented, it either keeps the whole object or eliminates it completely.
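The all-or-nothing behaviour can be illustrated with a common object-level test: a bounding sphere checked against the frustum planes. The article does not say which test any particular hardware uses, so treat the bounding-sphere approach, the plane representation (normalized planes, with a·x + b·y + c·z + d ≥ 0 meaning "inside") and the function names as assumptions.

```python
from enum import Enum
from typing import Sequence, Tuple

# Hypothetical object-level culling; planes assumed normalized, inside where dist >= 0.
Plane = Tuple[float, float, float, float]  # (a, b, c, d)


class Visibility(Enum):
    OUTSIDE = 0
    INSIDE = 1
    PARTIAL = 2


def classify_sphere(center: Tuple[float, float, float], radius: float,
                    planes: Sequence[Plane]) -> Visibility:
    result = Visibility.INSIDE
    for a, b, c, d in planes:
        dist = a * center[0] + b * center[1] + c * center[2] + d
        if dist < -radius:
            return Visibility.OUTSIDE  # entirely behind one plane
        if dist < radius:
            result = Visibility.PARTIAL  # the sphere crosses this plane
    return result


def coarse_cull(center, radius, planes) -> bool:
    """All-or-nothing policy: only fully outside objects are dropped;
    PARTIAL objects are kept whole, including their invisible triangles."""
    return classify_sphere(center, radius, planes) is Visibility.OUTSIDE
```

With this policy, an object that is 90% off-screen is still sent down the pipeline in full, which is the inefficiency the next section addresses.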

What is the Geometry Engine in a current GPU?

Having explained the problems with the Primitive Assembler and the Clipper, we can now say what the Geometry Engine is: a new piece of hardware that combines the capabilities of the Primitive Assembler and the Clipper, but with greater precision, since it can eliminate superfluous geometry at the vertex level. This means that if an object is partially off-screen or only partly hidden, the Geometry Engine removes the non-visible vertices and rebuilds only the visible part.
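"Removing the non-visible vertices and rebuilding the visible part" is, in software terms, polygon clipping. The article does not say which algorithm the Geometry Engine uses; the sketch below uses the textbook Sutherland-Hodgman method against a single plane, followed by a fan triangulation of the result. The plane convention (inside where a·x + b·y + c·z + d ≥ 0) and the function names are assumptions.

```python
from typing import List, Tuple

# Hypothetical clipping sketch (Sutherland-Hodgman against one plane).
Vec3 = Tuple[float, float, float]
Plane = Tuple[float, float, float, float]  # inside where a*x + b*y + c*z + d >= 0


def signed_distance(p: Vec3, plane: Plane) -> float:
    a, b, c, d = plane
    return a * p[0] + b * p[1] + c * p[2] + d


def clip_polygon(poly: List[Vec3], plane: Plane) -> List[Vec3]:
    """Drop vertices outside the plane and create new ones where edges cross it."""
    out: List[Vec3] = []
    for i, cur in enumerate(poly):
        nxt = poly[(i + 1) % len(poly)]
        dc, dn = signed_distance(cur, plane), signed_distance(nxt, plane)
        if dc >= 0:
            out.append(cur)  # current vertex is visible: keep it
        if (dc >= 0) != (dn >= 0):  # the edge crosses the plane
            t = dc / (dc - dn)  # interpolation factor of the crossing point
            out.append(tuple(a_i + t * (b_i - a_i) for a_i, b_i in zip(cur, nxt)))
    return out


def fan_triangulate(poly: List[Vec3]) -> List[Tuple[Vec3, Vec3, Vec3]]:
    """Rebuild triangles from the clipped convex polygon."""
    return [(poly[0], poly[i], poly[i + 1]) for i in range(1, len(poly) - 1)]
```

Clipping the triangle (0,0,0), (2,0,0), (0,2,0) against the plane x ≤ 1 removes the vertex at x = 2 and yields a four-sided polygon, which is rebuilt as two visible triangles.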

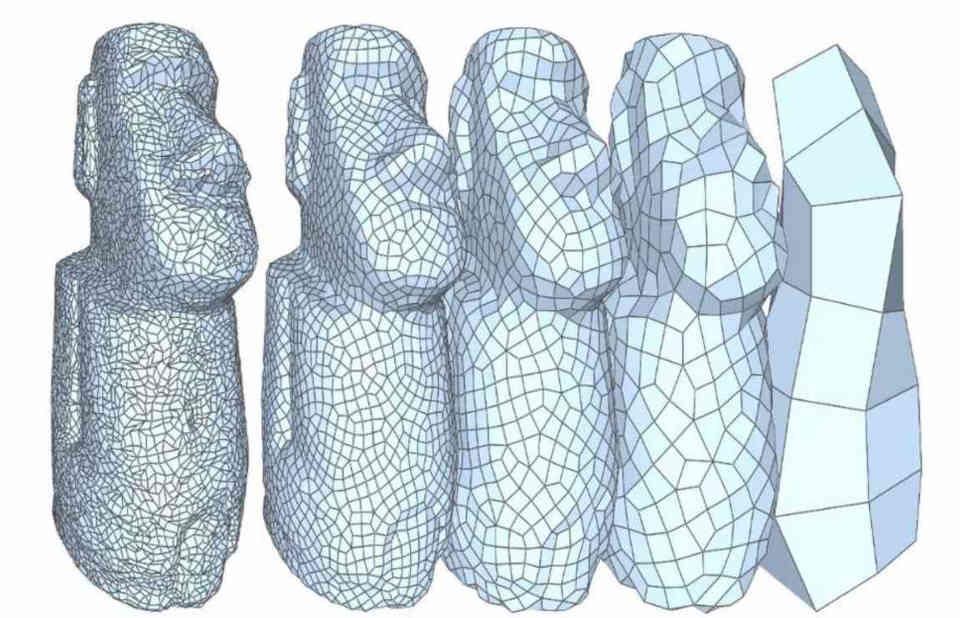

The other use of the Geometry Engine is generating levels of detail (LOD) for the objects in the scene, which are drawn with more or less detail depending on their distance. The Geometry Engine can intelligently remove vertices that are not visible at a given distance while preserving the object's original shape. Think of it as tessellation in reverse: instead of adding vertices to generate detail, it removes them.
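Two pieces of this idea can be sketched in software: choosing a level of detail from the distance to the camera, and reducing vertex count. For the reduction, the sketch swaps in vertex clustering, a classic but crude simplification technique in which vertices falling in the same grid cell are merged; it is not claimed to be what any Geometry Engine does. The distance thresholds, cell size and function names are hypothetical.

```python
import bisect
import math
from typing import Dict, List, Sequence, Tuple

# Hypothetical LOD helpers; thresholds and cell sizes are illustrative assumptions.
Vec3 = Tuple[float, float, float]


def select_lod(object_pos: Vec3, camera_pos: Vec3, thresholds: Sequence[float]) -> int:
    """0 is the most detailed mesh; each threshold (sorted ascending, scene units)
    that the distance reaches selects the next, coarser level."""
    return bisect.bisect_right(thresholds, math.dist(object_pos, camera_pos))


def cluster_decimate(vertices: List[Vec3], indices: List[int], cell: float):
    """'Tessellation in reverse' via vertex clustering: vertices in the same grid
    cell merge into their average, and triangles that collapse are dropped."""
    cell_of: Dict[Tuple[int, ...], int] = {}
    sums: List[List[float]] = []
    counts: List[int] = []
    remap: List[int] = []  # old vertex index -> merged vertex index
    for v in vertices:
        key = tuple(math.floor(c / cell) for c in v)
        if key not in cell_of:
            cell_of[key] = len(sums)
            sums.append([0.0, 0.0, 0.0])
            counts.append(0)
        k = cell_of[key]
        for axis in range(3):
            sums[k][axis] += v[axis]
        counts[k] += 1
        remap.append(k)
    new_vertices = [tuple(s / n for s in acc) for acc, n in zip(sums, counts)]
    new_indices: List[int] = []
    for t in range(0, len(indices), 3):
        a, b, c = (remap[i] for i in indices[t:t + 3])
        if a != b and b != c and a != c:  # keep only non-degenerate triangles
            new_indices += [a, b, c]
    return new_vertices, new_indices
```

A larger `cell` merges more vertices, so a renderer could map higher LOD indices to larger cell sizes.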

This is also important for the over-tessellation problem. When vertices are tessellated, the GPU does not know their actual position on screen, which leads to over-tessellation: the GPU calculates more vertices than needed for distant objects, vertices that are never seen even though their data is there. Because the Geometry Engine has access to the scene's geometry information, it can easily eliminate them, whereas the traditional Clipper only removes what is outside the frame or hidden.
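A common way to see (and avoid) over-tessellation is to compute how many pixels an edge covers on screen and tessellate only as much as that size justifies. The article attributes the fix to the Geometry Engine removing vertices; this sketch instead shows the screen-space reasoning behind it, as an assumption-labelled illustration. It assumes a pinhole camera and an edge facing the camera; the target of 8 pixels per segment and the function names are hypothetical, while 64 is the maximum tessellation factor of the Direct3D 11 tessellator.

```python
import math

# Hypothetical screen-space tessellation heuristic; parameters are illustrative.


def projected_length_px(edge_length: float, distance: float,
                        fov_y_radians: float, viewport_height_px: int) -> float:
    """Approximate on-screen length, in pixels, of an edge facing the camera."""
    return edge_length * viewport_height_px / (2.0 * distance * math.tan(fov_y_radians / 2.0))


def tess_factor(edge_length: float, distance: float, fov_y_radians: float,
                viewport_height_px: int, target_px_per_segment: float = 8.0,
                max_factor: float = 64.0) -> float:
    """Subdivide only enough to keep segments about target_px_per_segment long,
    clamped to [1, max_factor]. Distant edges fall back to factor 1 (no subdivision)."""
    px = projected_length_px(edge_length, distance, fov_y_radians, viewport_height_px)
    return max(1.0, min(max_factor, px / target_px_per_segment))
```

With a 90° vertical field of view at 1080p, a one-unit edge at distance 1 hits the 64 cap, at distance 10 gets a factor of about 6.75, and at distance 100 is not subdivided at all; a fixed factor would spend 64 subdivisions on that last edge for no visible benefit.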

The Geometry Engine in the new DX12 Ultimate pipeline

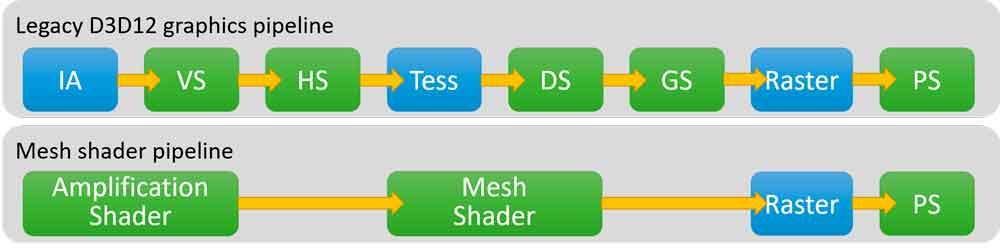

With DirectX 12 Ultimate, the part of the 3D pipeline before rasterization changes completely, since a good share of its functions move from fixed-function hardware to the GPU's shader units. Tessellation, for example, will eventually stop being performed by a fixed-function unit and move to the shader units, at which point that unit will disappear.
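In the shader-driven pipeline of DirectX 12 Ultimate, mesh shaders typically work on small pre-built chunks of geometry called meshlets, each with its own vertex list and local triangle indices. The greedy partitioning below is a minimal sketch; the limits of 64 vertices and 126 triangles are frequently cited defaults but should be treated as assumptions, and the function name is hypothetical. It requires `max_vertices >= 3`.

```python
from typing import Dict, List, Sequence, Tuple

# Hypothetical meshlet builder; limits are assumed defaults, not API requirements.


def build_meshlets(indices: Sequence[int], max_vertices: int = 64, max_triangles: int = 126):
    """Greedily split a triangle list into meshlets. Each meshlet stores the global
    vertex indices it uses plus its triangles as local indices into that list."""
    meshlets = []
    local: Dict[int, int] = {}  # global vertex index -> local index in current meshlet
    vertices: List[int] = []
    triangles: List[Tuple[int, ...]] = []

    def flush() -> None:
        if triangles:
            meshlets.append({"vertices": list(vertices), "triangles": list(triangles)})
        local.clear()
        vertices.clear()
        triangles.clear()

    for t in range(0, len(indices), 3):
        tri = indices[t:t + 3]
        new = [v for v in dict.fromkeys(tri) if v not in local]  # unique unseen vertices
        if len(vertices) + len(new) > max_vertices or len(triangles) == max_triangles:
            flush()  # current meshlet is full: start a new one
        for v in tri:
            if v not in local:
                local[v] = len(vertices)
                vertices.append(v)
        triangles.append(tuple(local[v] for v in tri))
    flush()
    return meshlets
```

Because each meshlet is self-contained, a shader can cull or process it independently, which is part of what lets this work move from fixed-function units to shader units.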

The Geometry Engine, however, will not disappear, because it is the only unit with access to the information on how the vertices are connected to each other. Any algorithm that needs to access the scene's geometry list has to go through the Geometry Engine, which controls the list of vertices and their relationships.
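The kind of connectivity information described here can be derived from an index list. The sketch below, with hypothetical function names, builds an edge-to-triangle map, the neighbours of each triangle, and the open border edges of a mesh. It only illustrates what "knowing how vertices connect" means in data terms, not how any GPU stores it.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Set, Tuple

# Hypothetical connectivity helpers for a triangle list.


def build_edge_adjacency(indices: Sequence[int]) -> Dict[Tuple[int, int], List[int]]:
    """Map each undirected edge (lo, hi) to the triangles that use it."""
    edges: Dict[Tuple[int, int], List[int]] = defaultdict(list)
    for t in range(len(indices) // 3):
        a, b, c = indices[3 * t:3 * t + 3]
        for u, v in ((a, b), (b, c), (c, a)):
            edges[(min(u, v), max(u, v))].append(t)
    return dict(edges)


def triangle_neighbours(indices: Sequence[int]) -> List[Set[int]]:
    """For every triangle, the set of triangles sharing an edge with it."""
    neighbours: List[Set[int]] = [set() for _ in range(len(indices) // 3)]
    for tris in build_edge_adjacency(indices).values():
        for t in tris:
            neighbours[t].update(x for x in tris if x != t)
    return neighbours


def boundary_edges(indices: Sequence[int]) -> List[Tuple[int, int]]:
    """Edges used by exactly one triangle form the open border of the mesh."""
    return [e for e, tris in build_edge_adjacency(indices).items() if len(tris) == 1]
```

For a quad split into two triangles, the shared diagonal is the only interior edge, and the four outer edges are reported as the border.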

Looking ahead, one of the things we are going to see is the GPU building the BVH structures used for Ray Tracing, and the piece of hardware that will evolve for this task is the Geometry Engine, which today has access to the GPU's L2 cache, where vertex data is stored temporarily. The amount of memory inside the GPU is expected to increase considerably in the coming years.
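For readers unfamiliar with BVHs, the structure can be built top-down from triangle bounding boxes. This is a generic software sketch, assuming a median split along the longest axis and at least one input triangle; the names are hypothetical, and it says nothing about how a GPU or its Geometry Engine would actually build one.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

# Hypothetical median-split BVH builder over triangles.
Vec3 = Tuple[float, float, float]


@dataclass
class Node:
    lo: Vec3  # minimum corner of the axis-aligned bounding box
    hi: Vec3  # maximum corner
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    triangles: Optional[List[int]] = None  # set only on leaf nodes


def tri_bounds(tri: Sequence[Vec3]) -> Tuple[Vec3, Vec3]:
    return (tuple(min(p[a] for p in tri) for a in range(3)),
            tuple(max(p[a] for p in tri) for a in range(3)))


def build_bvh(tris: Sequence[Sequence[Vec3]], ids: Optional[List[int]] = None,
              leaf_size: int = 2) -> Node:
    """Bound the triangles, sort them by centroid along the box's longest axis,
    split at the median and recurse until a node holds <= leaf_size triangles."""
    if ids is None:
        ids = list(range(len(tris)))
    bounds = [tri_bounds(tris[i]) for i in ids]
    lo = tuple(min(b[0][a] for b in bounds) for a in range(3))
    hi = tuple(max(b[1][a] for b in bounds) for a in range(3))
    if len(ids) <= leaf_size:
        return Node(lo, hi, triangles=list(ids))
    axis = max(range(3), key=lambda a: hi[a] - lo[a])
    # Summing the three vertex coordinates orders triangles the same way as their centroids.
    ids = sorted(ids, key=lambda i: sum(p[axis] for p in tris[i]))
    mid = len(ids) // 2
    return Node(lo, hi, build_bvh(tris, ids[:mid], leaf_size), build_bvh(tris, ids[mid:], leaf_size))
```

A ray tracer then tests a ray against a node's box first and only descends into children whose boxes it hits, which is why building this structure quickly matters as scene geometry grows.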