Graphics cards are currently aimed at two different markets. The most popular is gaming, where tens of millions of PC gamers use them to play with the best possible frame rate and/or the best graphics. The other is high-performance computing (HPC). How do the two types of graphics cards differ?

There is no doubt that graphics cards have evolved enormously in recent years. Not only can they render increasingly realistic graphics, but their computing power is also used for all kinds of scientific applications, which has let them reach markets beyond video games. Different markets call for different solutions, however, and as is well known, both NVIDIA and AMD have architectures designed for running video games on the one hand and for scientific computing on the other.

In general terms the two share a number of common elements, but they also carry a series of distinguishing features that make an HPC GPU architecture a very different creature from a gaming GPU. That is why we have decided to explain the differences between both types of GPU.

Data precision, the main difference between HPC and gaming GPUs

In a video game, a value that is not entirely accurate is not a problem, since the work usually relies on what we mathematically call an approximation: using lower-precision numbers that are still precise enough for a close simulation. The reason is that the number of bits we can assign to data in the ALUs is limited: the more bits each ALU handles, the more transistors it needs, and the larger the chip becomes. Since the transistor budget of a commercial GPU is limited and data precision is not that important, this sacrifice ends up being made.
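As a rough, first-order illustration of why extra bits cost transistors: in a simple array multiplier, the number of partial-product cells grows with the square of the significand width p. Assuming that quadratic model (real multipliers using Booth recoding or tree adders change the constants, and registers and datapaths scale closer to linearly), moving from FP32 (p = 24 bits, counting the implicit leading bit) to FP64 (p = 53 bits) gives roughly:

```latex
A_{\mathrm{mult}} \propto p^{2}
\quad\Rightarrow\quad
\frac{A_{\mathrm{FP64}}}{A_{\mathrm{FP32}}} \approx \left(\frac{53}{24}\right)^{2} = \frac{2809}{576} \approx 4.88
```

Under this simplified model, a double-precision multiplier needs close to five times the area of a single-precision one, which is the kind of cost a gaming GPU prefers to spend on more FP32 units instead.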

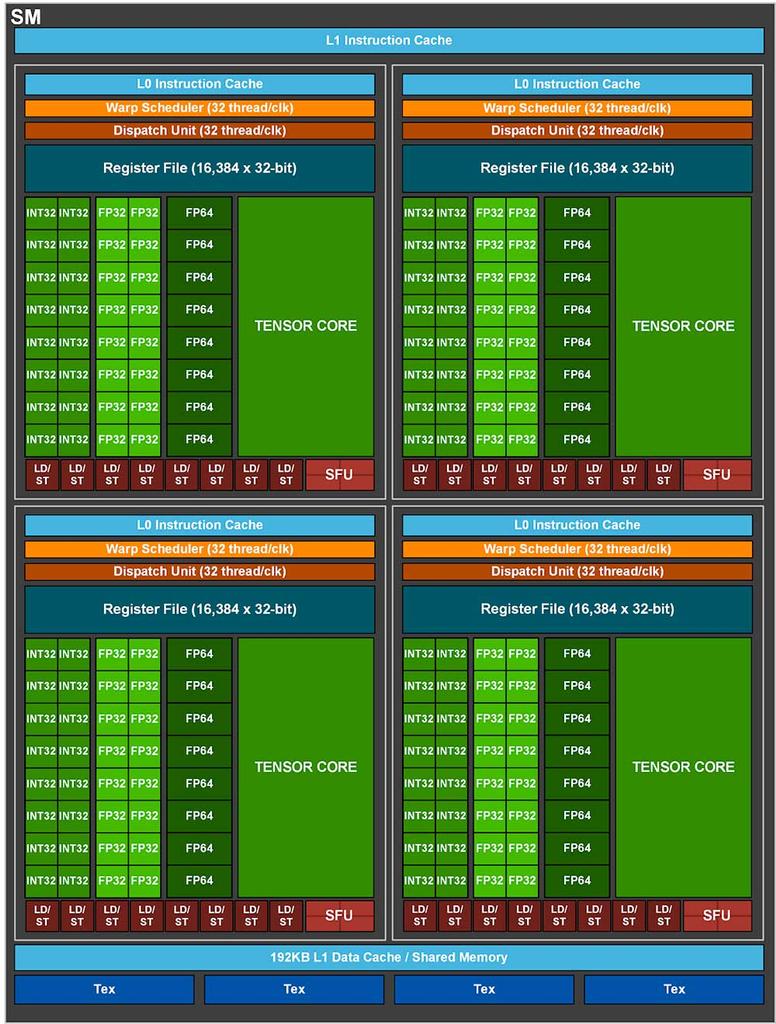

In scientific computing it is different: the precision of the data is critical to the calculations. A small variation in the data can make the model you are working with deviate too much or produce erroneous results. Imagine the disaster if a low-precision GPU were used to compute certain elements while developing a new drug, and a precision error ended up causing a health disaster. Hence, many GPUs designed for HPC include 64-bit (double-precision) floating-point ALUs, something gaming GPUs usually offer only at a small fraction of their single-precision throughput, for the obvious reason of transistor budget.
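The gap between the two formats is easy to see even on a CPU. The following is a minimal sketch in Python (standard library only) that emulates single precision by rounding every intermediate result to FP32 with `struct`; the helper names `to_f32` and `accumulate` are hypothetical, chosen for this illustration. It shows a tiny increment disappearing in FP32 and a long running sum drifting much further from the exact value in FP32 than in FP64.

```python
import struct


def to_f32(x: float) -> float:
    """Round a Python float (an IEEE 754 double) to the nearest FP32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def accumulate(value: float, n: int, single: bool) -> float:
    """Add `value` to a running total n times, rounding each step to FP32 if `single`."""
    total = 0.0
    step = to_f32(value) if single else value
    for _ in range(n):
        total = total + step
        if single:
            total = to_f32(total)  # emulate an FP32 register holding the sum
    return total


# 1e-8 is below half the FP32 machine epsilon (2**-23, about 1.19e-7),
# so the increment is lost in FP32 but kept in FP64.
print("FP32 keeps 1 + 1e-8:", to_f32(1.0 + 1e-8) != 1.0)
print("FP64 keeps 1 + 1e-8:", (1.0 + 1e-8) != 1.0)

# Summing 0.1 ten thousand times should give exactly 1000.
exact = 1000.0
err32 = abs(accumulate(0.1, 10_000, single=True) - exact)
err64 = abs(accumulate(0.1, 10_000, single=False) - exact)
print(f"FP32 error: {err32:.3e}, FP64 error: {err64:.3e}")
```

Rounding the double-precision sum of two FP32 values back to FP32 gives the same result as a native FP32 addition, so the emulation is faithful for this loop; a real GPU may still differ in operation order, for example when a reduction is parallelized.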

Supporting higher-precision instructions requires dedicated ALUs and, with them, changes throughout the shader unit: not only the ALUs themselves, but also the registers, the instruction set and the internal interconnect.

The cost structure is different on an HPC GPU

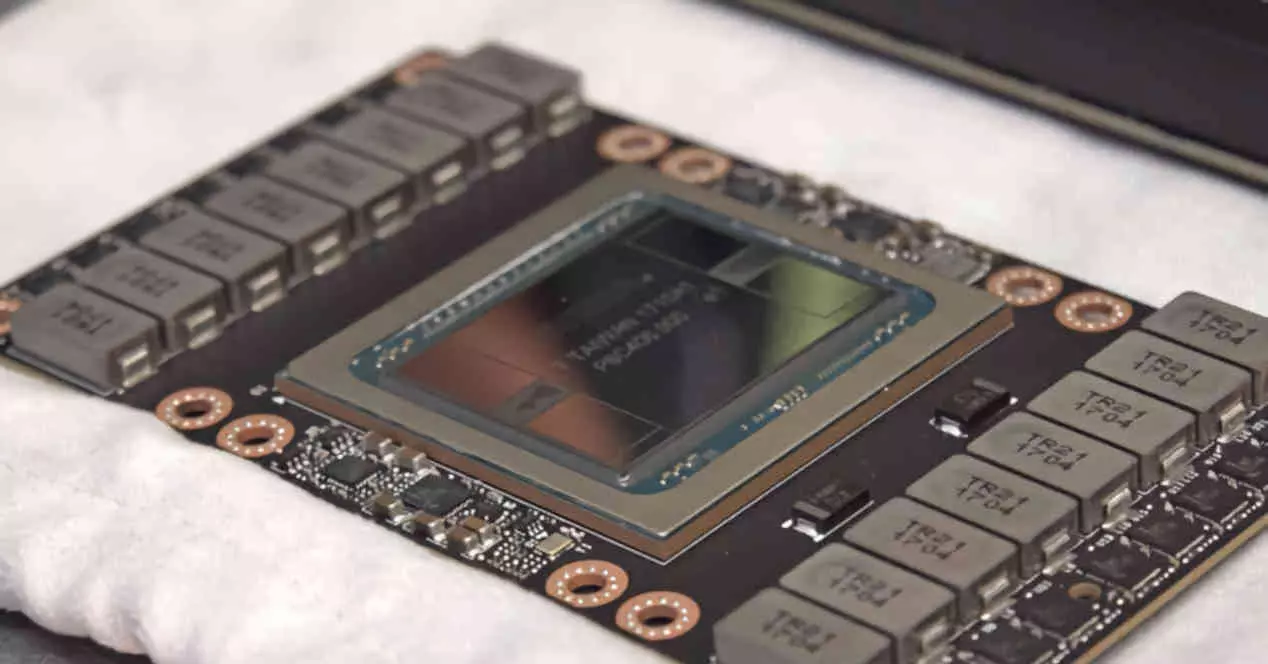

In the consumer market, for example, a very large GPU cannot be sold profitably because there is a ceiling on what customers will pay, and extremely expensive memory types such as HBM cannot be used either. HBM beats GDDR6 on two of the three key variables (footprint and power consumption) but loses on the third, cost, since GDDR6 is much cheaper.

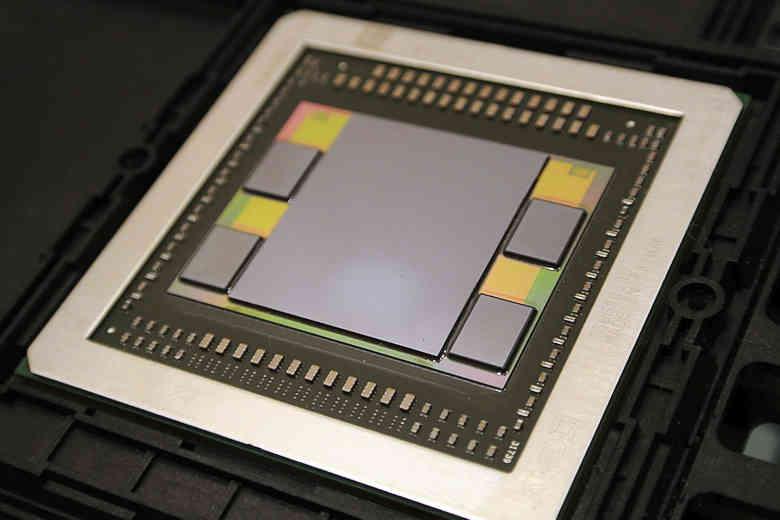

This cost limitation does not apply to HPC GPUs, since they are designed for a market whose high prices make HBM memory profitable. That is why GPUs of this type usually use HBM instead of GDDR6: if customers are willing to pay for a better type of memory, manufacturers will use it.

The other reason is that supercomputers and data centers built on HPC GPUs usually work to a power budget fixed in advance, so every watt has to be put to the best possible use. Because HBM consumes much less energy per bit transferred (pJ/bit) than GDDR6, it has established itself in that market, and upcoming HPC GPU designs are built around it. HBM in turn requires interposers and therefore 2.5D IC packaging.
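The pJ/bit figure translates directly into watts: memory interface power equals the bits transferred per second multiplied by the energy per bit. The sketch below is a back-of-the-envelope calculation under stated assumptions; the function name is hypothetical, and the 4 pJ/bit and 8 pJ/bit values are placeholders chosen to show the arithmetic, not measured figures for HBM or GDDR6.

```python
def memory_interface_power_watts(bandwidth_bytes_per_s: float, energy_pj_per_bit: float) -> float:
    """Power spent moving data: bits per second times joules per bit."""
    bits_per_s = bandwidth_bytes_per_s * 8
    joules_per_bit = energy_pj_per_bit * 1e-12  # 1 pJ = 1e-12 J
    return bits_per_s * joules_per_bit


# Hypothetical figures for illustration only, not vendor specifications.
BANDWIDTH = 1e12  # 1 TB/s
for label, pj in (("memory A (lower pJ/bit)", 4.0), ("memory B (higher pJ/bit)", 8.0)):
    print(f"{label}: {memory_interface_power_watts(BANDWIDTH, pj):.1f} W")
```

At 1 TB/s, every pJ/bit costs 8 W, which is why a lower energy per bit matters so much when a data center's power budget is fixed.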

Different markets, different needs

GPUs designed to render graphics use a number of units that we call fixed-function units because they are not programmable. Their job is to perform certain repetitive tasks involved in generating the real-time graphics we see on screen. HPC graphics cards are different: they do not need many of these units and therefore do not use them.

Typically, the simplest step in converting a gaming GPU into an HPC GPU is disabling the video output. But as we have seen in the previous two sections, HPC GPUs go well beyond that. That said, manufacturers tend to leave these fixed-function units in their HPC GPUs, which is paradoxical to say the least, especially in the GPUs used in NVIDIA Tesla cards, where those units go unused. AMD, on the other hand, has removed them entirely from its CDNA-based AMD Instinct accelerators.

Why are unused units still there? Because they take up little space, they are powered off when not in use, and removing them and reworking the whole design without them is more work than keeping them. After all, they are not complete processors, and they are small enough not to get in the way. The biggest change, however, is in the graphics command processor: most HPC GPUs have that unit disabled and therefore cannot process the display list the CPU creates to generate graphics.