Artificial Intelligence (AI) continues to advance rapidly. The progression from OpenAI’s GPT-3.5 to GPT-4 brought significant improvements in capabilities and potential applications. That progress comes with a substantial increase in resource consumption and cost, which raises critical questions about the sustainability and accessibility of AI technologies.

Understanding the Resource Intensity of AI Development

The shift from GPT-3.5 to GPT-4 required a dramatic increase in computational resources, with GPT-4 operations demanding ten times the resources of its predecessor. This order-of-magnitude jump highlights the broader implications of scaling AI models, especially as we anticipate future versions like GPT-5.

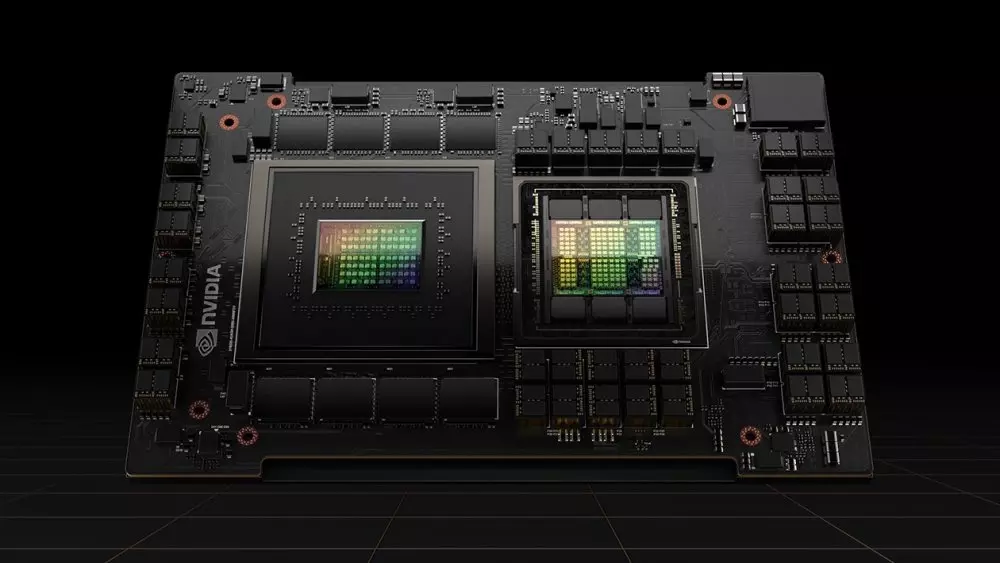

Energy Consumption: The energy required to train these sophisticated models is staggering. Predictions suggest that GPT-5, if developed, could require resources equivalent to 1 million NVIDIA H100 GPUs operating for three months. An operation of that scale has significant energy implications, comparable to the annual energy consumption of entire countries.
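To make the scale of that scenario concrete, here is a rough back-of-envelope sketch. It is not a measurement: the per-GPU power draw (about 700 W, roughly the peak board power of an H100 SXM module), the 90-day reading of "three months", and the data-center overhead multiplier (`pue`) are all assumptions introduced for illustration.

```python
# Back-of-envelope energy estimate for the hypothetical training run above.
# Only the GPU count and "three months" come from the article; the rest
# are illustrative assumptions.

GPU_COUNT = 1_000_000      # from the text: 1 million H100 GPUs
GPU_POWER_WATTS = 700      # assumption: approximate peak power per GPU
RUN_DAYS = 90              # assumption: "three months" taken as 90 days


def training_energy_twh(gpu_count, gpu_power_watts, run_days, pue=1.0):
    """Return the estimated energy in terawatt-hours (TWh).

    pue is a hypothetical overhead multiplier for cooling and power
    delivery; 1.0 means GPUs only.
    """
    hours = run_days * 24
    watt_hours = gpu_count * gpu_power_watts * hours * pue
    return watt_hours / 1e12  # convert Wh to TWh


gpu_only = training_energy_twh(GPU_COUNT, GPU_POWER_WATTS, RUN_DAYS)
with_overhead = training_energy_twh(GPU_COUNT, GPU_POWER_WATTS, RUN_DAYS, pue=1.3)

print(f"GPUs only:    {gpu_only:.2f} TWh")
print(f"With PUE 1.3: {with_overhead:.2f} TWh")
```

Under these assumptions the run lands at roughly 1.5 to 2 TWh. Changing any input shifts the result proportionally, so the figure is best read as an order of magnitude rather than a forecast.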

Cost Implications: The cost of training large language models (LLMs) has also skyrocketed. Estimates suggest that training a single LLM can currently cost up to $1 billion, and that the figure could rise to $10 billion in the near future. These figures highlight the economic burden and point to a future in which such technologies could become exclusive to the wealthiest corporations and institutions.
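A similar rough sketch shows how compute cost scales with the price of a GPU-hour. It reuses the scenario quoted earlier in the article (1 million GPUs for three months, taken here as 90 days). The hourly rates are hypothetical placeholders, not quoted prices, and the estimate ignores staffing, data, networking, and failed runs.

```python
# Rough compute-cost sketch. The hourly rates below are hypothetical
# placeholders chosen for illustration, not real price quotes.

GPU_COUNT = 1_000_000   # from the text: 1 million GPUs
RUN_DAYS = 90           # assumption: "three months" taken as 90 days


def training_cost_usd(gpu_count, run_days, usd_per_gpu_hour):
    """Return compute cost in US dollars: GPU-hours times hourly rate."""
    gpu_hours = gpu_count * run_days * 24
    return gpu_hours * usd_per_gpu_hour


for rate in (1.0, 2.0, 4.0):  # hypothetical USD per GPU-hour
    cost = training_cost_usd(GPU_COUNT, RUN_DAYS, rate)
    print(f"${rate:.2f}/GPU-hour -> ${cost / 1e9:.2f} billion")
```

The scenario amounts to 2.16 billion GPU-hours, so every additional dollar per GPU-hour adds about $2.16 billion to the bill. That linear relationship is why hardware efficiency and pricing dominate the total.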

The Future of AI Development: Sustainability and Accessibility

As AI continues to evolve, the industry faces a dual challenge: how to manage escalating resource demands, and how to ensure that AI technologies remain accessible and sustainable.

- Technological Innovations: Future advancements in AI could focus on increasing the efficiency of models through better algorithmic design and more sustainable hardware solutions. This could help mitigate some of the energy and resource demands of training AI models.

- Regulatory and Policy Measures: Given the significant energy implications, there may be a need for regulatory frameworks to guide the sustainable development of AI technologies. This could include policies aimed at reducing carbon footprints and promoting green energy solutions in data centers.

- Economic Models: The high costs associated with AI development could lead to new economic models that prioritize access and equity. This might include shared resources, open-source frameworks, or subsidized access to ensure that AI benefits a broad spectrum of society.

Conclusion

The development of AI models like GPT-4 and its successors presents a complex array of challenges and opportunities. With technologies like GPT-5 on the horizon, the AI community must balance the drive for innovation with the imperative for sustainability. Striking that balance would help ensure that AI continues to serve as a tool for advancement without compromising the environmental and economic stability of our global community.