Running AI models locally, rather than through cloud services such as ChatGPT, has recently gained traction among tech enthusiasts and professionals alike. A standout example of this trend is a project by StorageReview, which added an NVIDIA RTX A4000 to a NAS to run a ChatGPT-style chatbot entirely offline.

This approach underscores a growing interest in maintaining privacy and control over data while harnessing the power of AI.

The Advantages of Local AI Implementation

1. Enhanced Privacy and Security: Running a ChatGPT-style assistant locally on a NAS with suitable hardware keeps prompts and documents inside the private network instead of sending them to a third-party service. This reduces exposure to external breaches, which makes the setup particularly appealing for environments that handle sensitive or confidential information.

2. Customized AI Solutions: Local deployment allows for the customization and optimization of AI tools tailored to specific organizational needs. Companies can fine-tune their AI systems without depending on cloud-based services, leading to potentially better performance and integration with existing IT infrastructures.

3. Reduced Latency: Requests handled on the local network avoid the round trip to a remote data center, which can shorten response times for queries and data processing. How quickly answers are generated still depends on the local hardware.

How StorageReview Built Their Local AI

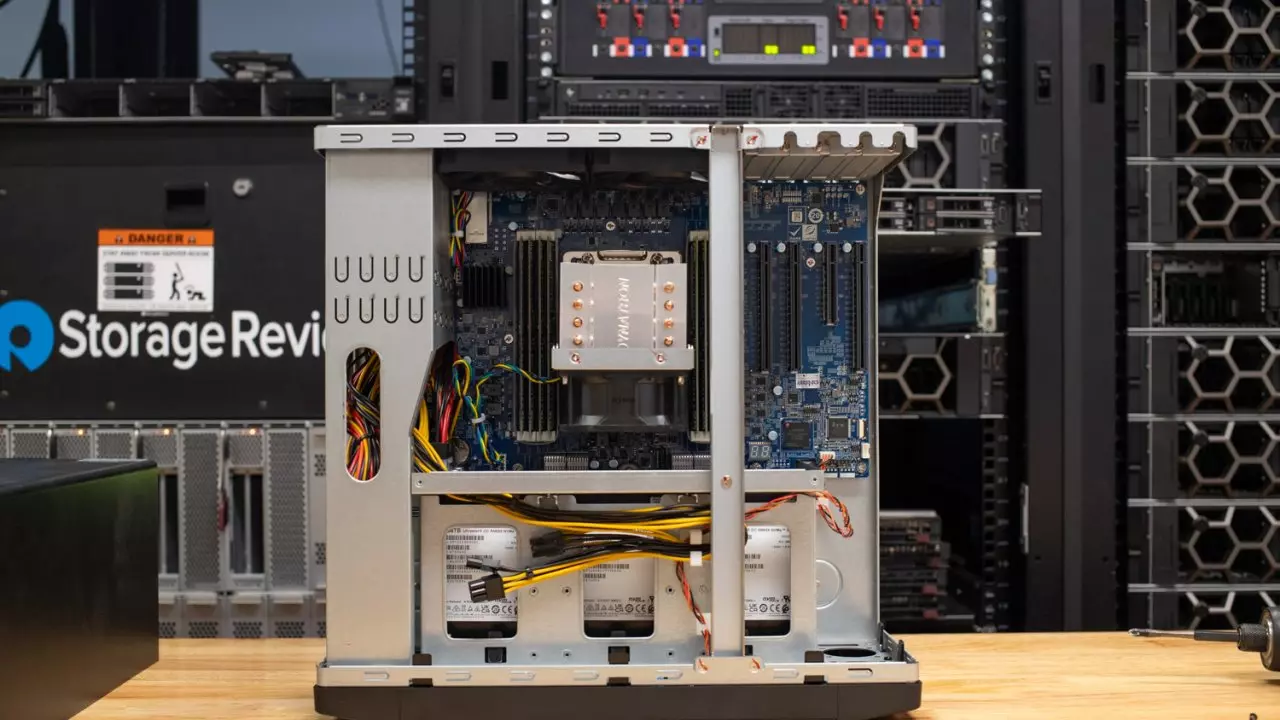

StorageReview’s project used the QNAP TS-h1290FX NAS, powered by an AMD EPYC processor with 256 GB of RAM and 12 NVMe drive bays. This high-performance setup was crucial for supporting the intensive computational demands of running a large language model locally.

The NVIDIA RTX A4000, a professional-grade graphics card built on the Ampere architecture that fits in a single PCIe slot, was critical. This GPU supports NVIDIA’s “Chat with RTX” solution, and its Tensor Cores give the NAS enough processing power to run a ChatGPT-style assistant locally.

Practical Applications and Accessibility

This type of AI setup is not only suitable for large corporations but can also be implemented in smaller businesses and even at home, provided there are sufficient resources. The requirements to replicate such a setup include:

- An NVIDIA GeForce RTX 30 Series or 40 Series GPU, or an NVIDIA RTX Ampere or Ada generation professional GPU such as the RTX A4000, with at least 8 GB of VRAM.

- At least 16 GB of RAM and Windows 11.

- A minimum of 35 GB of free storage space.
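
The numeric minimums in this list can be turned into a quick pre-flight check. The following is only a minimal sketch: the `SystemSpec` class and the `unmet_requirements` function are hypothetical names invented for illustration, the machine details are entered by hand rather than detected, and the GPU family is not checked because product names vary.

```python
from dataclasses import dataclass

# Minimums taken from the requirements list above.
MIN_VRAM_GB = 8
MIN_RAM_GB = 16
MIN_FREE_DISK_GB = 35
REQUIRED_OS = "Windows 11"


@dataclass
class SystemSpec:
    """Hypothetical container for hand-entered machine details."""
    vram_gb: float
    ram_gb: float
    free_disk_gb: float
    os_name: str


def unmet_requirements(spec: SystemSpec) -> list[str]:
    """Return one message per listed requirement the machine does not meet."""
    problems = []
    if spec.vram_gb < MIN_VRAM_GB:
        problems.append(f"VRAM: {spec.vram_gb} GB, need at least {MIN_VRAM_GB} GB")
    if spec.ram_gb < MIN_RAM_GB:
        problems.append(f"RAM: {spec.ram_gb} GB, need at least {MIN_RAM_GB} GB")
    if spec.free_disk_gb < MIN_FREE_DISK_GB:
        problems.append(
            f"Free storage: {spec.free_disk_gb} GB, need at least {MIN_FREE_DISK_GB} GB"
        )
    if spec.os_name != REQUIRED_OS:
        problems.append(f"OS: {spec.os_name}, need {REQUIRED_OS}")
    return problems


if __name__ == "__main__":
    # Example values only; replace them with the details of your own machine.
    machine = SystemSpec(vram_gb=16, ram_gb=32, free_disk_gb=100, os_name="Windows 11")
    problems = unmet_requirements(machine)
    if problems:
        print("Requirements not met:")
        for line in problems:
            print(" -", line)
    else:
        print("All listed requirements are met.")
```

Returning a list of messages instead of a single true/false value makes it clear which component would need upgrading before attempting the installation.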

For those interested, NVIDIA’s “Chat with RTX” tool is available for download, currently in its early stages (v0.2), offering a practical way for individuals to experiment with and learn from setting up their own local AI systems.

Conclusion

The move towards local AI deployment represents a significant shift in how we perceive and utilize artificial intelligence technologies. By hosting ChatGPT-style AI solutions on private networks, organizations can keep tighter control over their data and tailor solutions to their specific needs without giving up the benefits of AI.

This trend not only opens up new possibilities for data privacy and security but also democratizes access to advanced AI capabilities, allowing more users to explore the potential of AI technology within their own infrastructures.