If you are a big fan of hardware, or if you have studied something related to information technology, you have probably heard of Amdahl’s Law at some point. If not, and you are curious, in this article we explain what it is and how it affects computing as we know it, and hardware in particular.

Amdahl’s Law is named after Gene Amdahl, the computer architect who formulated it. In modern computing, and in development in particular, it is used to estimate how much a system improves when only part of it is improved. Does that sound like gibberish? Read on, and we will shortly explain it in simple terms.

What is Amdahl’s Law and what is it for?

The law states that: “The improvement obtained in the performance of a system due to the alteration of one of its components is limited by the fraction of time that component is used.”

The law is usually written as the following formula:

$$T_m = T_a \cdot \left( (1 - F_m) + \frac{F_m}{A_m} \right)$$

where:

- Fm is the fraction of time the system uses the improved subsystem.

- Am is the improvement factor introduced into that subsystem (how many times faster it becomes).

- Ta is the old runtime.

- Tm is the improved runtime.

This formula can be rewritten using the definition of speedup to calculate the speed gain (A):

$$A = \frac{T_a}{T_m} = \frac{1}{(1 - F_m) + \frac{F_m}{A_m}}$$
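A minimal Python sketch of these relationships follows, assuming the standard form of the law, Tm = Ta · ((1 − Fm) + Fm / Am), from which the speed gain is A = Ta / Tm. The function names `improved_runtime` and `speedup` are our own, chosen for illustration; the parameters mirror the symbols Ta, Fm and Am.

```python
def improved_runtime(ta: float, fm: float, am: float) -> float:
    """Return the new runtime Tm after speeding up one subsystem.

    ta: old runtime (Ta)
    fm: fraction of Ta spent in the improved subsystem (Fm), 0 <= fm <= 1
    am: improvement factor of that subsystem (Am), am > 0
    """
    if not 0.0 <= fm <= 1.0:
        raise ValueError("fm must be between 0 and 1")
    if am <= 0:
        raise ValueError("am must be positive")
    # The unimproved part (1 - fm) keeps its duration; the improved part shrinks by am.
    return ta * ((1.0 - fm) + fm / am)


def speedup(fm: float, am: float) -> float:
    """Return the overall speed gain A = Ta / Tm, which does not depend on Ta."""
    return 1.0 / ((1.0 - fm) + fm / am)


if __name__ == "__main__":
    # Hypothetical 10-second program, 30% of it sped up 2x.
    print(f"Tm = {improved_runtime(10.0, 0.3, 2.0):.2f} s")
    print(f"A = {speedup(0.3, 2.0):.3f}")
```

For that hypothetical 10-second program, the script prints Tm = 8.50 s and A = 1.176: the runtime only drops by 1.5 seconds even though part of the program became twice as fast.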

If the formal definition has left you none the wiser, here it is in our own words: when you change a single part of a system (a set of parts, such as a PC), the resulting performance improvement is limited by how much of the time that part is actually used.

In other words, with an example: the performance improvement your PC gets when you upgrade its RAM is limited by how much of the time the RAM is actually in use. Better now, right? But this probably raises another question: what does the time a component is used have to do with the performance improvement? To understand it, let’s look at another example.

Suppose that an algorithm within a program (for example, a calculation the processor performs) accounts for 30% of the program's total execution time, and we manage to make that algorithm run in half the time. Then:

- Am = 2

- Fm = 0.3

- A ≈ 1.18

This means that the program's execution speed improves by a factor of about 1.18, simply by modifying one of its subsystems. Note that this is far below the 2x gain of the algorithm itself, because the other 70% of the runtime is unchanged.
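Plugging the example's values into the speed-gain formula (assumed here in its standard form, A = 1 / ((1 − Fm) + Fm / Am)) shows where the figure comes from, and also the ceiling it can never exceed even if the algorithm became infinitely fast:

$$A = \frac{1}{(1 - 0.3) + \frac{0.3}{2}} = \frac{1}{0.7 + 0.15} = \frac{1}{0.85} \approx 1.18, \qquad \lim_{A_m \to \infty} A = \frac{1}{1 - 0.3} \approx 1.43$$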

What is this law for in computer science?

Now that we have covered the theory, what is this law used for in practice? It is mainly used by system engineers, both hardware and software, to decide whether or not an improvement to a system is worth making.
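To illustrate that kind of decision, the sketch below compares two hypothetical upgrades: one that doubles the speed of a component used 30% of the time, and one that makes a component used 80% of the time only 1.25 times faster. The candidate names and numbers are invented for illustration, and the law is assumed in its standard form.

```python
def speedup(fm: float, am: float) -> float:
    """Overall speed gain from Amdahl's Law: 1 / ((1 - Fm) + Fm / Am)."""
    return 1.0 / ((1.0 - fm) + fm / am)


# Hypothetical candidate improvements: description -> (Fm, Am)
candidates = {
    "2x faster component used 30% of the time": (0.3, 2.0),
    "1.25x faster component used 80% of the time": (0.8, 1.25),
}

# Overall speed gain of the whole system for each candidate.
gains = {name: speedup(fm, am) for name, (fm, am) in candidates.items()}

for name, gain in gains.items():
    print(f"{name}: overall speed gain {gain:.3f}")

best = max(gains, key=gains.get)
print(f"Best option: {best}")
```

The more modest upgrade wins (about 1.19 versus 1.18) because it affects a much larger share of the runtime, which is exactly the trade-off the law makes visible.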

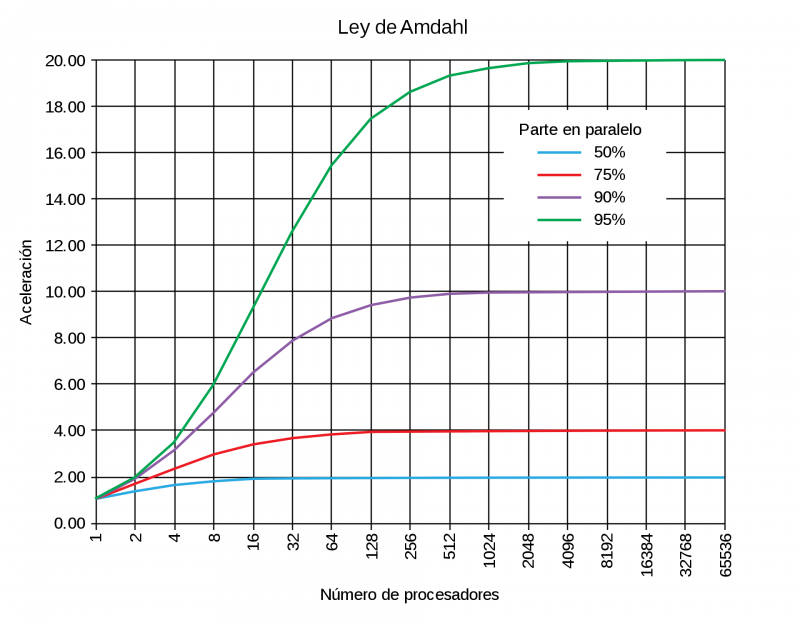

Because the speedup of a program running on multiple processor cores (or multiple processors, it makes no difference) in parallel computing is limited by the sequential fraction of the program, Amdahl’s Law lets engineers not only calculate but also see graphically whether improving the performance of one component or another is worthwhile, and to what extent.
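In the multi-core case, the improvement factor Am becomes the number of cores and Fm becomes the fraction of the program that can run in parallel. The sketch below assumes the parallel part splits perfectly evenly across cores; the function name `parallel_speedup` and the 95% parallel fraction are illustrative assumptions. It shows the speed gain flattening out toward the ceiling 1 / (1 − Fm) however many cores are added.

```python
def parallel_speedup(parallel_fraction: float, cores: int) -> float:
    """Amdahl speed gain when the parallel part is split evenly across `cores`."""
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / cores)


p = 0.95  # assumed: 95% of the runtime can run in parallel
for n in (1, 2, 4, 8, 16, 64, 256, 1024):
    print(f"{n:>5} cores: speed gain {parallel_speedup(p, n):6.2f}")

# Upper bound as the number of cores tends to infinity: only the serial part remains.
print(f"limit: {1.0 / (1.0 - p):.2f}")
```

Even with 1,024 cores the gain stays below 20, because the 5% serial part is never sped up.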

In this way they can judge, for example, whether improving the performance of a processor's transistors by, say, 50% is worthwhile as the transistor count grows, depending on how long those transistors are actually used in each of the operations the processor runs.