Sound cards have almost completely disappeared from the average PC. Their functions have been absorbed into the rest of our hardware, and the general feeling is that PC audio has barely evolved in the last two decades. However, this could change in the medium term. Will this hardware make a comeback? What changes will we see in audio in the coming years? Read on to find out.

Today, dedicated sound cards have all but vanished from the scene. The reason is that CPUs are now powerful enough to perform most of the functions those cards used to handle. The only thing that cannot be emulated in software? The DAC/ADC stage and other elements that depend on purely analog electronics. However, for some time now the general feeling has been that audio is given less and less importance, to the point where overall quality is suffering.

Integration of sound cards

Normally, when a task becomes a burden for the central processor, it is offloaded to a specialized coprocessor that performs it more efficiently. Sometimes, however, the opposite happens. The accessory hardware stops evolving in its specifications and is gradually absorbed into other components until it no longer matters as a separate part. That is what happened to sound cards. Today, the work once done by a small audio DSP chip is handled by the CPU. Some motherboards from certain brands still include one of these chips, but games rarely take full advantage of it. Because it has evolved so little over time, it has become a completely stagnant resource.

The future is spatial audio

When you talk to people about much more powerful audio for gaming, the first thing that comes to mind is 5.1 and 7.1 speaker systems for surround sound in the living room or game room. However, a game contains dozens, if not hundreds, of small audio tracks that together create the environment around us, and today they are all processed by the CPU. CPUs do not seem to struggle with that amount of data, but only because the processing applied to each track is so limited.
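To make the per-track work concrete, here is a minimal sketch of the kind of mixing the CPU does for every audio block. It assumes mono buffers, 2D positions and a simple inverse-distance gain law. The `Source` class and the `mix` and `distance_gain` helpers are hypothetical and not taken from any game engine. Real engines also apply panning, filtering and reverb per source.

```python
import math
from dataclasses import dataclass


@dataclass
class Source:
    """A hypothetical game sound: a mono sample buffer and a 2D position in metres."""
    samples: list[float]
    x: float
    y: float


def distance_gain(dx: float, dy: float, ref_dist: float = 1.0) -> float:
    """Inverse-distance (1/r) attenuation; sources closer than ref_dist are not boosted."""
    d = max(math.hypot(dx, dy), ref_dist)
    return ref_dist / d


def mix(sources: list[Source], listener: tuple[float, float], n: int) -> list[float]:
    """Sum every source into one mono block of n samples, each scaled by its distance gain."""
    out = [0.0] * n
    lx, ly = listener
    for src in sources:
        g = distance_gain(src.x - lx, src.y - ly)
        for i in range(min(n, len(src.samples))):
            out[i] += g * src.samples[i]
    return out


# Usage: two constant-level sources, 1 m and 2 m from a listener at the origin.
block = mix([Source([1.0] * 4, 1.0, 0.0), Source([1.0] * 4, 0.0, 2.0)], (0.0, 0.0), 4)
print(block)  # [1.5, 1.5, 1.5, 1.5]
```

The loop runs once per active source for every block. This is why the per-source cost stays deliberately small when a scene has hundreds of sounds.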

Recently, surround sound that adapts to the listener's position has also become very fashionable, especially thanks to the accelerometers and gyroscopes built into some high-end headphones. In theory, games could exploit this by taking advantage of features such as the spatial audio of Apple's AirPods.
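The core idea behind head-tracked audio can be sketched in a few lines. The source's direction is re-expressed relative to the head's current orientation, as reported by the headphones' sensors, before it is rendered. In this minimal sketch, the coordinate convention and the `head_yaw` input are assumptions. The constant-power stereo pan is a deliberately crude stand-in for the HRTF-based rendering that real spatial audio systems use. `relative_azimuth` and `stereo_gains` are hypothetical helpers.

```python
import math


def relative_azimuth(src_x: float, src_y: float, head_yaw: float) -> float:
    """Azimuth of a source relative to the listener's facing direction, in radians.

    Assumed convention: listener at the origin, +x is forward at yaw 0, +y is to the
    left, and angles (including head_yaw from the gyroscope) grow counter-clockwise.
    The result is wrapped to [-pi, pi]; positive means the source is to the left.
    """
    rel = math.atan2(src_y, src_x) - head_yaw
    return math.atan2(math.sin(rel), math.cos(rel))


def stereo_gains(azimuth: float) -> tuple[float, float]:
    """Constant-power stereo pan returning (left, right); a crude stand-in for HRTFs.

    Only the left/right component is kept, so front and back sound identical.
    """
    p = math.sin(azimuth)               # +1 = hard left, -1 = hard right
    theta = (p + 1.0) * math.pi / 4.0   # maps p to [0, pi/2]
    return math.sin(theta), math.cos(theta)


# A source straight ahead; the player then turns their head 90 degrees to the left.
print(stereo_gains(relative_azimuth(1.0, 0.0, 0.0)))          # centred
print(stereo_gains(relative_azimuth(1.0, 0.0, math.pi / 2)))  # now fully in the right ear
```

The sound stays fixed in the virtual world while the head moves, which is what makes the effect convincing.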

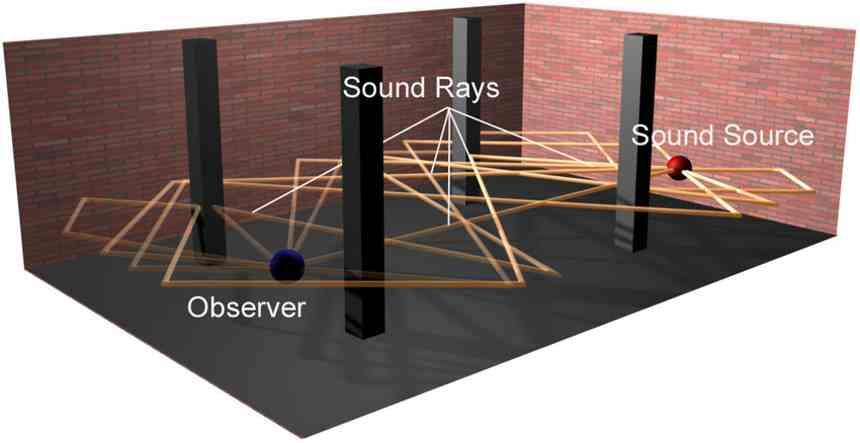

However, this means adding a new layer of complexity to games, and ray tracing will be key to it. But what does a graphics technique have to do with sound?

Ray Tracing Audio

The idea is not new. Just as ray tracing simulates the path of light through three-dimensional space, audio ray tracing does the same with sound. It traces how sound waves travel through a virtual environment, always taking into account the player's position in the game. In other words, the sound would be recalculated every frame.
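Full audio ray tracing is beyond a short example. The geometric idea can still be sketched with the image-source method, a classic geometric-acoustics technique closely related to ray tracing. Each wall reflection is modelled as a "mirror image" of the source, and the resulting path length gives that echo's delay and attenuation. This minimal sketch assumes a 2D rectangular room, a single reflection factor for every wall and first-order reflections only. `first_order_paths` is a hypothetical helper.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, air at roughly 20 degrees C


def first_order_paths(src, listener, width, depth, wall_reflection=0.8):
    """Direct path plus the four first-order wall reflections in a 2D rectangular room.

    The room spans [0, width] x [0, depth]. Each reflection is modelled by mirroring
    the source across a wall (the image-source method). Returns one (delay_s, gain)
    pair per path; gain is the reflection factor times 1/r, with r clamped to >= 1 m.
    """
    sx, sy = src
    lx, ly = listener
    images = [
        (sx, sy, 1.0),                          # direct path
        (-sx, sy, wall_reflection),             # mirrored in wall x = 0
        (2 * width - sx, sy, wall_reflection),  # mirrored in wall x = width
        (sx, -sy, wall_reflection),             # mirrored in wall y = 0
        (sx, 2 * depth - sy, wall_reflection),  # mirrored in wall y = depth
    ]
    paths = []
    for ix, iy, refl in images:
        r = math.hypot(ix - lx, iy - ly)
        paths.append((r / SPEED_OF_SOUND, refl / max(r, 1.0)))
    return paths


# Recomputed every frame as the player moves; here a single frame in a 10 m x 10 m room.
for delay, gain in first_order_paths((2.0, 5.0), (8.0, 5.0), 10.0, 10.0):
    print(f"delay {delay * 1000:6.2f} ms  gain {gain:.3f}")
```

Real scenes have arbitrary geometry, materials and higher-order reflections. That is why tracing rays against the same acceleration structures used for graphics becomes attractive, and also expensive.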

This would help create a more immersive environment. Dozens of audio channels would be generated at every moment based on distance, the player's current position, and the surrounding elements that attenuate the sound. Virtual environments would become more realistic not only for our eyes but also for our ears. However, we lack the hardware to process all that sound in real time and turn it into a leap in quality. Current sound hardware has poor specifications, and the work would place too much overhead on the processor.

Audio on the graphics card?

Unsurprisingly, one of the proposals from NVIDIA and AMD is to compute ray-traced audio on their graphics cards as part of the compute pipeline. The idea is to use the GPU's spare power for support tasks, such as physics calculations in games or, in some cases, audio processing. However, although this is possible, many developers choose not to use it.

But are graphics cards suitable for processing audio? The first problem is that, in games, any GPU power diverted to tasks other than rendering frames means fewer frames per second. Ideally, the hardware would be powerful enough to handle everything at the highest quality. In practice, compromises are made to keep PC gaming affordable.

Moreover, GPUs are not ideal for real-time audio processing, and several limitations must be taken into account. The reason? Graphics processing moves large amounts of data, so GPUs are designed to prioritize bandwidth over latency. Sound is the opposite: it consists of small blocks of data that must be processed with the lowest possible latency. That is why CPUs are currently better suited to processing sound.
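Some quick arithmetic shows how tight the audio deadline is. Each buffer must be ready before the previous one finishes playing, and a buffer of N samples at sample rate fs lasts N / fs seconds. The buffer sizes below are typical example values, not figures from the article, and the helper names are hypothetical.

```python
def buffer_latency_ms(frames_per_buffer: int, sample_rate_hz: int) -> float:
    """Duration of one audio buffer, i.e. the minimum delay that buffer adds."""
    return 1000.0 * frames_per_buffer / sample_rate_hz


def frame_time_ms(fps: float) -> float:
    """Time budget of one rendered video frame."""
    return 1000.0 / fps


# Example buffer sizes (assumed, not from the article) at a 48 kHz sample rate.
for size in (64, 256, 1024):
    print(f"{size} samples @ 48 kHz: {buffer_latency_ms(size, 48_000):.2f} ms")
print(f"one frame @ 60 fps: {frame_time_ms(60):.2f} ms")
```

A 256-sample buffer at 48 kHz lasts about 5.33 ms, roughly a third of a 16.67 ms frame at 60 fps. Any audio work sent to the GPU has to complete its round trip within that window, every time, with only a few kilobytes of data per buffer.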

More cores in your future CPU: the sound card of the future

Since few users are willing to pay extra for an advanced sound card, it seems clear that game audio will be computed on the processor. Currently, the data structures used for ray tracing in games are generated on the CPU and then copied to the graphics card's memory. Because a copy of this data is kept in system RAM every frame, the processor's additional cores can use it to generate surround audio.

We can assume that in a few years AirPods-style earbuds will be affordable for everyone and CPUs will have more cores. Immersive spatial audio is therefore poised to take a giant leap. It will be the biggest advance in game audio in the last 20 years, in an area that has been stagnant for more than a decade.